Why it matters

What it tends to unlock

Perception, mapping, detection, and safer motion decisions; cleaner autonomy loops when the robot needs environmental context; and higher-quality data for navigation, manipulation, or monitoring.

LiDAR appears across 22 tracked robots, concentrated in Humanoid, Commercial, and Cleaning. Use this page to understand why the signal matters, who relies on it most, and which live profiles deserve the first comparison click.

Tracked robots: 22

Ready now: 16

Manufacturers: 19

Public prices: 5

What to verify

Coverage and placement; how the sensor performs in messy conditions; which decisions actually rely on the sensor versus backup systems; and whether the label signals depth, proximity, or full-scene understanding.

Coverage

The heaviest concentration is in Humanoid (13), Commercial (3), and Cleaning (2). Top manufacturers include AGIBOT (2), LimX Dynamics (2), and Pudu Robotics (2).

Research brief

The useful questions here are how common LiDAR really is, which robot classes depend on it, and which live profiles are worth opening before you compare the whole stack.

Verified in the last 30 days: 15 (21 in the last 90 days)

Top category: Humanoid (13 tracked robots)

Paired most often with: Wi-Fi, IMU, and Bluetooth

Market snapshot

Category concentration, manufacturer repetition, and the strongest adjacent signals.

Use the structure first: which categories lean on LiDAR, which manufacturers repeat it, and what usually ships beside it.

Lead category: Humanoid, with 13 tracked robots anchoring this label.

Most repeated manufacturers: AGIBOT, LimX Dynamics, and Pudu Robotics, tied at 2 tracked robots each.

Most common adjacent signal: Wi-Fi, paired with LiDAR on 10 shared robots.

| # | Category | Usage |
|---|---|---|
| 1 | Humanoid | 13 robots |
| 2 | Commercial | 3 robots |
| 3 | Cleaning | 2 robots |
| 4 | Home Assistants | 2 robots |
| 5 | Research | 2 robots |

| # | Manufacturer | Usage |
|---|---|---|
| 1 | AGIBOT | 2 robots |
| 2 | LimX Dynamics | 2 robots |
| 3 | Pudu Robotics | 2 robots |
| 4 | Agile Robots | 1 robot |
| 5 | Agility | 1 robot |
| 6 | Boston Dynamics | 1 robot |

How to read the market

Category concentration tells you where the component is actually doing work, manufacturer repetition shows whether the signal is market-wide or vendor-specific, and pairings reveal which neighboring technologies usually ship alongside it.

Directory briefing

Open the clearest profiles first, then sweep the full inventory in a denser table. Featured cards are selected by readiness, image quality, and official source availability, so the first click is usually the most informative one.

Ready now: 16

Public price: 5

Official links: 22

Featured now: 3

How to scan this directory

Best first clicks

These robots score highest on readiness, public detail quality, and image clarity, making them the fastest way to understand how LiDAR shows up in practice.

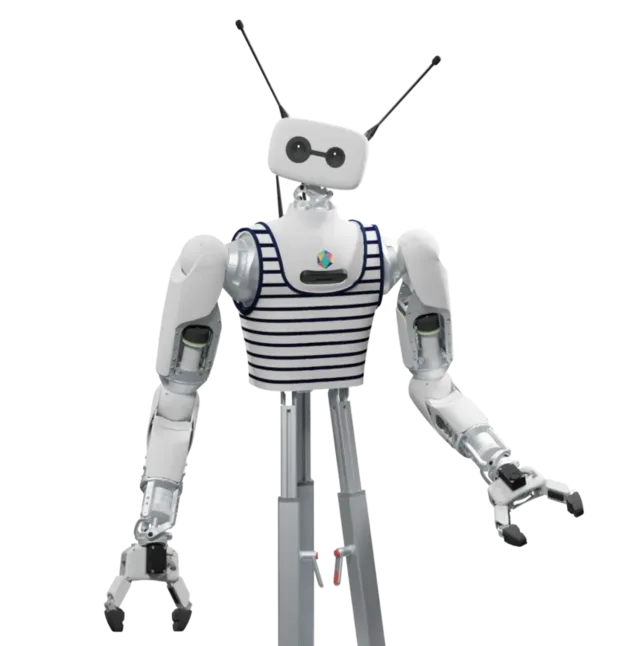

Reachy 2 · Pollen Robotics · Research

An open-source humanoid robot built by French company Pollen Robotics for research in manipulation, human-robot interaction, and embodied AI. It features two 7-DoF bio-inspired arms, a 3-DoF expressive head, and an omnidirectional mobile base with LiDAR. Pollen Robotics partnered with Hugging Face on its LeRobot open-source robotics initiative. The platform is open-source, with ROS 2 support and a Python SDK, and is designed for researchers, developers, and robotics enthusiasts who want a customizable platform. In April 2025, Pollen Robotics was acquired by Hugging Face, which plans to fully open-source both hardware and software.

Public price: $70,000 (Official Hugging Face/Pollen Robotics…)

Battery: 8 hours (mobile base, per official hardware docs)

Shortlist read: Active in the catalog with enough detail to review immediately.

Oli · LimX Dynamics · Humanoid

LimX Dynamics' full-size humanoid robot with advanced loco-manipulation capabilities. Oli is powered by COSA (Cognitive OS of Agents), an agentic operating system, and LimX Dynamics describes it as the first humanoid to combine whole-body motion control with high-level autonomous cognition, thinking while acting in real environments. It can navigate construction debris, sand, rocks, and uneven terrain, offers OTA-updatable motion libraries, and supports major simulation platforms. LimX Dynamics raised $200M in Series B funding.

Public price: Price TBA (No public price; official LimX order…)

Battery: About 2h (lab power-test room; actual data may vary)

Shortlist read: Shipping now; pricing still needs vendor confirmation.

MiPA · NEURA Robotics · Home Assistants

MiPA (My intelligent Personal Assistant) is NEURA Robotics' cognitive household and service robot for private homes, care, hospitality, retail, healthcare, and workplace support. Official product and reservation pages position it as a smart personal assistant that can transport items, serve, guide, interact, and support daily routines through modular attachments such as a backpack, shelf, table, hook, clip system, and tool-change modules.

NEURA's Automatica 2025 launch described MiPA as the market launch of a cognitive household and service robot with an open platform, Neuraverse skills, and partner hardware or IoT integrations. The current reservation page lists MiPA Home at €9,999 with a refundable €100 reservation fee.

Verified official specs include 16 degrees of freedom for the base robot, 2-8 hours of motion endurance, SLAM/LiDAR and AI-driven planning for autonomous mobility, 360° perception, person recognition up to three meters, environmental sensors, multimodal interaction (touch, display, microphone, speaker, LED, projector), Wi-Fi and Bluetooth, and automatic recharging. Exact height, weight, payload, charging time, delivery regions, and production shipment status are not publicly confirmed.

Public price: €9,999 (Official MiPA reservation page lists…)

Battery: 2-8 hours motion endurance (official datasheet)

Shortlist read: Commercial intent is clear, but delivery timing should be validated.

Sorted by readiness first so live, scannable profiles do not get buried under the long tail.

| Robot | Manufacturer | Category | Status | Price | Standout | Link |
|---|---|---|---|---|---|---|
| PowerDetect UV Reveal 2-In-1 | Shark | Cleaning | Available | $950 | Battery: 3+ hours (NeverStop Battery) | Official |
| W1 | Zeroth Robotics | Home Assistants | Available | $4,999 | Battery: Up to 25 hours standby | Official |
| A2 | AGIBOT | Humanoid | Available | Price TBA | Battery: 2 hours (700 Wh swappable battery) | Official |
| G1 | AGIBOT | Humanoid | Available | Price TBA | Payload: 3 kg continuous one-arm handling | Official |
| Oli | LimX Dynamics | Humanoid | Available | Price TBA | Battery: About 2h (lab power-test room; actual data may vary) | Official |
| pib.Pro | isento robotics GmbH | Humanoid | Available | Price TBA | Payload: Official page says higher payload than the maker-focused pib platform but does not publish a payload rating; Humanoid.Guide lists 4 kg strength on an unverified profile | Official |
| Reachy 2 | Pollen Robotics | Research | Active | $70,000 | Battery: 8 hours (mobile base, per official hardware docs) | Official |
| BellaBot | Pudu Robotics | Commercial | Active | Price TBA | Battery: 13 hours (no load) | Official |
| Digit | Agility | Humanoid | Active | Price TBA | Battery: ~4 hours | Official |
| DR02 | DEEPRobotics | Humanoid | Active | Price TBA | Payload: Up to 20 kg total payload reported by launch coverage; official product page does not list a payload spec | Official |
| ergoCub | Italian Institute of Technology | Humanoid | Active | Price TBA | Payload: ~10 kg (collaborative load) | Official |
| PUDU FlashBot Arm | Pudu Robotics | Commercial | Active | Price TBA | Battery: Up to 8 hours (no load) | Official |
| RoBee R | Oversonic Robotics | Humanoid | Active | Price TBA | Battery: Up to 8 hours | Official |
| Stretch | Boston Dynamics | Commercial | Active | Price TBA | Battery: Up to 16 hours (two full shifts) | Official |
| Walker S | UBTECH | Humanoid | Active | Price TBA | Size: 170 cm | Official |
| ZERITH H1 | Zerith Robotics | Cleaning | Active | Price TBA | Battery: 4 hours | Official |
| MiPA | NEURA Robotics | Home Assistants | Pre-order | €9,999 | Battery: 2-8 hours motion endurance (official datasheet) | Official |
| Iron | XPENG Robotics | Humanoid | Development | $150,000 | Battery: 4 hours active use | Official |
| Agile ONE | Agile Robots | Humanoid | Development | Price TBA | Size: 174 cm | Official |
| DRC-HUBO+ | KAIST | Research | Prototype | Price TBA | Battery: ~60 min (task-dependent) | Official |
| Kaleido 9 | Kawasaki Heavy Industries | Humanoid | Prototype | Price TBA | Max speed: ~4 km/h | Official |
| Luna | LimX Dynamics | Humanoid | Prototype | Price TBA | Battery: 5 hours per charge (Humanoid.Guide; not manufacturer-verified) | Official |

Quick answers

The short version of what this label means in the ui44 catalog, where it matters, and how to compare it without over-reading the marketing copy.

LiDAR currently appears on 22 tracked robots across 19 manufacturers. That makes this route useful for both deep research and fast shortlist scanning, not just one-off editorial reading.

The strongest concentration is in Humanoid (13), Commercial (3), and Cleaning (2). Category mix is the fastest clue for whether this component behaves like baseline plumbing or a more selective differentiator.

16 of the 22 tracked profiles are currently marked Available or Active. That means the label has live market relevance here, but you should still open the profiles with public pricing or official links first before treating it as a clean buyer signal.

Start with readiness, official source quality, and the standout spec column in the inventory table. On component routes, those three signals usually remove weak profiles faster than reading every descriptive paragraph.

The strongest shared-stack signals here are Wi-Fi (10), IMU (8), and Bluetooth (6). Use those pairings to branch into adjacent component pages when one label is too narrow for the decision.

5 matching robots currently expose public pricing. That is enough to create directional context, but not enough to treat one price bracket as the whole market. Use the directory to find the transparent profiles first, then widen the sweep.

Start with AGIBOT (2), LimX Dynamics (2), and Pudu Robotics (2). Repetition across manufacturers is often the clearest signal that the component is part of a stable market pattern rather than a one-off marketing callout.

The baseline explanation of what LiDAR is, why it matters, and how to think about it before comparing implementations.

LiDAR is a sensor component found in 22 robots tracked in the ui44 Home Robot Database. It measures distances to surrounding surfaces with laser light, and each platform uses that data differently for perception, mapping, navigation, or safety, depending on its implementation.

Component type: Sensor

Used by: 22 robots

Manufacturers: AGIBOT, Agile Robots, Pudu Robotics, and 16 more

Categories: Humanoid, Commercial, Research, and 2 more

Price range: $949.99 – $150k (public prices only)

Available now: 16 robots

Sensors are the perceptual backbone of any robot. They convert physical phenomena — light, sound, distance, motion, temperature — into digital signals that the robot's AI can process and act upon.

In the ui44 database, LiDAR is categorized under Sensor components. For a comprehensive explanation of all component types, consult the components glossary.

The sensor suite is one of the most important differentiators between robots. Robots with richer sensor arrays can navigate more complex environments, avoid obstacles more reliably, and perform more nuanced tasks.

Directly impacts what a robot can actually do in practice — not just on paper

Richer sensor arrays enable more complex navigation and interaction

Determines obstacle avoidance reliability and object/person recognition

Used in 22 robots across 5 categories (Humanoid, Commercial, Research, Home Assistants…), indicating broad applicability across the robotics industry.

Modern robot sensors work by emitting or detecting various forms of energy. The robot's processor fuses data from multiple sensors simultaneously (sensor fusion) to build a coherent understanding of its surroundings.

Active sensors

LiDAR and ultrasonic emit signals and measure reflections to determine distance and shape

Passive sensors

Cameras and microphones detect ambient light and sound without emitting anything

Sensor fusion

The processor combines data from all sensors simultaneously for a coherent environmental picture
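One of the simplest fusion rules, shown here as a hedged Python sketch, is inverse-variance weighting of independent distance estimates of the same surface. It assumes unbiased readings with known noise levels; real robots typically run richer filters (for example Kalman filters) over time, and the sensor noise figures below are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RangeReading:
    """One distance estimate in metres with its variance in square metres."""
    distance_m: float
    variance_m2: float


def fuse_ranges(readings: list[RangeReading]) -> RangeReading:
    """Combine independent range estimates by inverse-variance weighting.

    Each reading is weighted by 1 / variance, so more precise sensors dominate.
    The fused variance is 1 / sum(1 / variance), never larger than the best input.
    """
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / r.variance_m2 for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    return RangeReading(distance_m=fused, variance_m2=1.0 / total)


# Hypothetical sensors: a LiDAR return (sigma 2 cm) and an ultrasonic echo (sigma 10 cm).
lidar = RangeReading(distance_m=2.00, variance_m2=0.02 ** 2)
sonar = RangeReading(distance_m=2.10, variance_m2=0.10 ** 2)
result = fuse_ranges([lidar, sonar])
print(f"{result.distance_m:.3f} m, sigma {result.variance_m2 ** 0.5:.3f} m")
```

With these numbers the fused estimate sits at about 2.004 m, close to the LiDAR value, because the LiDAR reading carries 25 times the weight of the ultrasonic one.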

LiDAR Integration

Implementation varies by robot platform and manufacturer. Each robot integrates LiDAR differently depending on system architecture, use case, and target tasks. Integration with other onboard sensors and the main processing unit determines real-world performance.

Deeper technical framing, matched technology profiles, and the longer use-case treatment for LiDAR.

In-depth technical analysis of the one technology domain relevant to this component: LiDAR and time-of-flight ranging.

While the sections above cover general sensor principles, this analysis focuses on the particular technology domains relevant to LiDAR based on its implementation characteristics.

LiDAR (Light Detection and Ranging) and time-of-flight sensors measure distance by emitting light pulses and timing how long they take to reflect back from surfaces. This principle enables precise mapping of the robot's environment that is largely independent of ambient lighting, an advantage over camera-only systems that struggle in darkness or strong direct sunlight. In home robotics, LiDAR is a widely used choice for floor-plan mapping and systematic navigation.
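The time-of-flight principle reduces to one line of arithmetic: the pulse covers the sensor-to-surface distance twice, so d = c·t/2. A minimal Python sketch, assuming an idealized single pulse and the vacuum speed of light (the function name `tof_distance_m` is illustrative, not taken from any sensor SDK):

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value; light in air is ~0.03% slower, ignored here


def tof_distance_m(round_trip_s: float) -> float:
    """Return the one-way distance for a light pulse's measured round-trip time.

    The pulse travels to the surface and back, so the distance is half the path.
    """
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


# A return arriving 20 ns after emission corresponds to a surface roughly 3 m away.
print(f"{tof_distance_m(20e-9):.3f} m")
```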

Two main LiDAR architectures exist in consumer robotics. Mechanical spinning LiDAR uses a rotating mirror or emitter assembly to sweep a laser beam 360° around the robot, building a complete horizontal distance profile with each revolution. This technology is proven and reliable but involves moving parts that can wear over time. Solid-state LiDAR eliminates moving components by using arrays of emitters and detectors, or MEMS (micro-electromechanical) mirrors, to steer the beam electronically. Solid-state designs are more compact, potentially more durable, and increasingly cost-effective, though they may have slightly different field-of-view characteristics than spinning units.
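Each revolution of a spinning unit yields a list of ranges at evenly spaced angles. A minimal Python sketch of turning that horizontal profile into 2D points in the sensor frame follows; the parameter names loosely mirror the ROS `sensor_msgs/LaserScan` message fields (`angle_min`, `angle_increment`, `range_min`, `range_max`), and the 12 m default is an assumption borrowed from typical consumer-grade range.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, range_min=0.05, range_max=12.0):
    """Convert one sweep of range readings (metres) into (x, y) points.

    Reading i was taken at angle_min + i * angle_increment (radians),
    measured counter-clockwise from the sensor's forward x axis.
    NaN or out-of-range readings (no return, too close, too far) are skipped.
    """
    points = []
    for i, r in enumerate(ranges):
        if math.isnan(r) or not (range_min <= r <= range_max):
            continue
        theta = angle_min + i * angle_increment
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


# Four beams, 90 degrees apart; the third beam got no return.
pts = scan_to_points([1.0, 2.0, float("nan"), 0.5], angle_min=0.0, angle_increment=math.pi / 2)
print(pts)
```

A real spinning unit would report hundreds of readings per revolution (for example 360 readings with `angle_increment=math.radians(1)`), but the conversion is the same.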

Time-of-flight sensors used in robotics typically operate with infrared laser diodes at wavelengths around 850-940 nm, which are invisible to the human eye. Consumer robots generally use Class 1 eye-safe lasers, meaning the emitted power is low enough to be safe under reasonably foreseeable use, including direct eye exposure. The precision of these sensors, typically 1-3 cm at ranges up to 12 meters for consumer-grade units, enables robots to build room maps accurate enough for efficient navigation and furniture avoidance. More advanced implementations combine LiDAR distance data with camera imagery through sensor fusion, creating 3D environmental models that pair the geometric precision of LiDAR with the semantic richness of visual data.
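To see what the 1-3 cm and 12 m figures imply for the electronics, the pulse-timing relation d = c·t/2 can be run in reverse. This Python sketch assumes a simple single-pulse model; real sensors often reach such precision by other means, such as measuring phase shift or averaging many pulses, so treat the numbers as an order-of-magnitude guide only.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # vacuum value


def round_trip_time_s(distance_m: float) -> float:
    """Round-trip travel time for a pulse reflected at distance_m.

    Because d = c * t / 2, the same expression (t = 2 * d / c) also gives the
    timing resolution needed to tell apart two ranges distance_m apart.
    """
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


print(f"Round trip at 12 m: {round_trip_time_s(12.0) * 1e9:.1f} ns")
for precision_m in (0.01, 0.03):
    print(f"Timing resolution for {precision_m * 100:.0f} cm: "
          f"{round_trip_time_s(precision_m) * 1e12:.1f} ps")
```

The takeaway is the scale: a 12 m round trip lasts about 80 ns, while resolving 1 cm in a single shot would require timing on the order of tens of picoseconds.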

Beyond the high-level overview, understanding the technical foundations of sensor technologies like LiDAR helps buyers and researchers evaluate implementations more critically.

Every sensor converts a physical quantity into an electrical signal that can be digitized and processed. The raw analog output is conditioned through amplification, filtering, and A/D conversion before reaching the processor.
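The amplify, filter, and digitize chain can be illustrated with a toy Python model. The gain, the 4-sample moving-average filter, and the 12-bit ADC with a 3.3 V reference are all illustrative assumptions, not the front end of any particular LiDAR.

```python
def condition_and_digitize(samples_v, gain=10.0, window=4, vref_v=3.3, bits=12):
    """Toy sensor front end: amplify, low-pass filter, then quantize.

    - gain: amplifier gain applied to each raw analog voltage
    - window: length of a moving-average filter (a very simple low-pass filter)
    - vref_v, bits: ADC full-scale reference voltage and resolution
    Returns integer ADC codes clamped to [0, 2**bits - 1].
    """
    amplified = [v * gain for v in samples_v]

    # Moving average over the last `window` samples (fewer at the start).
    filtered = []
    for i in range(len(amplified)):
        chunk = amplified[max(0, i - window + 1): i + 1]
        filtered.append(sum(chunk) / len(chunk))

    # Map 0..vref_v onto 0..max_code and clamp anything outside that range.
    max_code = 2 ** bits - 1
    codes = []
    for v in filtered:
        code = round(v / vref_v * max_code)
        codes.append(min(max(code, 0), max_code))
    return codes


codes = condition_and_digitize([0.10, 0.12, 0.08, 0.10])
print(codes)
```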

Sensor performance involves key metrics with inherent engineering trade-offs.

Sensor technology in robotics has evolved dramatically over the past decade.

Early home robots relied on simple bump sensors and infrared proximity detectors

Today's platforms incorporate multi-spectral cameras, solid-state LiDAR, and millimeter-wave radar

Miniaturization: sensors that filled circuit boards now fit into fingernail-sized packages

Next frontier: sensor fusion at the hardware level — multiple sensing modalities in single chip-scale packages

No sensor is perfect in all conditions. Understanding limitations is critical for evaluating robots in specific environments.

Key application domains for sensor technologies like LiDAR.

Sensors enable robots to build maps of their environment, detect obstacles in real time, and plan collision-free paths. This is essential for both indoor robots (navigating furniture and doorways) and outdoor robots (handling terrain variations and weather conditions). The quality and coverage of the sensor array directly determines how reliably a robot can navigate without human intervention.
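To make the mapping step concrete, here is a deliberately simplified Python sketch of marking a 2D occupancy grid from one scan: cells a beam passes through become free, and the cell where it ends becomes occupied. Production mappers usually track log-odds probabilities and use exact ray traversal (such as Bresenham's line algorithm); the half-cell ray sampling and the three-state grid here are simplifying assumptions, and the function names are hypothetical.

```python
import math

UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def world_to_cell(x, y, resolution):
    """Map world coordinates (metres) to (row, col); the grid origin is at (0, 0)."""
    return math.floor(y / resolution), math.floor(x / resolution)


def mark_scan(grid, resolution, robot_x, robot_y, heading, scan):
    """Update grid (a list of rows) in place from (bearing, range) pairs.

    Bearings are radians relative to the robot heading; ranges are metres.
    """
    rows, cols = len(grid), len(grid[0])
    step = resolution / 2  # sample each beam every half cell
    for bearing, rng in scan:
        angle = heading + bearing
        # Samples taken before the return lie in empty space.
        # Earlier obstacles are never cleared in this simplified version.
        for k in range(int(rng / step)):
            d = k * step
            row, col = world_to_cell(robot_x + d * math.cos(angle),
                                     robot_y + d * math.sin(angle), resolution)
            if 0 <= row < rows and 0 <= col < cols and grid[row][col] != OCCUPIED:
                grid[row][col] = FREE
        # The return itself marks an obstacle.
        row, col = world_to_cell(robot_x + rng * math.cos(angle),
                                 robot_y + rng * math.sin(angle), resolution)
        if 0 <= row < rows and 0 <= col < cols:
            grid[row][col] = OCCUPIED
    return grid


grid = [[UNKNOWN] * 5 for _ in range(5)]
mark_scan(grid, resolution=1.0, robot_x=0.5, robot_y=2.5, heading=0.0, scan=[(0.0, 3.0)])
print(grid[2])
```

After the call, row 2 reads free, free, free, occupied, unknown: the beam crossed three empty cells and hit something in the fourth.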

Advanced sensors allow robots to identify objects by shape, color, and texture, enabling tasks like picking up items, sorting packages, or recognizing faces. Depth-sensing technologies are particularly important for calculating object distances and sizes, which is necessary for precise manipulation in both home and industrial settings.

In environments shared with humans, sensors provide the critical safety layer that prevents robots from causing harm. Proximity sensors, bumper sensors, and vision systems work together to detect people and obstacles, triggering immediate stop or avoidance maneuvers. This is a fundamental requirement for any robot operating in homes, hospitals, or public spaces.
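The stop-or-avoid behavior described above can be sketched as a check on the nearest LiDAR return in front of the robot. This is an illustrative Python toy with made-up zone radii, not a safety function; robots that rely on LiDAR for human safety typically use certified safety laser scanners with configured protective fields and safety-rated controllers.

```python
import math


def safety_action(scan, stop_radius=0.3, slow_radius=0.8, half_width=math.radians(45)):
    """Return "stop", "slow", or "go" from (bearing, range) pairs.

    Bearings are radians in [-pi, pi] with 0 straight ahead; ranges are metres.
    Only valid returns inside the forward sector of +/- half_width count.
    """
    nearest = math.inf
    for bearing, rng in scan:
        if abs(bearing) <= half_width and not math.isnan(rng) and rng > 0:
            nearest = min(nearest, rng)
    if nearest <= stop_radius:
        return "stop"
    if nearest <= slow_radius:
        return "slow"
    return "go"


print(safety_action([(0.0, 0.2)]))  # obstacle 20 cm straight ahead
print(safety_action([(math.radians(90), 0.1), (0.0, 2.0)]))  # close object to the side, clear ahead
```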

Sensors can measure temperature, humidity, air quality, and other environmental parameters. Robots equipped with these sensors can perform automated monitoring rounds in warehouses, data centers, or homes, alerting users to abnormal conditions like water leaks, temperature spikes, or poor air quality.

Microphones, cameras, and touch sensors enable natural interaction between robots and humans. These sensors allow robots to recognize voice commands, detect gestures, respond to touch, and maintain appropriate social distances during conversations or collaborative tasks.

Visit each robot's detail page to see which capabilities are available on specific models.

Manufacturer mix, specs context, price context, category overlap, and adjacent components worth branching into next.

LiDAR is used by 19 manufacturers, a sign of how widely the technology is deployed across the robots tracked here.

| Manufacturer | Models |
|---|---|
| AGIBOT | 2 robots |
| Pudu Robotics | 2 robots |
| LimX Dynamics | 2 robots |
| Agile Robots | 1 robot |
| Agility | 1 robot |
| DEEPRobotics | 1 robot |
| KAIST | 1 robot |
| Italian Institute of Technology | 1 robot |
| XPENG Robotics | 1 robot |
| Kawasaki Heavy Industries | 1 robot |
| NEURA Robotics | 1 robot |
| isento robotics GmbH | 1 robot |
| Shark | 1 robot |
| Pollen Robotics | 1 robot |
| Oversonic Robotics | 1 robot |
| Boston Dynamics | 1 robot |
| Zeroth Robotics | 1 robot |
| UBTECH | 1 robot |
| Zerith Robotics | 1 robot |

Side-by-side comparison of all 22 robots using LiDAR.

| Robot | Price | Status |
|---|---|---|
| A2 | Price TBA | Available |
| Agile ONE | Price TBA | Development |
| BellaBot | Price TBA | Active |
| Digit | Price TBA | Active |
| DR02 | Price TBA | Active |
| DRC-HUBO+ | Price TBA | Prototype |
| ergoCub | Price TBA | Active |
| G1 | Price TBA | Available |
| Iron | $150k | Development |
| Kaleido 9 | Price TBA | Prototype |
| Luna | Price TBA | Prototype |
| MiPA | €10.0k | Pre-order |
| Oli | Price TBA | Available |
| pib.Pro | Price TBA | Available |
| PowerDetect UV Reveal 2-In-1 | $949.99 | Available |
| PUDU FlashBot Arm | Price TBA | Active |
| Reachy 2 | $70k | Active |
| RoBee R | Price TBA | Active |
| Stretch | Price TBA | Active |
| W1 | $5.0k | Available |
| Walker S | Price TBA | Active |
| ZERITH H1 | Price TBA | Active |

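LiDAR spans 5 robot categories, from consumer to research platforms.

| Category | Robots using LiDAR | Avg. public price | Example robots |
|---|---|---|---|
| Humanoid | 13 | $150k (Iron is the only priced model) | A2, Agile ONE, Digit, +10 more |
| Commercial | 3 | No public price | BellaBot, PUDU FlashBot Arm, Stretch |
| Research | 2 | $70k (Reachy 2 is the only priced model) | DRC-HUBO+, Reachy 2 |
| Home Assistants | 2 | $7.5k (mixes USD and EUR list prices) | MiPA, W1 |
| Cleaning | 2 | $950 (PowerDetect is the only priced model) | PowerDetect UV Reveal 2-In-1, ZERITH H1 |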

Technologies most often paired with LiDAR across 22 robots.

Browse the full components directory or see the components glossary for detailed explanations of each technology.

5 of the 22 robots with LiDAR have public pricing, ranging from $949.99 to $150k; MiPA's price is listed in euros. The other 17 robots show no public price (custom, enterprise, or not yet announced).

Lowest: $949.99 (PowerDetect UV Reveal 2-In-1)

Average: $47.2k (5 robots with pricing)

Highest: $150k (Iron)
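The summary figures can be reproduced from the five public prices on this page, as in the short Python sketch below. MiPA is listed in euros, and the $47.2k average is consistent with adding €9,999 at face value alongside the dollar prices; a currency-consistent average would need an exchange rate, which this page does not provide.

```python
# Public prices listed on this page. MiPA is quoted in EUR; like the page's
# average, this sketch adds it at face value without currency conversion.
public_prices = {
    "PowerDetect UV Reveal 2-In-1": 949.99,
    "W1": 4_999.0,
    "MiPA": 9_999.0,  # EUR, not USD
    "Reachy 2": 70_000.0,
    "Iron": 150_000.0,
}

lowest = min(public_prices, key=public_prices.get)
highest = max(public_prices, key=public_prices.get)
average = sum(public_prices.values()) / len(public_prices)

print(f"Lowest: {lowest} at ${public_prices[lowest]:,.2f}")
print(f"Highest: {highest} at ${public_prices[highest]:,.0f}")
print(f"Average of {len(public_prices)}: ${average / 1000:.1f}k")
```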

Other sensor technologies tracked in ui44, ranked by adoption.

40 robots · 8 also use LiDAR

16 robots · 2 also use LiDAR

15 robots · 4 also use LiDAR

12 robots

12 robots

12 robots · 1 also uses LiDAR

12 robots · 1 also uses LiDAR

9 robots

Browse all Sensor components or use the robot comparison tool to evaluate how different sensor configurations perform across specific robot models.

The robotics sensor market is one of the fastest-growing segments in the broader sensor industry. As robots move from controlled industrial environments into unstructured home and commercial spaces, the demands on sensor technology increase dramatically.

Multi-modal sensing

Robots combine multiple sensor types (vision, depth, tactile, inertial) to build comprehensive environmental understanding

Miniaturization

Sensors that once occupied entire circuit boards now fit into fingernail-sized packages, making advanced sensing affordable for consumer robots

Edge AI integration

AI processing directly in sensor modules enables faster perception without cloud latency

Industry Adoption Snapshot

LiDAR is adopted by 22 robots from 19 manufacturers in the ui44 database, providing a data-driven view of real-world deployment patterns.

Certifications carried by robots incorporating LiDAR, indicating compliance with safety, EMC, and quality standards.

Platform compatibility, voice integration, and AI capabilities across robots with LiDAR.

The long-form buyer, maintenance, and troubleshooting material kept available without forcing it into the main scan path.

If LiDAR is an important factor in your robot selection, here are key considerations to guide your decision.

Coverage area

Does the sensor array provide 360° awareness or only forward-facing detection?

Range

How far can the robot sense obstacles or objects?

Resolution

How detailed is the sensor data for recognition tasks?

Redundancy

Are there backup sensors if one fails?

Serviceability

Are sensors user-serviceable, or do they require manufacturer maintenance?

A component is only as good as its integration. Check how the manufacturer has incorporated LiDAR into the overall robot design and software stack.

Review what other sensor technologies are paired with LiDAR in each robot — see the related components section.

Make sure the robot's category matches your use case. LiDAR serves different roles in different robot types.

Consider the manufacturer's reputation for software updates, support, and component reliability.

Compare Before You Buy

Use the ui44 comparison tool to evaluate robots with LiDAR side by side.

Sensors are among the most maintenance-sensitive components in a robot. Their performance can degrade over time due to physical wear, environmental exposure, and calibration drift. Understanding the maintenance profile of a robot's sensor suite helps set realistic expectations for long-term ownership and operation.

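Sensor durability varies significantly by type. Solid-state sensors like IMUs and accelerometers have no moving parts and typically last the lifetime of the robot, whereas mechanical spinning LiDAR relies on a rotating assembly whose moving parts can wear over time.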

Regular sensor maintenance primarily involves keeping optical surfaces clean. Camera lenses, LiDAR windows, and infrared emitters should be wiped with a soft, lint-free cloth to remove dust and fingerprints.

When evaluating sensor technology for long-term value, consider the manufacturer's track record for software updates that improve sensor utilization. A robot with good sensors and ongoing software development can actually improve its performance over time as algorithms are refined.

For the 22 robots in the ui44 database using LiDAR, we recommend checking the individual robot pages for manufacturer-specific maintenance guidance and support documentation. Each manufacturer has different support policies, update frequencies, and warranty terms that affect the long-term ownership experience of their sensor technologies.

Sensor-related issues are among the most common problems home robot owners encounter. Many sensor issues can be resolved with simple maintenance or environmental adjustments, while others may indicate hardware problems requiring manufacturer support. Understanding common failure modes helps you diagnose and resolve issues quickly, minimizing robot downtime.

For model-specific troubleshooting, visit the individual robot pages for the 22 robots using LiDAR. Each manufacturer provides model-specific support resources and diagnostic tools for their sensor implementations.

What to do next

This page should hand you off to the next useful comparison step, not strand you at the bottom of a long detail route.

Widen the layer

Open the full sensor workbench when LiDAR is only one part of the decision and you need the broader market map.

Side-by-side check

Move from label-level research into direct robot comparison once you know which profiles are documented well enough to trust.

Adjacent signal

Wi-Fi is the most common neighboring component on robots that already use LiDAR (10 shared robots), so it is the fastest next branch if you need stack context.