But a cloud-only robot brain is a bad default for the home. Homes have stairs, children, pets, glassware, private conversations, patchy Wi-Fi, closed doors, nighttime motion, and people who will not pause so a server can finish thinking. If the robot can move, see, hear, or touch, the important question is not simply "how smart is the AI?" It is where the AI decision happens.

This is why Amazon Astro remains such a useful early example. ui44 tracks Astro at $1,599.99 by invitation only, with two Qualcomm QCS605 chips, a Qualcomm SDA660, and Amazon's AZ1 Neural Edge in its AI stack. It is not a humanoid, and it will not do chores, but it shows the basic pattern: a home robot needs local perception and navigation even when it also depends on cloud services for Alexa, Ring, video, and remote monitoring.

What does on-device AI actually mean?

"On-device AI" does not mean a robot has a full frontier model trapped inside its shell. In robotics, it usually means some decisions happen locally on the robot or its dock rather than being sent to a vendor cloud first.

For buyers, split the robot brain into three layers:

| Robot decision layer | Where it should usually happen | Why it matters in a home |
|---|---|---|
| Reflexes and control | On the robot | Stopping, balancing, avoiding a foot, and not driving off a stair need low latency. |
| Perception and navigation | Mostly on the robot | Cameras, depth sensors, LiDAR, microphones, faces, pets, and rooms are privacy-sensitive and time-sensitive. |
| Language, planning, memory, and search | Hybrid or cloud | Long conversations, broad knowledge, task planning, and fleet learning often need larger models and frequent updates. |

The mistake is treating those layers as interchangeable. A robot can ask the cloud to plan tomorrow's cleaning route. It should not need the cloud to decide whether to stop before hitting a dog bowl.
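To make the layer split concrete, here is a minimal Python sketch that encodes the table above as a lookup. The names `Layer`, `Placement`, `Decision`, and `may_wait_for_cloud` are illustrative assumptions for this article, not part of any vendor SDK or of the ui44 database.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    REFLEX = "reflexes and control"
    PERCEPTION = "perception and navigation"
    REASONING = "language, planning, memory, and search"


class Placement(Enum):
    ON_ROBOT = "on the robot"
    MOSTLY_ON_ROBOT = "mostly on the robot"
    HYBRID_OR_CLOUD = "hybrid or cloud"


# Mirrors the three-row table; these defaults are the article's rule of thumb,
# not a vendor specification.
DEFAULT_PLACEMENT = {
    Layer.REFLEX: Placement.ON_ROBOT,
    Layer.PERCEPTION: Placement.MOSTLY_ON_ROBOT,
    Layer.REASONING: Placement.HYBRID_OR_CLOUD,
}


@dataclass(frozen=True)
class Decision:
    name: str
    layer: Layer


def may_wait_for_cloud(decision: Decision) -> bool:
    """Only reasoning-layer decisions may block on a network round trip."""
    return DEFAULT_PLACEMENT[decision.layer] is Placement.HYBRID_OR_CLOUD


# The two examples from the paragraph above (hypothetical Decision objects).
plan_route = Decision("plan tomorrow's cleaning route", Layer.REASONING)
stop_for_bowl = Decision("stop before hitting a dog bowl", Layer.REFLEX)
```

In this sketch, `may_wait_for_cloud(plan_route)` is true and `may_wait_for_cloud(stop_for_bowl)` is false, which is the whole point of keeping the layers separate.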

Recent industry reporting points in the same direction. CNBC's China Connection coverage in April 2026 described AI moving from internet-only products into physical hardware, with startups and automakers building devices that run AI locally. The same reporting quoted data-sovereignty concerns from cloud infrastructure builders and noted that robotics in particular will need powerful AI processed on devices. Separately, ChinaNews described Amap's Tutu guide-dog robot as an open-environment autonomy project that has to perceive, decide, and act without preset routes or human remote control. Those are not ordinary home products yet, but the direction is clear: physical AI cannot live entirely in a distant data center.

Why cloud-only robot brains are fragile

Cloud AI is attractive because it can be cheaper to launch, easier to update, and more conversational. For a stationary speaker, that tradeoff is tolerable. For a moving robot, it creates several buyer risks.

First, latency becomes a safety issue. A robot that waits on the network for a navigation decision is not just slow; it is unpredictable. The more the robot moves around people, climbs thresholds, follows a person, or manipulates objects, the less acceptable that becomes.

Second, privacy changes. A robot with cameras and microphones sees the most personal parts of a home: bedrooms, medicine bottles, children's toys, pets, calendars, routines, and visitors. If raw audio or video leaves the home by default, buyers should know exactly what is uploaded, why, how long it is kept, and whether it is used for training.

Third, outages become product failures. If Wi-Fi drops, a vendor cloud is down, or a subscription lapses, the robot should still be able to dock, stop, avoid obstacles, preserve maps, and perform basic local tasks. If it becomes a rolling paperweight, the AI architecture is part of the risk.

Fourth, "AI" can hide remote human help. Human-in-the-loop robotics can be reasonable, especially for early humanoids, assistive systems, and teleoperated research platforms. But the buyer should know when a robot is autonomous, when a remote operator can intervene, and what video or control access that operator gets.

The practical buyer rule is simple: the closer a decision is to motion, safety, or private sensor data, the closer it should be to the robot.
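The outage and latency risks can be sketched as a time budget with a local fallback. This is a simplified illustration for non-urgent decisions only (reflexes would never enter this path), and every name in it, including `decide_with_fallback`, `slow_cloud`, and `local_route`, is a hypothetical stand-in rather than any real robot API.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
import time

# Shared worker pool for cloud requests (size chosen arbitrarily for this sketch).
_POOL = ThreadPoolExecutor(max_workers=2)


def decide_with_fallback(cloud_planner, local_planner, budget_s):
    """Return (plan, source); use the cloud plan only if it arrives within budget_s."""
    future = _POOL.submit(cloud_planner)
    try:
        return future.result(timeout=budget_s), "cloud"
    except FutureTimeout:
        future.cancel()  # best effort; a call that is already running keeps running
        return local_planner(), "local"
    except Exception:
        # Network errors, vendor outages, lapsed subscriptions: same local answer.
        return local_planner(), "local"


def slow_cloud():
    time.sleep(0.3)  # simulated slow network round trip
    return "optimized multi-room route"


def broken_cloud():
    raise ConnectionError("vendor cloud unreachable")


def local_route():
    return "return to dock using the stored map"
```

Calling `decide_with_fallback(slow_cloud, local_route, 0.05)` falls back to the local plan because the simulated cloud call exceeds the budget. The design choice worth noticing is that the robot's behavior stays predictable: a late or failed cloud answer changes how clever the plan is, not whether the robot has one.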

What the ui44 database shows

The strongest products in the database are not pure edge robots or pure cloud robots. They are hybrids with different boundaries.

AGIBOT X2 is the obvious hardware-forward example. ui44 tracks the compact humanoid at $24,240, with the Ultra configuration listing RK3588 dual compute plus NVIDIA Orin NX 157 TOPS, 3D LiDAR, RGB-D camera, RGB cameras, Wi-Fi, Bluetooth, and optional 4G/5G. That is a lot of local compute for a 131 cm, 35-39 kg robot. It also matters because X2's useful manipulation limits are modest: 3 kg only in specific postures and 1 kg across the full arm range. When a humanoid has those physical limits, local safety and posture decisions are not a luxury.

AGIBOT A2 Ultra goes further upmarket, with a full-size 169 cm body, enterprise pricing, 3D LiDAR, RGB-D, 4G/5G, and NVIDIA Jetson Orin 64G plus a 16-core CPU in the ui44 record. That does not make it a home robot. It does show where serious embodied-AI platforms are going: sensor-rich robots with local compute first, cloud connectivity second.

The more interesting home-buyer examples are smaller. Clutterbot Rovie is a development-stage decluttering robot whose ui44 record describes computer-vision clutter detection, obstacle and stair avoidance, human and pet avoidance, and privacy-focused local processing according to the company's FAQ. That is exactly the right claim to scrutinize, because a robot that picks up toys will see floors, rooms, and family routines constantly.

Pophie, a desk-sized companion robot from InsBotics, is even more explicit about the hybrid split. ui44 tracks it at a $269 launch offer with a split edge/cloud AI architecture: on-device perception and real-time control, with cloud-based multimodal reasoning, memory, emotion modeling, and dialogue planning. That architecture makes sense for a companion: local sensing decides when it is being touched, looked at, or ignored; cloud AI can handle richer conversation if the user accepts the privacy tradeoff.

Asimov DIY Kit is a research example, not a normal consumer purchase. But its $15,000 target price and architecture are revealing: ui44 tracks onboard low-latency processing for reflex loops plus cloud-connected high-level reasoning. That is the hybrid model in one sentence. Fast physical decisions stay local; slower reasoning can be remote.

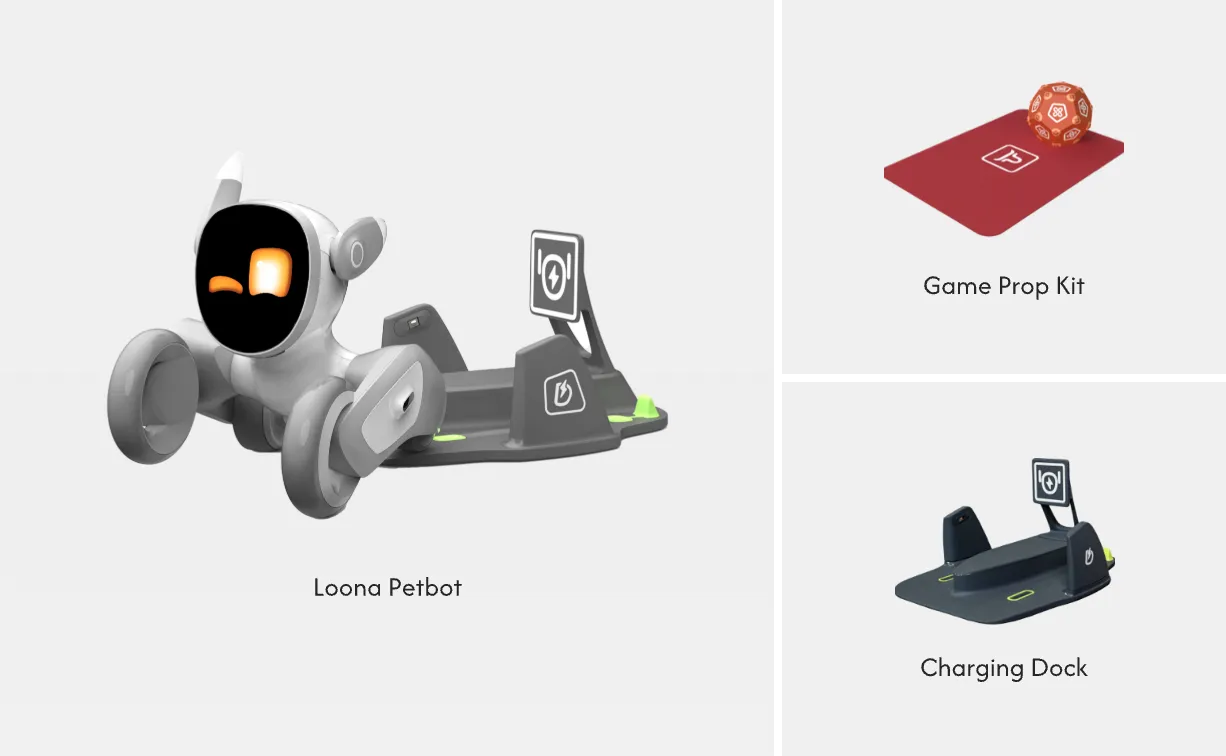

Companion robots show the same pattern at lower prices. Loona uses a quad-core Cortex-A53, a 5 TOPS BPU, 2 GB RAM, 8 GB eMMC, and ChatGPT-4o integration in the ui44 record. EBO X is a $999 family companion robot with GPT-4o mini integration plus Visual SLAM navigation. Samsung Ballie is still development-stage, but ui44 tracks Google Gemini, Samsung language models, SmartThings, autonomous navigation, a projector, pet and family monitoring, and reminders.

These robots are not all equally capable, but they expose the same buying question. Is the cloud being used for conversation and improvements, or is it being used as a crutch for basic perception and safety?

A buyer checklist for local versus cloud AI

- What still works offline? The robot should at least stop safely, dock,

- What sensor data leaves the home? Ask specifically about raw video, raw

- Where are safety decisions made? Obstacle avoidance, fall prevention,

- Is remote operation ever used? If yes, when, by whom, with what consent,

- Can memories and maps be deleted? A companion robot's long-term memory is

- What happens if the vendor cloud disappears? This matters for pre-order
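One way to use the checklist is to track which questions a vendor has actually answered. The sketch below is a minimal note-taking helper; `CHECKLIST`, `open_questions`, and the sample answers are assumptions made for illustration, not a ui44 feature or any vendor's real statements.

```python
# The six checklist questions, used as keys for a buyer's own notes.
CHECKLIST = (
    "What still works offline?",
    "What sensor data leaves the home?",
    "Where are safety decisions made?",
    "Is remote operation ever used?",
    "Can memories and maps be deleted?",
    "What happens if the vendor cloud disappears?",
)


def open_questions(answers):
    """Return the checklist questions that have no recorded answer yet."""
    return [q for q in CHECKLIST if not answers.get(q, "").strip()]


# Hypothetical notes for an imaginary robot; not taken from any real product.
example_answers = {
    "What still works offline?": "FAQ claims docking and obstacle avoidance",
    "Can memories and maps be deleted?": "App setting, not yet verified",
}

remaining = open_questions(example_answers)
```

Here `remaining` holds the four questions still unanswered, which is a reasonable list to send to a vendor before a pre-order.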

When cloud AI is the right tool

None of this means cloud AI is bad. For many home robots, cloud models are the only practical way to offer natural conversation, broad knowledge, translation, image understanding, skill downloads, and long-term product improvement.

Cloud AI is especially reasonable for tasks that are not urgent: summarizing a patrol, helping write a reminder, answering a question, generating a story, searching for a recipe, or suggesting how to organize a schedule. It is also useful for fleet learning, where a manufacturer improves models across many robots after filtering and anonymizing data.

The line is physical consequence. If a model hallucinates a dinner suggestion, that is annoying. If a robot hallucinates that a shiny staircase is a safe path, that is different. Home robots need local guardrails precisely because cloud models can be impressive and still be wrong.

This is why the most credible near-term architecture is not "all local" or "all cloud." It is local-first hybrid AI:

- local perception for rooms, people, pets, obstacles, stairs, and docking;

- local control for motors, balance, braking, grasp release, and speed limits;

- local privacy filters before audio, video, maps, or faces leave the home;

- cloud reasoning for non-urgent language, search, planning, and updates;

- transparent human assistance only when the user understands and consents.
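The "local privacy filters" item in the list above can be sketched as a function that runs on the robot before anything is uploaded. The field names, the `Consent` type, and `privacy_filter` are hypothetical; real products will structure sensor data and consent very differently.

```python
from dataclasses import dataclass

# Fields this sketch treats as private by default (an assumption, following the
# audio, video, maps, and faces named in the list).
SENSITIVE_FIELDS = frozenset({"raw_audio", "raw_video", "map", "faces"})


@dataclass(frozen=True)
class Consent:
    # Sensitive fields the user has explicitly opted in to sharing.
    allowed: frozenset = frozenset()


def privacy_filter(observation, consent):
    """Drop sensitive fields unless the user has opted in to sharing them."""
    return {
        key: value
        for key, value in observation.items()
        if key not in SENSITIVE_FIELDS or key in consent.allowed
    }


# Hypothetical observation bundled with a non-urgent cloud request.
observation = {
    "raw_video": b"<frame bytes>",
    "faces": ["person_1"],
    "request_text": "suggest a recipe",
}

cloud_payload = privacy_filter(observation, Consent())
```

With default consent, `cloud_payload` contains only the request text. The filter runs locally, so the question of what leaves the home is answered before the network is involved at all.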

For future humanoids such as 1X NEO, ui44 tracks a $20,000 pre-order price, Wi-Fi/Bluetooth, cameras, depth sensors, tactile skin, a microphone array, and household-chore ambitions. For Zeroth Robotics M1, ui44 tracks a $2,899 pre-order companion robot with whole-home mapping, visual recognition, obstacle avoidance, posture tracking, multilingual conversation, open programming, VR integration, and reinforcement-learning tool support. These are exactly the kinds of products where buyers should separate AI demos from operating design: what is local, what is cloud, and what is still human-assisted?

The verdict

Home robots do not need every AI capability on-device. They do need enough on-device intelligence to be safe, private, and useful when the network is slow, unavailable, or untrusted.

A good rule of thumb: the more a robot can see, hear, move, remember, or touch, the more you should care about local AI. Cloud intelligence can make a robot more capable. It should not be the only thing standing between a household and a bad physical decision.

If you are comparing robots, start with the product page specs, then use /compare and the /categories pages to separate conversation features from autonomy, sensors, connectivity, and manipulation. For home robots, the best question is no longer "does it have AI?" It is "which parts of the AI stay home?"

Database context

Use this article as a privacy verification workflow

Turn the article into a real verification pass

“Do Home Robots Need On-Device AI?” already points you toward 12 linked robots, 11 manufacturers, and 4 countries inside the ui44 database. That matters because strong buyer guidance is easier to apply when you can move immediately from a claim or warning into concrete product pages, manufacturer directories, component explainers, and country-level context instead of treating the article as an isolated opinion piece. The fastest next step is to turn the article into a shortlist workflow: open the linked robot pages, verify which specs are actually published for those models, then compare the surrounding manufacturer and component context before you decide whether the underlying claim changes your buying plan.

For this topic, the useful discipline is to separate the editorial lesson from the catalog evidence. The article gives you the framing, but the robot pages tell you what each product actually ships with today: sensor stack, connectivity methods, listed price, release timing, category, and support-relevant compatibility notes. The manufacturer pages then show whether you are looking at a one-off launch, a broader lineup pattern, or a company that spans multiple categories. That layered workflow reduces the risk of buying on a single marketing phrase or a single support FAQ.

Use the robot pages to confirm which products actually expose cameras, microphones, Wi-Fi, or voice systems, then use the manufacturer pages to decide how much of the privacy question seems product-specific versus brand-wide. On this route cluster, Astro, X2, and A2 Ultra form the fastest reality check. If you want a quick working shortlist, open Compare Astro, X2, and A2 Ultra next, then keep this article open as the reasoning layer while you compare structured data side by side.

Practical Takeaway

Every robot, manufacturer, category, component, and country reference below resolves to a real ui44 page, keeping the follow-up path grounded in database records rather than generic advice.

Suggested next steps in ui44

- Open Astro and note the listed sensors, connectivity methods, and voice stack before you interpret any policy claim.

- Cross-check the wider brand context on Amazon so you can see whether the privacy question touches one model or a broader lineup.

- Use the linked component pages to confirm how common the relevant sensors and connectivity layers are across the database.

- Keep a short note of which policy layers you checked, which device features are actually present on the robot page, and which items still depend on region- or app-level confirmation.

- Finish with Compare Astro, X2, and A2 Ultra so the policy reading sits next to structured product data.

Robot profiles worth opening next

Use the linked product pages as the evidence layer

The linked robot pages are where this article becomes operational. Instead of asking whether the headline is interesting, use the robot entries to inspect the actual mix of sensors, connectivity options, batteries, pricing, release timing, and stated capabilities attached to the products mentioned in the article. That is the easiest way to see whether the warning or opportunity described here affects one product family, a specific design pattern, or an entire buying lane.

Astro is tracked on ui44 as an active security & patrol robot from Amazon. The database currently records a listed price of $1,599, a release date of 2021, no officially disclosed battery life or charging time, and a published stack that includes a 5MP Bezel Camera, a 1080p Periscope Camera (132° FOV), and Infrared Vision plus Wi-Fi 802.11ac and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Astro combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Autonomous Home Patrol, Visual ID (face recognition), and Remote Home Monitoring with any cloud, app, or voice layers, including Amazon Alexa.

X2 is tracked on ui44 as an available humanoid robot from AGIBOT. The database currently records a listed price of $24,240, a release date of 2025, a battery life of about 2 hours walking at 0.5 m/s, a charging time of about 1.5 hours, and a published stack that includes 3D LiDAR (Ultra), an RGB-D Camera (Ultra), and RGB Cameras plus Wi-Fi and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether X2 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking, 25-30 DOF Articulation, and Object Manipulation (with OmniHand accessory) with any cloud, app, or voice layers.

A2 Ultra is tracked on ui44 as an available humanoid robot from AGIBOT. The database currently records a price that is still to be announced, a release date of 2024, a battery life of 3 hours standing or 1.5+ hours walking, a charging time of 2 hours, and a published stack that includes 3D LiDAR, an RGB-D Camera, and an RGB Camera plus Wi-Fi and 4G/5G.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether A2 Ultra combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking, Autonomous Navigation, and Intelligent Obstacle Avoidance with any cloud, app, or voice layers.

Rovie is tracked on ui44 as a development-stage cleaning robot from Clutterbot. The database currently records a price that is still to be announced, a release date of 2026-01, no officially disclosed battery life or charging time, and a published stack that includes Computer Vision, Built-in sensors for obstacle and stair detection, and Human and pet recognition sensors plus its listed connectivity stack.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Rovie combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Toy and clutter detection, Autonomous floor decluttering, and Scoop-based item pickup with any cloud, app, or voice layers.

Pophie is tracked on ui44 as a development-stage companion robot from InsBotics. The database currently records a listed price of $269, a release date of 2026-01, no officially disclosed battery life or charging time, and a published stack that includes a Wide-angle rotating camera, a Dual microphone array with sound direction detection, and Multi-zone touch sensors plus Wi-Fi.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Pophie combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Proactive interaction without wake word, Face tracking and gaze-following, and Multi-person conversation awareness with any cloud, app, or voice layers.

Manufacturer context behind the article

Check whether this is one product story or a broader company pattern

Manufacturer pages add the privacy context that individual product pages cannot show on their own. They help you check whether cameras, microphones, cloud accounts, app controls, and policy assumptions appear across a broader lineup or stay tied to one specific product story.

Amazon

ui44 currently tracks 1 robot from Amazon across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Astro.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Security & Patrol as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

AGIBOT

ui44 currently tracks 7 robots from AGIBOT across 3 categories. The company is grouped under China, and the current catalog footprint on ui44 includes A2 Ultra, X2, and Expedition A3.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid, Quadruped, and Commercial as the most useful next routes if you want to see whether this article reflects a wider pattern inside the brand.

Clutterbot

ui44 currently tracks 1 robot from Clutterbot across 1 category. The current catalog footprint on ui44 includes Rovie.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Cleaning as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

InsBotics

ui44 currently tracks 1 robot from InsBotics across 1 category. The current catalog footprint on ui44 includes Pophie.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Companions as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Broaden the scan without leaving the database

Categories, components, and countries add the wider context

Category framing

Category pages are useful when the article touches a buying pattern that shows up across brands. A category route helps you confirm whether the linked products sit in a narrow niche or whether the same question should be tested across a larger field of alternatives.

Security & Patrol

The Security & Patrol category page currently groups 3 tracked robots from 3 manufacturers. ui44 describes this lane as: Surveillance and patrol robots that monitor homes, businesses, and perimeters autonomously.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include Astro, Vision 60, and Watchbot 2.

Humanoid

The Humanoid category page currently groups 68 tracked robots from 49 manufacturers. ui44 describes this lane as: Full-size bipedal humanoid robots designed to work alongside humans. From factory floors to household tasks, these machines represent the cutting edge of robotics.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include NEO, EVE, and Mornine M1.

Country and ecosystem context

Country pages give extra context when support practices, launch sequencing, regulatory posture, or manufacturer mix matter. They are not a substitute for model-level verification, but they do help you see which ecosystems cluster together and which manufacturers sit in the same regional field when you broaden the search beyond the article headline.

USA

The USA route currently groups 16 tracked robots from 12 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like Boston Dynamics, Figure AI, and Tesla make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

China

The China route currently groups 49 tracked robots from 14 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like AGIBOT, Roborock, and Unitree Robotics make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

South Korea

The South Korea route currently groups 2 tracked robots from 1 manufacturer in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, Samsung makes the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

Questions to answer before you move from reading to buying

A follow-up FAQ built from the entities already linked in this article

Frequently Asked Questions

Which page should I open first after reading “Do Home Robots Need On-Device AI?”?

Start with Astro. That gives you a concrete product anchor for the article’s main claim. From there, branch into the manufacturer and component pages so you can tell whether the article is describing one specific model, a repeated brand pattern, or a wider technology issue that affects multiple shortlist options.

How do the manufacturer pages change the buying decision?

Manufacturer pages such as Amazon's help you zoom out from one article and one product. On ui44 they show lineup breadth, category spread, and the neighboring robots tied to the same company. That context is useful when you are deciding whether a risk belongs to a single model, whether it shows up across a brand’s portfolio, and whether you should keep looking at alternatives before committing.

When should I switch from reading to side-by-side comparison?

Move into Compare Astro, X2, and A2 Ultra as soon as you understand the article’s main warning or promise. The article explains what to watch for, but the compare view is where you can check whether price, status, battery life, connectivity, sensors, and category fit still make the robot a good match for your own home and budget.

Where to go next in ui44

Keep the research chain inside the database

If you want to keep going, these follow-on pages give you the cleanest expansion path from article to research session. Open the comparison route first if you are deciding between products today. Open the manufacturer, category, and component routes if you still need to understand the broader pattern behind the claim.

Written by

ui44 Team

Published May 4, 2026
