That is not safe enough for home-robot buyers.

AGIBOT’s 2026 Partner Conference pushed this language into the spotlight. The company declared 2026 a deployment year for embodied AI, announced a broad model stack, and its official launch materials describe the D2 Max quadruped as an all-terrain Level 3 autonomous robot. Independent coverage also framed AGIBOT’s roadmap around an L1-L5 capability ladder. The useful takeaway is not that L3 has become an industry standard. It has not. The useful takeaway is that buyers need a better way to translate autonomy claims into evidence.

A robot can be autonomous in one factory station and helpless in your kitchen. A robot can walk beautifully and still need a remote human to recover from a sock on the floor. A robot can use a vision-language-action model and still fail the chore you actually care about.

This guide is a buyer-facing translation layer: what “L3” might mean, what it does not prove, and what evidence to ask for before trusting any humanoid or home robot autonomy level.

The most important rule: autonomy is scoped

Autonomy is not a single property. It is always scoped to a task, place, object set, and failure policy.

“Autonomous navigation” might mean a robot can drive down a mapped hallway. It does not mean it can pick up laundry, open a warped cabinet, decide where the spatula belongs, or safely work around a toddler. “Autonomous manipulation” might mean a robot can load a tablet into a test fixture every 20 seconds. It does not mean it can unload your dishwasher.

For home robots, the scope matters more than the level number. A useful autonomy claim should answer four questions:

- What exact task does the robot complete? A workflow, not a vibe.

- Where was it tested? Lab, factory, showroom, model apartment, or real homes.

- How often did a human intervene? Teleoperation, supervisor approval, or emergency recovery.

- What happens when it fails? Retry, stop, ask for help, damage property, or need a technician.

Without those answers, “L3” is marketing shorthand. With those answers, it can become useful.
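One way to keep these four questions honest is to treat them as required fields. The Python sketch below assumes a hypothetical `AutonomyClaim` record that a buyer fills in from vendor answers. The class and field names are illustrative only; they are not a ui44 or vendor schema. The sketch reports which questions are still open and treats a claim as scoped only when all four have answers.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class AutonomyClaim:
    """A hypothetical record of one marketed autonomy claim.

    None means the vendor has not answered that scoping question yet.
    """
    level_label: str                           # the marketed label, e.g. "L3"
    task: Optional[str] = None                 # What exact task does the robot complete?
    test_setting: Optional[str] = None         # Lab, factory, showroom, model apartment, or real homes
    intervention_policy: Optional[str] = None  # How often did a human intervene, and how?
    failure_behavior: Optional[str] = None     # Retry, stop, ask for help, damage, technician

    def unanswered(self) -> List[str]:
        """Return the scoping questions (field names) that are still open."""
        return [
            f.name
            for f in fields(self)
            if f.name != "level_label" and getattr(self, f.name) is None
        ]

    def is_scoped(self) -> bool:
        """The level label only becomes useful once all four questions are answered."""
        return not self.unanswered()


# Usage with a made-up, partially answered claim.
claim = AutonomyClaim(
    level_label="L3",
    task="load a tablet into a test fixture",
    test_setting="factory",
)
print(claim.unanswered())  # ['intervention_policy', 'failure_behavior']
print(claim.is_scoped())   # False
```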

A practical autonomy ladder for robot buyers

The robotics industry does not yet have one independent, universally accepted SAE-style autonomy ladder for home humanoids. So ui44 uses a simpler buyer framework.

| Buyer-readable level | What it usually means | What to ask for |
|---|---|---|
| Remote control / teleoperation | A person pilots or corrects the robot directly | Operator ratio, latency, privacy controls, who can connect, and when |
| Assisted skill autonomy | The robot can run a known skill after setup | Success rate per skill, number of test attempts, and reset conditions |
| Scenario autonomy | The robot completes a bounded workflow in a known environment | Continuous hours, intervention rate, uptime, and failure recovery |
| General household autonomy | The robot handles varied chores in ordinary homes | Multi-home data, novel-object success, safety incidents, and support cost |

Most impressive 2026 humanoid claims live in the middle of that table. They are not fake. They are also not general home autonomy.

AGIBOT’s Longcheer deployment is a good example. In its own English release, AGIBOT lists a cycle time of roughly 19-20 seconds, throughput of up to 310 units per hour, a success rate above 99%, about 3,000 units per shift, support for 24/7 operation with minimal human intervention, more than 140 hours of cumulative continuous operation, and downtime below 4%. The same release quotes Longcheer as saying the broader path from innovation to mass production took four months, so the 36-hour deployment figure should be read as a line-integration or commissioning metric, not as “from zero to factory deployment in a day and a half.”

Those are exactly the kind of deployment figures buyers should want, but the setting matters. A tablet production line is structured, measured, and repeatable. It is the right place to prove scenario autonomy. It is not proof that the same robot can tidy an apartment, fold towels, or adapt to every family routine.
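The ladder table above can also be read as an evidence checklist: a rung is supported only when everything in its “What to ask for” column has been published. The sketch below is a hypothetical encoding of that column (the snake_case evidence keys are made up for illustration), not an industry standard. It checks each rung independently, so strong evidence at one rung never implies a higher one.

```python
from typing import Dict, List, Optional, Set

# Evidence the ladder table asks for at each rung, in table order.
# The snake_case keys are hypothetical labels for the "What to ask for" column.
LADDER: Dict[str, Set[str]] = {
    "Remote control / teleoperation": {
        "operator_ratio", "latency", "privacy_controls", "access_policy",
    },
    "Assisted skill autonomy": {
        "success_rate_per_skill", "test_attempts", "reset_conditions",
    },
    "Scenario autonomy": {
        "continuous_hours", "intervention_rate", "uptime", "failure_recovery",
    },
    "General household autonomy": {
        "multi_home_data", "novel_object_success", "safety_incidents", "support_cost",
    },
}


def evidenced_rungs(published: Set[str]) -> List[str]:
    """Rungs whose full evidence list appears in what the vendor published."""
    return [rung for rung, needed in LADDER.items() if needed <= published]


def highest_evidenced_rung(published: Set[str]) -> Optional[str]:
    """The highest rung (by table order) with complete evidence, or None."""
    rungs = evidenced_rungs(published)
    return rungs[-1] if rungs else None


def missing_evidence(rung: str, published: Set[str]) -> Set[str]:
    """What a buyer still needs to ask for before accepting a claim at `rung`."""
    return LADDER[rung] - published


# A hypothetical factory vendor that publishes scenario metrics only.
factory_vendor = {"continuous_hours", "intervention_rate", "uptime", "failure_recovery"}
print(highest_evidenced_rung(factory_vendor))  # Scenario autonomy
print(sorted(missing_evidence("General household autonomy", factory_vendor)))
# ['multi_home_data', 'novel_object_success', 'safety_incidents', 'support_cost']
```

Run against a factory-style evidence set, it reports scenario autonomy and leaves every household question open, which mirrors the tablet-line point above: strong factory metrics, no household evidence.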

AGIBOT shows why “deployment-ready” is not the same as “home-ready”

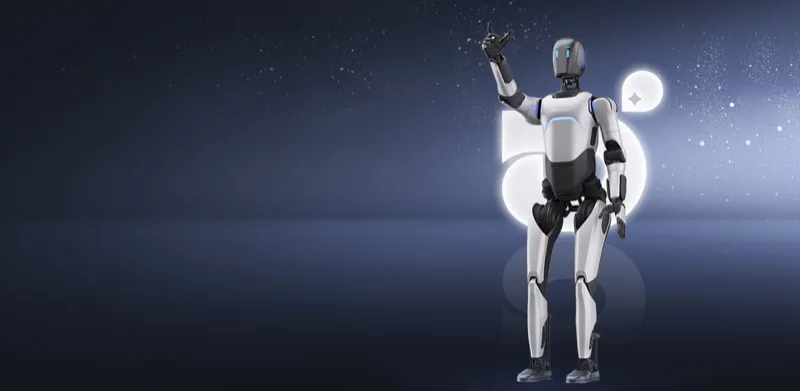

AGIBOT is one of the stronger companies to study because its claims are tied to actual deployment language, not only stage demos. In the ui44 database, AGIBOT A2 Ultra is an available enterprise humanoid: 169 cm, 69 kg, a walking runtime of 1.5+ hours, a standing runtime of around 3 hours, 3D LiDAR, RGB-D and RGB cameras, a fisheye camera, an array microphone, NVIDIA Jetson Orin 64G compute plus a 16-core CPU, 6-DOF hands, and CR / CE-MD / CE-RED / FCC certifications.

That is a serious platform. It is also a platform whose database-supported use cases are commercial: navigation, obstacle avoidance, exhibition guidance, swarm-control displays, and dexterous manipulation. The specs help a buyer judge hardware maturity. They do not automatically prove household independence.

AGIBOT’s newer fleet makes the distinction even clearer. AGIBOT G2 is a wheeled humanoid for manufacturing, logistics, and guided-service work. Its ui44 entry tracks force-controlled arms, 360-degree surround perception, collision detection, autonomous navigation, autonomous charging, and 24/7 operation through dual hot-swappable batteries. G2 Air is smaller and more explicit about human-in-the-loop operation: a 7-DOF arm, a 3 kg payload, 750-800 mm of reach, a width under 800 mm, a speed of at least 1.5 m/s, zero-radius turning, and real-time data capture during task execution.

That last phrase is important. “Human-in-the-loop” is not a weakness if the company says it plainly. It tells buyers the robot may be valuable today because it can perform work while also collecting the data needed to become more autonomous later.

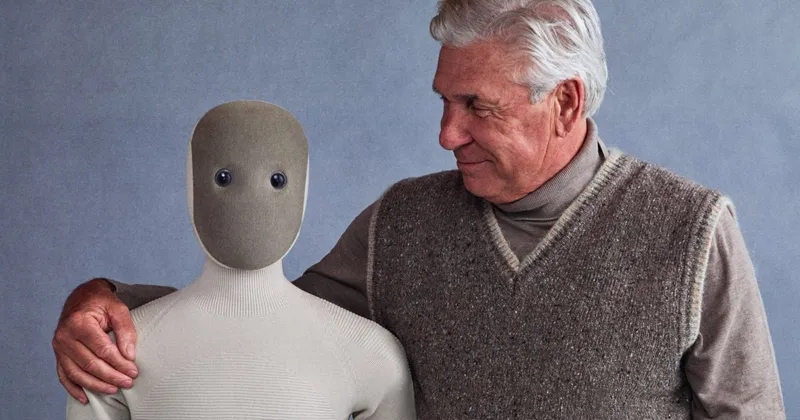

1X NEO is the home test case: autonomy plus remote help

1X NEO is closer to the home buyer’s actual question. It is a $20,000 preorder humanoid, 167 cm tall, 30 kg, with roughly 4 hours of battery life, RGB/depth/tactile/microphone sensing, a soft body, and the 1X app. The company’s order page also makes the autonomy boundary visible: NEO arrives with basic autonomy for early owners, learns and repeats tasks through Redwood AI, and uses scheduled “Expert Mode” for complex tasks the robot does not yet know.

That is a better disclosure than pretending the robot is a finished autonomous housekeeper. It tells a buyer that the product experience may blend three modes:

- the robot doing basic tasks by itself;

- the owner scheduling or guiding chores through the app or voice;

- a remote 1X expert supervising complex actions at scheduled times.

For some buyers, that hybrid model may be acceptable. For others, it is the main reason to wait. The difference depends on privacy expectations, support cost, and how much of the robot’s value comes from a human operator behind the scenes.

The key question is not “Is NEO L3?” The better question is: which NEO chores run without a human, in what kind of home, with what success rate, and how much remote help per week?

Unitree G1 proves price is not autonomy

Unitree G1 is one of the most visible affordable humanoids because it starts at $13,500 and is actually available, not only teased. The ui44 database lists a compact 132 cm, 35 kg robot with about 2 hours of battery life, a depth camera, 3D LiDAR, four-microphone array, Wi-Fi 6, Bluetooth 5.2, optional NVIDIA Jetson Orin compute for the EDU version, and optional Dex3-1 three-fingered hands.

That is remarkable hardware for the price. It is still best read as a research and development platform. Unitree’s own public spec page warns that the humanoid robot industry is in the early stages and that individual users should understand limitations before purchase.

This is where buyers often get fooled. A low price plus a humanoid body does not equal autonomy. A G1 may be a great platform for developers, universities, and advanced hobbyists. It should not be compared to a finished appliance. If the claim is household capability, ask for household evidence, not just walking, balancing, dancing, or SDK support.

The least hyped robots often make the autonomy boundary clearest

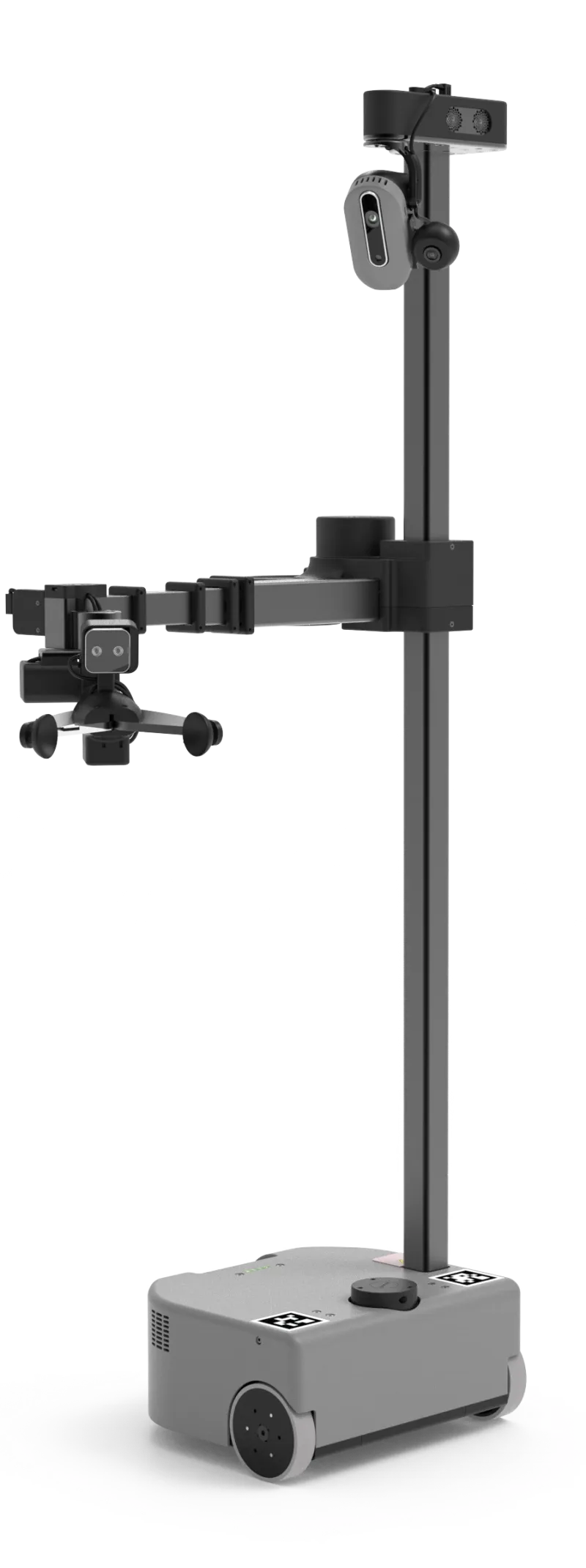

Hello Robot’s Stretch 3 is not a humanoid and does not look like the future from a movie trailer. That is partly why it is useful for buyers. It is a $24,950 mobile manipulator, 141 cm tall, 24.5 kg, with a compact 33 × 34 cm footprint, 2-5 hour battery life, head and gripper RGB-D cameras, a navigation laser, microphone array, ROS 2, Python SDK, and web/gamepad/dexterous teleoperation options.

Stretch is honest about being a platform for assistive care, research, and mobile manipulation. It can navigate, reach, grasp, and be teleoperated. It is not sold as a magic general-purpose servant.

That kind of clarity is valuable. A narrowly scoped robot with a published SDK, known payload, known sensors, and clear teleoperation path can be easier to trust than a more exciting humanoid with vague autonomy language.

VLA models are progress, not a guarantee

Vision-language-action models are one reason autonomy claims are getting more ambitious. Figure’s Helix announcement, for example, describes an onboard VLA system that connects perception, language, and continuous upper-body control. The company says Helix uses a slower vision-language component for scene and command understanding and a faster visuomotor policy for 200 Hz action output. It also says the system was trained on roughly 500 hours of teleoperated behavior and can generalize to many small household objects.

That is meaningful progress. It is also not the same as an autonomy level for a consumer product. A model architecture tells you how the robot may learn and control its body. A buyer still needs the operational evidence: task list, success rate, deployment hours, intervention rate, safety behavior, and support model.

This distinction matters because AI language can outrun product reality. “VLA,” “embodied foundation model,” “world model,” and “generalist control” are useful technical concepts. They are not substitutes for “the robot did this chore 500 times in 50 real homes with fewer than X interventions.”

What should buyers ask before trusting an autonomy level?

Before treating any L2, L3, or “fully autonomous” robot claim as buyer-relevant, ask these five evidence questions.

1. What is the exact task boundary?

“Laundry” is not a task. Sorting whites, picking a towel from the floor, loading a washer, adding detergent, moving wet clothes, folding shirts, and putting them away are different tasks. Ask which subtask the robot performs autonomously.
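A buyer can make the task boundary concrete by listing the subtasks and recording the vendor's answer for each. This is a minimal sketch under stated assumptions: the mode labels and the sample answers are hypothetical, and any subtask the vendor does not address is counted as “unknown” rather than assumed to work.

```python
from typing import Dict, List

# The laundry subtasks named above, in order.
LAUNDRY_SUBTASKS = (
    "sort whites",
    "pick a towel from the floor",
    "load a washer",
    "add detergent",
    "move wet clothes",
    "fold shirts",
    "put clothes away",
)

# Hypothetical answer categories a buyer might record for each subtask.
MODES = {"autonomous", "remote_assisted", "owner_assisted", "not_supported"}


def group_by_mode(answers: Dict[str, str]) -> Dict[str, List[str]]:
    """Group every subtask by the vendor's stated mode; silence counts as 'unknown'."""
    grouped: Dict[str, List[str]] = {}
    for subtask in LAUNDRY_SUBTASKS:
        mode = answers.get(subtask, "unknown")
        if mode != "unknown" and mode not in MODES:
            raise ValueError(f"unexpected mode {mode!r} for {subtask!r}")
        grouped.setdefault(mode, []).append(subtask)
    return grouped


# Made-up vendor answers: only three subtasks are addressed at all.
answers = {
    "pick a towel from the floor": "autonomous",
    "load a washer": "remote_assisted",
    "fold shirts": "autonomous",
}
grouped = group_by_mode(answers)
print(grouped["autonomous"])    # ['pick a towel from the floor', 'fold shirts']
print(len(grouped["unknown"]))  # 4
```

In this made-up case, a “laundry” claim covers two of seven subtasks autonomously, and four are simply unanswered.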

2. What is the environment boundary?

A factory station, hotel corridor, demo apartment, and lived-in family home are not comparable. For home robots, ask whether the data comes from real homes with clutter, pets, variable lighting, stairs, narrow spaces, and objects that were not staged for the demo.

3. What is the intervention rate?

This is the most important missing number. A robot that succeeds 99% of the time with a human watching every second is different from a robot that runs for hours with one remote operator supervising 20 units. Ask for interventions per hour, per task, or per completed workflow.
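The same intervention count reads very differently depending on the denominator, and the supervision ratio is a separate cost on top of it. The sketch below uses a hypothetical `TrialSummary` with made-up numbers to compute interventions per hour, per task, and per completed workflow, plus operator hours per robot hour. The field names are assumptions for illustration, not a vendor reporting format.

```python
from dataclasses import dataclass


def _rate(count: float, denominator: float) -> float:
    """Divide, refusing a zero denominator (no basis for the rate at all)."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return count / denominator


@dataclass
class TrialSummary:
    """Hypothetical summary of one robot's test period, as a vendor might report it."""
    operating_hours: float
    tasks_attempted: int
    workflows_completed: int
    interventions: int          # teleop takeovers, resets, approvals: any human touch
    robots_per_operator: float  # 1.0 means a human watches this robot full time

    def interventions_per_hour(self) -> float:
        return _rate(self.interventions, self.operating_hours)

    def interventions_per_task(self) -> float:
        return _rate(self.interventions, self.tasks_attempted)

    def interventions_per_workflow(self) -> float:
        return _rate(self.interventions, self.workflows_completed)

    def operator_hours_per_robot_hour(self) -> float:
        # Supervision cost that a success rate alone does not show.
        return _rate(1.0, self.robots_per_operator)


# Made-up numbers, only to show the arithmetic.
trial = TrialSummary(operating_hours=40, tasks_attempted=200,
                     workflows_completed=50, interventions=10,
                     robots_per_operator=20)
print(trial.interventions_per_hour())         # 0.25
print(trial.interventions_per_task())         # 0.05
print(trial.interventions_per_workflow())     # 0.2
print(trial.operator_hours_per_robot_hour())  # 0.05
```

With `robots_per_operator=1`, the last figure becomes 1.0: a human watching every second, whatever the success rate says.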

4. What counts as a failure?

Does the company count a task as successful if the robot drops an object and a human resets it? Does a remote operator correction count as success? Are aborted attempts included? Strong claims define the denominator.
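To see why the denominator matters, the sketch below scores one made-up log of 100 attempts under two counting rules. The outcome labels are hypothetical. The point is that the counting rule has to be stated alongside the number.

```python
from collections import Counter
from enum import Enum
from typing import Iterable


class Outcome(Enum):
    """Hypothetical outcome labels for one task attempt."""
    UNASSISTED_SUCCESS = "unassisted_success"
    SUCCESS_AFTER_HUMAN_RESET = "success_after_human_reset"
    SUCCESS_AFTER_REMOTE_CORRECTION = "success_after_remote_correction"
    FAILED = "failed"
    ABORTED = "aborted"


ASSISTED = (Outcome.SUCCESS_AFTER_HUMAN_RESET, Outcome.SUCCESS_AFTER_REMOTE_CORRECTION)


def success_rate(outcomes: Iterable[Outcome], *,
                 count_assisted_as_success: bool,
                 include_aborted: bool) -> float:
    """Success rate under an explicit counting rule, so the denominator is visible."""
    counts = Counter(outcomes)
    successes = counts[Outcome.UNASSISTED_SUCCESS]
    if count_assisted_as_success:
        successes += sum(counts[o] for o in ASSISTED)
    attempts = sum(counts.values())
    if not include_aborted:
        attempts -= counts[Outcome.ABORTED]
    if attempts == 0:
        raise ValueError("no attempts left in the denominator")
    return successes / attempts


# A made-up log of 100 attempts.
log = ([Outcome.UNASSISTED_SUCCESS] * 80
       + [Outcome.SUCCESS_AFTER_HUMAN_RESET] * 8
       + [Outcome.SUCCESS_AFTER_REMOTE_CORRECTION] * 5
       + [Outcome.FAILED] * 3
       + [Outcome.ABORTED] * 4)

lenient = success_rate(log, count_assisted_as_success=True, include_aborted=False)
strict = success_rate(log, count_assisted_as_success=False, include_aborted=True)
print(f"{lenient:.1%}")  # 96.9%
print(f"{strict:.1%}")   # 80.0%
```

Same robot, same log: about 97% under the lenient rule and 80% under the strict one.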

5. What is the privacy and safety model?

Home autonomy is not just robotics performance. It is cameras, microphones, remote access, logs, maps, and liability. If remote experts can connect, ask who they are, when they can connect, what the owner sees, what data is stored, and how emergency stop / safe-state behavior works.

A quick buyer scorecard from the ui44 database

| Robot | What the database supports | Buyer read on autonomy |
|---|---|---|
| AGIBOT A2 Ultra | Available enterprise humanoid; 169 cm, 69 kg, 3D LiDAR/RGB-D/fisheye sensing, Jetson Orin 64G + 16-core CPU, 6-DOF hands, major certifications | Serious commercial platform; do not assume home autonomy from enterprise specs |
| AGIBOT G2 | Wheeled industrial humanoid with force-controlled arms, 360° perception, autonomous charging, hot-swappable batteries | Stronger scenario-autonomy story for factories/logistics than for homes |
| AGIBOT G2 Air | Compact single-arm manipulator; 7-DOF arm, 3 kg payload, 750-800 mm reach, human-in-the-loop data capture | Explicitly transitional: useful assisted operation now, autonomy later |
| 1X NEO | $20,000 home preorder; 167 cm, 30 kg, ~4h battery, soft body, app, household focus | Most home-relevant, but early owners should expect a hybrid of autonomy and scheduled expert help |
| Unitree G1 | $13,500 available humanoid; 132 cm, 35 kg, ~2h battery, LiDAR/depth camera, optional hands | Affordable developer platform; price should not be mistaken for chore autonomy |
| Hello Robot Stretch 3 | $24,950 mobile manipulator; ROS 2/Python, compact footprint, RGB-D cameras, LiDAR, teleoperation | Clearer scoped capability than many humanoids; not a turnkey servant |

What an honest “L3 home robot” claim would look like

An honest home-robot autonomy claim would be boringly specific. For example:

> In 30 ordinary apartments, the robot cleared dinner plates from a table to a dishwasher-safe staging area for 21 days. It completed 93% of attempts without remote control, averaged 2.4 human interventions per week, never entered bedrooms unless allowed, and stopped safely on 100% of pet/human proximity tests.

That is the kind of claim a buyer can evaluate. It includes the task, the homes, the duration, the intervention rate, the privacy boundary, and the safety result.
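The illustrative claim above can be restated as data and checked against the six elements listed here. This is a sketch: the keys are hypothetical, and the values only restate the article's example; they are not a real deployment result.

```python
from typing import Dict, List

# The six elements an honest claim should include, per the paragraph above.
REQUIRED_ELEMENTS = (
    "task", "homes", "duration", "intervention_rate", "privacy_boundary", "safety_result",
)

# The illustrative claim, restated as data. It is an example, not a real result.
example_claim: Dict[str, str] = {
    "task": "clear dinner plates from a table to a dishwasher-safe staging area",
    "homes": "30 ordinary apartments",
    "duration": "21 days",
    "completion": "93% of attempts without remote control",
    "intervention_rate": "2.4 human interventions per week",
    "privacy_boundary": "never entered bedrooms unless allowed",
    "safety_result": "stopped safely on 100% of pet/human proximity tests",
}

# A typical marketing claim, for contrast (also made up).
vague_claim: Dict[str, str] = {"level": "L3", "task": "household chores"}


def missing_elements(claim: Dict[str, str]) -> List[str]:
    """Required elements that are absent or empty in a claim."""
    return [element for element in REQUIRED_ELEMENTS if not claim.get(element)]


print(missing_elements(example_claim))  # []
print(missing_elements(vague_claim))
# ['homes', 'duration', 'intervention_rate', 'privacy_boundary', 'safety_result']
```

Presence is only a first filter: the vague claim passes the `task` check with “household chores”, which the task-boundary question earlier in this guide would still reject.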

Until companies publish evidence like that, treat “L3” as a starting point for questions rather than a buying guarantee.

Bottom line

L3 humanoid autonomy should not mean “the robot is basically independent.” For 2026 buyers, the safest translation is narrower: the robot may handle a bounded workflow with meaningful autonomy inside a tested environment, while still needing human supervision, setup, or recovery outside that scope.

That is still progress. AGIBOT’s official factory metrics, 1X’s home-focused hybrid model, Unitree’s cheaper research hardware, Hello Robot’s scoped manipulation platform, and Figure’s VLA work all point toward more capable home robots. But the category is not ready for blind trust.

If you are comparing robots, start with the ui44 database facts: price, status, sensors, runtime, payload, software stack, and supported use cases. Then ask the autonomy questions. A real home robot is not the one with the highest claimed level. It is the one whose evidence matches the job you actually want done.

Database context

Use this article as a privacy verification workflow

Turn the article into a real verification pass

L3 Humanoid Autonomy: Buyer’s Guide already points you toward 6 linked robots, 4 manufacturers, and 3 countries inside the ui44 database. That matters because strong buyer guidance is easier to apply when you can move immediately from a claim or warning into concrete product pages, manufacturer directories, component explainers, and country-level context instead of treating the article as an isolated opinion piece. The fastest next step is to turn the article into a shortlist workflow: open the linked robot pages, verify which specs are actually published for those models, then compare the surrounding manufacturer and component context before you decide whether the underlying claim changes your buying plan.

For this topic, the useful discipline is to separate the editorial lesson from the catalog evidence. The article gives you the framing, but the robot pages tell you what each product actually ships with today: sensor stack, connectivity methods, listed price, release timing, category, and support-relevant compatibility notes. The manufacturer pages then show whether you are looking at a one-off launch, a broader lineup pattern, or a company that spans multiple categories. That layered workflow reduces the risk of buying on a single marketing phrase or a single support FAQ.

Use the robot pages to confirm which products actually expose cameras, microphones, Wi-Fi, or voice systems, then use the manufacturer pages to decide how much of the privacy question seems product-specific versus brand-wide. On this route cluster, A2 Ultra, G2, and G2 Air form the fastest reality check. If you want a quick working shortlist, open Compare A2 Ultra, G2, and G2 Air next, then keep this article open as the reasoning layer while you compare structured data side by side.

Practical Takeaway

Every robot, manufacturer, category, component, and country reference below resolves to a real ui44 page, keeping the follow-up path grounded in database records rather than generic advice.

Suggested next steps in ui44

- Open A2 Ultra and note the listed sensors, connectivity methods, and voice stack before you interpret any policy claim.

- Cross-check the wider brand context on AGIBOT so you can see whether the privacy question touches one model or a broader lineup.

- Use the linked component pages to confirm how common the relevant sensors and connectivity layers are across the database.

- Keep a short note of which policy layers you checked, which device features are actually present on the robot page, and which items still depend on region- or app-level confirmation.

- Finish with Compare A2 Ultra, G2, and G2 Air so the policy reading sits next to structured product data.


Robot profiles worth opening next

Use the linked product pages as the evidence layer

The linked robot pages are where this article becomes operational. Instead of asking whether the headline is interesting, use the robot entries to inspect the actual mix of sensors, connectivity options, batteries, pricing, release timing, and stated capabilities attached to the products mentioned in the article. That is the easiest way to see whether the warning or opportunity described here affects one product family, a specific design pattern, or an entire buying lane.

A2 Ultra is tracked on ui44 as an available humanoid robot from AGIBOT. The database currently records the price as TBA, a release date of 2024, a battery life of about 3 hours standing and 1.5+ hours walking, a 2-hour charging time, and a published stack that includes 3D LiDAR, an RGB-D camera, and an RGB camera, plus Wi-Fi and 4G/5G connectivity.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether A2 Ultra combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking, Autonomous Navigation, and Intelligent Obstacle Avoidance with any cloud, app, or voice layers.

G2 is tracked on ui44 as an active humanoid robot from AGIBOT. The database currently records the price as TBA, a release date of 2025-10, 24/7 operation via dual hot-swappable batteries, support for autonomous charging, and a published stack that includes a multimodal spatial perception system, 360° surround-view sensing, and collision detection sensors, plus its listed connectivity stack.

For privacy-focused reading, check G2 the same way: compare listed capabilities such as Omnidirectional wheeled mobility, Force-controlled dual-arm manipulation, and Submillimeter-precision task execution with any cloud, app, or voice layers.

G2 Air is tracked on ui44 as a commercial robot in development from AGIBOT. The database currently records the price as TBA and a release date of 2026-04; its battery life, charging time, sensors, and connectivity are not officially disclosed.

For privacy-focused reading, check G2 Air the same way: compare listed capabilities such as Single-Arm Mobile Manipulation, 7-DOF Arm, and Human-in-the-Loop Operation with any cloud, app, or voice layers.

NEO is tracked on ui44 as a pre-order humanoid robot from 1X Technologies. The database currently records a listed price of $20,000, a release date of 2025-10-28, about 4 hours of battery life, an undisclosed charging time, and a published stack that includes RGB cameras, depth sensors, and tactile skin, plus Wi-Fi and Bluetooth.

For privacy-focused reading, this check matters most for NEO, the most home-relevant robot in this set: verify whether its sensors and connectivity could change the in-home data footprint, and compare listed capabilities such as Household Chores, Tidying Up, and Safe Human Interaction with any cloud, app, or voice layers.

G1 is tracked on ui44 as an available humanoid robot from Unitree. The database currently records a listed price of $13,500, a release date of 2024, about 2 hours of battery life, an undisclosed charging time, and a published stack that includes a depth camera, 3D LiDAR, and a four-microphone array, plus Wi-Fi 6 and Bluetooth 5.2.

For privacy-focused reading, check G1 the same way: compare listed capabilities such as Bipedal Walking, Object Manipulation, and Dexterous Hands (optional Dex3-1) with any cloud, app, or voice layers.


Manufacturer context behind the article

Check whether this is one product story or a broader company pattern

Manufacturer pages add the privacy context that individual product pages cannot show on their own. They help you check whether cameras, microphones, cloud accounts, app controls, and policy assumptions appear across a broader lineup or stay tied to one specific product story.

AGIBOT

ui44 currently tracks 7 robots from AGIBOT across 3 categories. The company is grouped under China, and its current ui44 catalog footprint includes A2 Ultra, X2, and Expedition A3.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company with several relevant products, and therefore more than one place where the same policy or app assumptions might matter. The category mix here (Humanoid, Quadruped, and Commercial) points to the most useful next routes if you want to see whether this article reflects a wider pattern inside the brand.

1X Technologies

ui44 currently tracks 2 robots from 1X Technologies across 1 category. The company is grouped under Norway, and its current ui44 catalog footprint includes NEO and EVE.

Here the category mix is Humanoid only, so the Humanoid route is the most useful next step if you want to see whether this article reflects a wider pattern inside the brand.

Unitree

ui44 currently tracks 2 robots from Unitree across 1 category. The company is grouped under China, and its current ui44 catalog footprint includes H1 and G1.

Here too the category mix is Humanoid only, so the Humanoid route is the most useful next step if you want to see whether this article reflects a wider pattern inside the brand.

Hello Robot

ui44 currently tracks 1 robot from Hello Robot across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Stretch 3.

Here the category mix is Home Assistants only, so that route is the most useful next step if you want to see whether this article reflects a wider pattern inside the brand.


Broaden the scan without leaving the database

Categories, components, and countries add the wider context

Category framing

Category pages are useful when the article touches a buying pattern that shows up across brands. A category route helps you confirm whether the linked products sit in a narrow niche or whether the same question should be tested across a larger field of alternatives.

Humanoid

The Humanoid category page currently groups 73 tracked robots from 52 manufacturers. ui44 describes this lane as: Full-size bipedal humanoid robots designed to work alongside humans. From factory floors to household tasks, these machines represent the cutting edge of robotics.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include NEO, EVE, and Mornine M1.

Commercial

The Commercial category page currently groups 26 tracked robots from 21 manufacturers. ui44 describes this lane as: Delivery robots, warehouse automation, hospitality service bots, and other robots built for business operations.

Use it the same way to test whether the linked products are typical for the lane. Useful starting examples currently include G2 Air, aeo, and Pepper.

Country and ecosystem context

Country pages give extra context when support practices, launch sequencing, regulatory posture, or manufacturer mix matter. They are not a substitute for model-level verification, but they do help you see which ecosystems cluster together and which manufacturers sit in the same regional field when you broaden the search beyond the article headline.

China

The China route currently groups 51 tracked robots from 15 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers such as AGIBOT, Unitree Robotics, and Roborock make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

Norway

The Norway route currently groups 2 tracked robots from 1 manufacturer in ui44: 1X Technologies. It offers the same regional lens as the China route, but with a single manufacturer it adds few adjacent alternatives.

USA

The USA route currently groups 17 tracked robots from 12 manufacturers in ui44, including Boston Dynamics, Figure AI, and Richtech Robotics. It is the natural place to broaden the scan around Hello Robot without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.


Questions to answer before you move from reading to buying

A follow-up FAQ built from the entities already linked in this article

Frequently Asked Questions

Which page should I open first after reading “L3 Humanoid Autonomy: Buyer’s Guide”?

Start with A2 Ultra. That gives you a concrete product anchor for the article’s main claim. From there, branch into the manufacturer and component pages so you can tell whether the article is describing one specific model, a repeated brand pattern, or a wider technology issue that affects multiple shortlist options.

How do the manufacturer pages change the buying decision?

Manufacturer pages such as AGIBOT help you zoom out from one article and one product. On ui44, they show lineup breadth, category spread, and the neighboring robots tied to the same company. That context is useful when you are deciding whether a risk belongs to a single model or shows up across a brand’s portfolio, and whether you should keep looking at alternatives before committing.

When should I switch from reading to side-by-side comparison?

Move into Compare A2 Ultra, G2, and G2 Air as soon as you understand the article’s main warning or promise. The article explains what to watch for, but the compare view is where you can check whether price, status, battery life, connectivity, sensors, and category fit still make the robot a good match for your own home and budget.


Where to go next in ui44

Keep the research chain inside the database

If you want to keep going, these follow-on pages give you the cleanest expansion path from article to research session. Open the comparison route first if you are deciding between products today. Open the manufacturer, category, and component routes if you still need to understand the broader pattern behind the claim.

Written by

ui44 Team

Published May 7, 2026
