That is the strange design problem behind companion robots, social humanoids, and the next wave of home assistants. People naturally look for eyes, mouths, attention, and emotion. A robot that gives you enough social signals can feel approachable. A robot that gives you almost-human signals but misses the timing, movement, or emotional detail can land in the uncanny valley: close enough to human to raise expectations, not good enough to satisfy them.

For buyers, the question is not whether a robot has a face. The better question is: what job is that face doing? Is it helping you understand the robot's attention and intent, or is it cosmetic theater that makes failures feel more personal?

The short answer: home robots do not need human faces. They need readable signals. Sometimes that means a friendly screen. Sometimes it means pet-like eyes. Sometimes it means no face at all.

Do home robots need human faces?

No. A human-looking face is useful only when the robot's behavior can support the promise the face makes.

A face creates a social contract. If a robot looks at you, smiles, nods, and uses human expressions, you expect it to understand more than a normal appliance. You may expect it to remember context, notice discomfort, pause when confused, and respond with emotional timing. If the robot then misses a command, stares blankly, or moves with awkward delay, the disappointment is stronger because the interface implied a human-like partner.

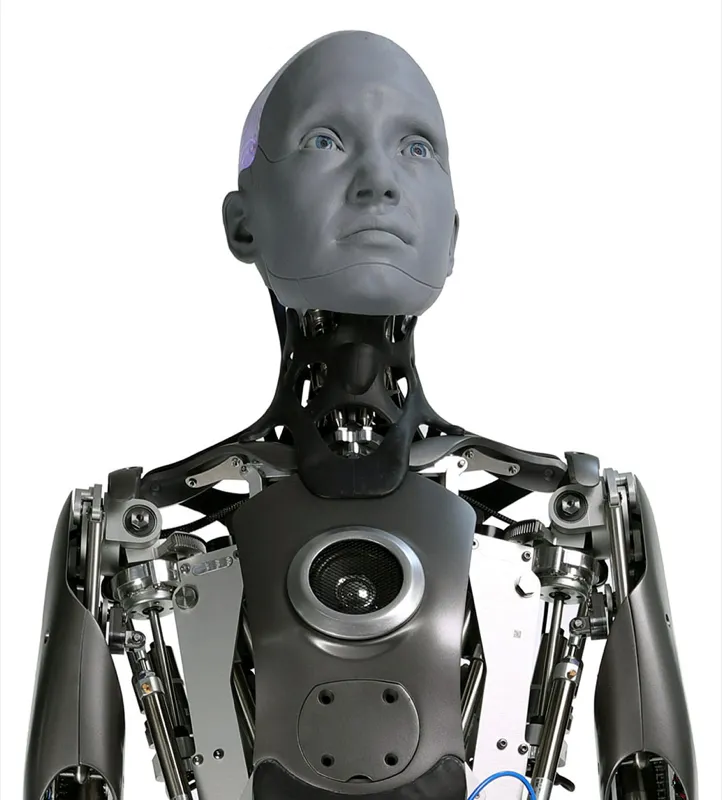

That is why a highly expressive platform like Ameca is fascinating but not a normal home-buying template. ui44 tracks Ameca as a research and public-engagement humanoid: 187 cm tall, 62 kg, stationary in its current upper-body configuration, and built around lifelike facial expressions, automated gaze, face tracking, microphones, cameras, and the Tritium AI stack. It is designed to study and demonstrate human-robot interaction. It is not a $500 kitchen helper.

At home, restraint often works better. A simple set of eyes can show wake state, attention, apology, or confusion without pretending to be a person. A screen can say "I heard you" or "I need help" more clearly than a rubber smile. A pet-like body can invite affection without making users compare it to a human caregiver.

The uncanny valley problem in plain English

The uncanny valley is the dip in comfort that happens when something looks almost human but not quite right. The idea goes back to Masahiro Mori's 1970 essay, but the buyer-relevant version is simple: the more human a robot looks, the more human its timing and behavior must feel.

Recent robotics commentary keeps returning to the same causes. People are sensitive to tiny mismatches in faces, gaze, voice, and motion. A robot can look polite in a still photo and feel unsettling when its eyes lag, its face holds an expression too long, or its body moves with the wrong rhythm.

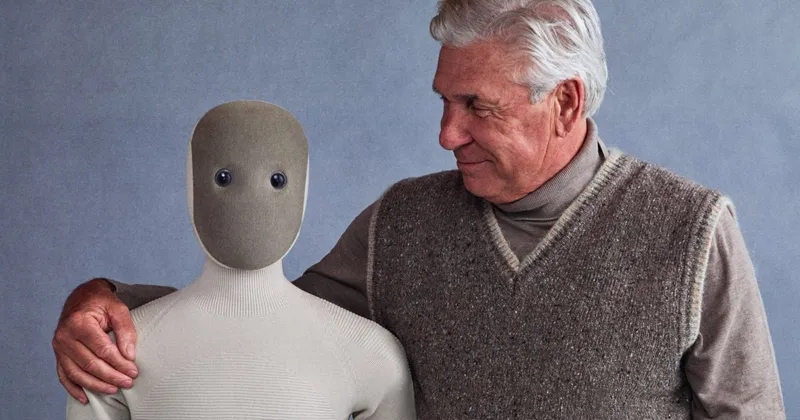

This matters because home robots are intimate. A warehouse robot can look weird and still be useful if it moves boxes safely. A companion robot sits in a living room, talks to a child, checks on an older adult, or follows someone down a hallway. The face is not decoration there; it changes how people interpret the whole machine.

A good rule: if the robot cannot understand a social situation, it should not look like it fully understands one.

Four face strategies in real home robots

The home-robot market already shows four different answers.

1. Realistic humanoid faces: powerful, risky, expensive

Realistic faces are best for research, reception, entertainment, therapy studies, and controlled deployments where human-robot interaction is the main point. They are not automatically best for chores.

Ameca is the clearest example in the ui44 database. It has a deliberately human-like head, expressive eyes and mouth, face tracking, and natural conversation capabilities. That makes it memorable. It also means every small mismatch is visible. If a robot looks this social, people expect social competence.

Pepper took a softer version of the humanoid-face path. It is 120 cm tall, weighs 29.6 kg, uses a wheeled omnidirectional base, has a 10.1-inch touch display on its chest, and was built for emotion recognition, conversation, retail, reception, education, and elder-care assistance. Pepper became one of the best-known social robots, with roughly 27,000 units manufactured before production was paused in 2021. That history is a useful caution: a charming humanoid interface can open doors, but long-term usefulness still depends on software, deployment economics, support, and task fit.

Human faces work when the robot is primarily there for interaction. They are a liability when they imply emotional understanding the system cannot deliver.

2. Screen faces: clear, flexible, less creepy

Screen faces are a practical compromise. They can show eyes, expressions, messages, video calls, menus, and status. They can also stop pretending when text is clearer than emotion.

Amazon Astro is a screen-faced home robot rather than a humanoid. ui44 tracks it at $1,599.99 by invitation, 44 cm tall, 9.35 kg, with a 10.1-inch screen, Alexa, Visual ID, home patrol, Ring integration, and a 1080p periscope camera. The official Astro page emphasizes home monitoring, following, alerts, and privacy controls such as turning off microphones, cameras, and motion with one button. Its face-like interface supports attention and communication, but the product promise is still anchored in monitoring and Alexa mobility, not human resemblance.

Miko 3 uses a screen face for a different audience: children. It is a 22 cm, 0.9 kg companion robot with a 4.46-inch display, wide-angle HD camera, dual microphones, time-of-flight sensing, face and voice recognition, educational games, stories, and parental controls. The official Miko materials stress kid-safe AI, COPPA compliance, camera/mic controls, and moderated conversations. In that context, expressive graphics are safer than a realistic child-like face because they create warmth without pretending to be a peer.

Misty II and QTrobot also show why screen faces work for education and research. Misty II uses customizable eyes, voice, and motion on an open robotics platform. QTrobot uses facial-expression-based interaction for research and teaching, including autism and social-emotional intervention contexts. A screen can be expressive, testable, and adjustable in a way a fixed humanoid face is not.

3. Pet-like faces: affection without personhood

Pet-like robots sidestep the human-face trap. They invite care, touch, and routine, but they do not ask the user to believe the robot is a miniature person.

LOVOT is one of the strongest examples. GROOVE X describes its goal plainly: create a robot that makes people happy, not a convenience machine. ui44 tracks the current LOVOT 3.0 at ¥577,500 in Japan, with a required monthly care plan from ¥9,900. It is 43 cm tall, weighs 4.6 kg, runs for 30-45 minutes before returning to its nest, and uses over 50 sensors, touch response, person-recognition behavior, thermal person detection, room mapping, and OLED eyes.

That design says "care for me" more than "I am a human assistant." The eyes are large and readable, not realistic. The body is small and soft-looking. The value comes from emotional ritual: greeting, being held, responding to touch, and developing a recognizable personality over time.

Sony aibo takes the same idea through a dog form. The current ERS-1000 is $2,899.99 in the US with a subscription plan, 29.3 cm tall, 2.2 kg, and about two hours of battery life. It has OLED eyes, 22 axes of movement, face recognition for up to 100 faces, over 100 voice commands, autonomous charging, touch sensors, cameras, microphones, ToF sensors, and personality development. It does not need a human smile because a dog-like interaction model is already familiar.

AIST PARO is the clinical version of this pattern. The baby seal form avoids realistic human expectations while supporting therapeutic companionship through touch, sound, posture, and learned responses. In elder-care or dementia contexts, a non-human form can be less demanding and less socially confusing than a near-human one.

4. Minimal or faceless robots: task first, personality second

Some robots should not have a face because the task does not need one. A robot that carries laundry, patrols, opens a door, or picks up objects needs readable intent: where it is going, whether it sees you, whether it is paused, and whether it needs help. It does not always need eyes and a mouth.
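To make "readable intent" concrete, here is a minimal Python sketch. It assumes a hypothetical robot that exposes the four states named above: moving, seeing you, paused, and needing help. The state names, light patterns, sounds, and screen text are illustrative assumptions, not any manufacturer's API. The point it shows is the design check: every state gets at least one non-facial cue, so intent stays readable on a robot with no eyes or mouth.

```python
from dataclasses import dataclass
from enum import Enum, auto
from typing import Dict, List, Optional


class RobotState(Enum):
    """Hypothetical internal states a faceless home robot should make visible."""
    MOVING = auto()
    SEES_PERSON = auto()
    PAUSED = auto()
    NEEDS_HELP = auto()


@dataclass(frozen=True)
class Signal:
    """One readable cue: a light pattern, an optional sound, optional screen text."""
    light: str
    sound: Optional[str]
    text: Optional[str]


# Hypothetical mapping: each state gets a light cue, plus sound or text
# where a person might otherwise miss the change.
SIGNALS: Dict[RobotState, Signal] = {
    RobotState.MOVING: Signal(light="steady white toward heading", sound=None, text=None),
    RobotState.SEES_PERSON: Signal(light="brief blue pulse", sound="soft chime", text=None),
    RobotState.PAUSED: Signal(light="slow amber breathing", sound=None, text="Paused"),
    RobotState.NEEDS_HELP: Signal(light="fast amber blink", sound="two-tone alert", text="I need help"),
}


def signal_for(state: RobotState) -> Signal:
    """Return the cue for a state; raises KeyError if the state has no cue."""
    return SIGNALS[state]


def unsignaled_states() -> List[RobotState]:
    """Audit helper: list any states that would leave the user guessing."""
    return [state for state in RobotState if state not in SIGNALS]


if __name__ == "__main__":
    print(signal_for(RobotState.NEEDS_HELP).text)
    print(unsignaled_states())
```

Running it prints the help message and an empty list. Keeping the audit as a separate function mirrors the buyer's question: not "does it have a face?" but "is there any state where I cannot tell what it is doing?"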

1X NEO is interesting because it is a full humanoid home robot without a realistic human face. ui44 tracks NEO at $20,000 for early adopters, 167 cm tall, 30 kg, roughly four hours of battery life, with RGB cameras, depth sensors, tactile skin, microphones, and a soft body designed for safer human interaction. The official 1X page emphasizes chores, Expert Mode for tasks the robot does not yet know, a soft deformable shell, quiet operation, and conversation.

That is a useful home design compromise. NEO has a human-scale body because it needs to operate in rooms built for people. But it does not need a human face to fold laundry or ask for clarification. A minimal head can reduce social pressure while still giving the robot a direction of attention.

Samsung Ballie goes even further away from humanoid expectations. It is a rolling ball-like companion in development, with Gemini, SmartThings integration, home navigation, projector features, pet and family monitoring, reminders, calls, and environmental sensing. Samsung has not announced final pricing or a confirmed release date in the ui44 database. Its face is essentially an interaction style, not an anatomy claim.

A buyer's checklist for robot faces

- Can the robot show uncertainty? A useful face should signal "I did not

- Does the face match the robot's real ability? A realistic social face on

- Can you reduce or disable social features? Households differ. A child may

- Does the face help with safety? Gaze, lights, sounds, and posture should

- Is privacy visible? If the robot has cameras or microphones in its face,

Which face style should you choose?

Here is the practical version:

| Robot face style | Best fit | Watch out for |
|---|---|---|
| Realistic humanoid face | Research, reception, public demos, social-interaction studies | High expectations, uncanny motion, expensive support |
| Screen face | Kids' robots, telepresence, education, home monitoring, status feedback | Too much screen time, weak privacy controls, subscription lock-in |
| Pet-like face | Companionship, dementia care, loneliness support, family rituals | Emotional overclaiming, recurring care plans, limited utility |
| Minimal/faceless body | Chores, patrol, carrying, manipulation, whole-home assistance | Harder to read intent unless lights, voice, or posture are well designed |

If the robot's main job is emotional companionship, a pet-like or stylized face is often safer than a realistic one. If the main job is utility, choose a robot whose body and signals make its next action obvious. If the main job is conversation, do not judge only the face; judge memory, interruption handling, privacy, and whether it admits confusion.

The bottom line

The best home robot face is not the most human one. It is the one that sets the right expectation.

A robot like Ameca proves how compelling lifelike expression can be. Robots like LOVOT, aibo, Miko, Astro, Ballie, and NEO show why consumer designs often avoid full realism. They use eyes, screens, bodies, motion, voice, and softness to communicate without claiming too much.

That is the lesson for buyers: do not buy the face. Buy the behavior behind it. A home robot that looks slightly less human but communicates clearly, respects privacy, and knows when to ask for help will usually be easier to live with than one that smiles beautifully and fails mysteriously.

Database context

Use this article as a privacy verification workflow

Turn the article into a real verification pass

"Do Home Robots Need Human Faces?" already points you toward 11 linked robots, 11 manufacturers, and 7 countries inside the ui44 database. That matters because buyer guidance is easier to apply when you can move straight from a claim or warning to concrete product pages, manufacturer directories, component explainers, and country-level context, instead of treating the article as an isolated opinion piece. The fastest next step is to turn the article into a shortlist workflow: open the linked robot pages, verify which specs are actually published for those models, then compare the surrounding manufacturer and component context before deciding whether the underlying claim changes your buying plan.

For this topic, the useful discipline is to separate the editorial lesson from the catalog evidence. The article gives you the framing, but the robot pages tell you what each product actually ships with today: sensor stack, connectivity methods, listed price, release timing, category, and support-relevant compatibility notes. The manufacturer pages then show whether you are looking at a one-off launch, a broader lineup pattern, or a company that spans multiple categories. That layered workflow reduces the risk of buying on a single marketing phrase or a single support FAQ.

Use the robot pages to confirm which products actually expose cameras, microphones, Wi-Fi, or voice systems, then use the manufacturer pages to decide how much of the privacy question seems product-specific versus brand-wide. On this route cluster, Ameca, Pepper, and Astro form the fastest reality check. If you want a quick working shortlist, open Compare Ameca, Pepper, and Astro next, then keep this article open as the reasoning layer while you compare structured data side by side.

Practical Takeaway

Every robot, manufacturer, category, component, and country reference below resolves to a real ui44 page, keeping the follow-up path grounded in database records rather than generic advice.

Suggested next steps in ui44

- Open Ameca and note the listed sensors, connectivity methods, and voice stack before you interpret any policy claim.

- Cross-check the wider brand context on Engineered Arts so you can see whether the privacy question touches one model or a broader lineup.

- Use the linked component pages to confirm how common the relevant sensors and connectivity layers are across the database.

- Keep a short note of which policy layers you checked, which device features are actually present on the robot page, and which items still depend on region- or app-level confirmation.

- Finish with Compare Ameca, Pepper, and Astro so the policy reading sits next to structured product data.


Robot profiles worth opening next

Use the linked product pages as the evidence layer

The linked robot pages are where this article becomes operational. Instead of asking whether the headline is interesting, use the robot entries to inspect the actual mix of sensors, connectivity options, batteries, pricing, release timing, and stated capabilities attached to the products mentioned in the article. That is the easiest way to see whether the warning or opportunity described here affects one product family, a specific design pattern, or an entire buying lane.

Ameca

Engineered Arts · Research · Active

Ameca is tracked on ui44 as an active research robot from Engineered Arts. The database currently records no listed price (Price TBA), a release date of 2021, undisclosed battery life and charging time, and a published stack that includes 2 × 8 MP eye-mounted cameras, 2 binaural ear microphones, and a 4-channel chest microphone, plus RJ45 Ethernet and Wi-Fi (coming soon/custom).

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Ameca combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Lifelike Facial Expressions, Natural Conversation, and Gesture Recognition with any cloud, app, or voice layers.

Pepper

Aldebaran Robotics · Commercial · Available

Pepper is tracked on ui44 as an available commercial robot from Aldebaran Robotics. The database currently records no listed price (Price TBA), a release date of June 2014, battery life of about 12 hours (shop use), a charging time of about 8 hours 20 minutes, and a published stack that includes two RGB cameras (forehead and mouth), a 3D depth sensor, and four microphones, plus Wi-Fi 802.11 a/b/g/n (2.4/5 GHz) and Ethernet.

For privacy-focused reading, use this page to verify whether Pepper combines sensors and connectivity in a way that could change the in-home data footprint, and compare listed capabilities such as Emotion Recognition, Facial Expression Analysis, and Natural Conversation with any cloud, app, or voice layers, including Multilingual Speech Recognition & Synthesis.

Astro

Amazon · Security & Patrol · Active

Astro is tracked on ui44 as an active security & patrol robot from Amazon. The database currently records a listed price of $1,599, a release date of 2021, battery life and charging time that are not officially disclosed, and a published stack that includes a 5MP bezel camera, a 1080p periscope camera (132° FOV), and infrared vision, plus Wi-Fi 802.11ac and Bluetooth.

For privacy-focused reading, use this page to verify whether Astro combines sensors and connectivity in a way that could change the in-home data footprint, and compare listed capabilities such as Autonomous Home Patrol, Visual ID (face recognition), and Remote Home Monitoring with any cloud, app, or voice layers, including Amazon Alexa.

Miko 3

Miko · Companions · Available

Miko 3 is tracked on ui44 as an available companion robot from Miko. The database currently records a listed price of $299, a release date of 2022, battery life of 5–7 hours of active use (up to 12 hours standby), a charging time of about 4 hours (15W USB-C adapter), and a published stack that includes a time-of-flight range sensor, odometric sensors, and dual MEMS microphones, plus Wi-Fi and Bluetooth.

For privacy-focused reading, use this page to verify whether Miko 3 combines sensors and connectivity in a way that could change the in-home data footprint, and compare listed capabilities such as AI-Powered Conversations, Face Recognition, and Voice Recognition with any cloud, app, or voice layers.

Misty II

Misty Robotics · Companions · Available

Misty II is tracked on ui44 as an available companion robot from Misty Robotics. The database currently records a listed price of €17.067, a release date of 2018, battery life of up to 2 hours (max) or 30–60 minutes (heavy use), a charging time that is not publicly specified, and a published stack that includes a 3D Occipital sensor (mapping), a 4K Sony camera, and eight time-of-flight sensors, plus Wi-Fi.

For privacy-focused reading, use this page to verify whether Misty II combines sensors and connectivity in a way that could change the in-home data footprint, and compare listed capabilities such as Autonomous navigation, Dynamic obstacle response, and 3D mapping with any cloud, app, or voice layers.


Manufacturer context behind the article

Check whether this is one product story or a broader company pattern

Manufacturer pages add the privacy context that individual product pages cannot show on their own. They help you check whether cameras, microphones, cloud accounts, app controls, and policy assumptions appear across a broader lineup or stay tied to one specific product story.

Engineered Arts

ui44 currently tracks 1 robot from Engineered Arts across 1 category. The company is listed under the UK, and its current catalog footprint on ui44 is Ameca.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Research as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Aldebaran Robotics

ui44 currently tracks 1 robot from Aldebaran Robotics across 1 category. The company is listed under France, and its current catalog footprint on ui44 is Pepper.

For Aldebaran Robotics, the category mix currently points toward Commercial as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Amazon

ui44 currently tracks 1 robot from Amazon across 1 category. The company is listed under the USA, and its current catalog footprint on ui44 is Astro.

For Amazon, the category mix currently points toward Security & Patrol as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Miko

ui44 currently tracks 2 robots from Miko across 1 category. The company is listed under India, and its current catalog footprint on ui44 includes Miko 3 and Miko Mini.

For Miko, the category mix currently points toward Companions as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.


Broaden the scan without leaving the database

Categories, components, and countries add the wider context

Category framing

Category pages are useful when the article touches a buying pattern that shows up across brands. A category route helps you confirm whether the linked products sit in a narrow niche or whether the same question should be tested across a larger field of alternatives.

Research

The Research category page currently groups 23 tracked robots from 18 manufacturers. ui44 describes this lane as: Academic and research robotics platforms pushing the boundaries of what machines can learn and do.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or sit at one edge of the market. Useful starting examples currently include HRP-4C, HRP-5P, and NAO6.

Commercial

The Commercial category page currently groups 23 tracked robots from 20 manufacturers. ui44 describes this lane as: Delivery robots, warehouse automation, hospitality service bots, and other robots built for business operations.

Useful starting examples in this lane currently include aeo, Pepper, and ANYmal D.

Country and ecosystem context

Country pages give extra context when support practices, launch sequencing, regulatory posture, or manufacturer mix matter. They are not a substitute for model-level verification, but they do help you see which ecosystems cluster together and which manufacturers sit in the same regional field when you broaden the search beyond the article headline.

UK

The UK route currently groups 1 tracked robot from 1 manufacturer in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers such as Engineered Arts make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

France

The France route currently groups 5 tracked robots from 4 manufacturers in ui44.

Its manufacturers, such as Pollen Robotics, Aldebaran / Maxtronics, and Aldebaran Robotics, let you broaden the scan within the same regional context.

USA

The USA route currently groups 16 tracked robots from 12 manufacturers in ui44.

Its manufacturers, such as Boston Dynamics, Figure AI, and Tesla, let you broaden the scan within the same regional context.


Questions to answer before you move from reading to buying

A follow-up FAQ built from the entities already linked in this article

Frequently Asked Questions

Which page should I open first after reading “Do Home Robots Need Human Faces?”?

Start with Ameca. That gives you a concrete product anchor for the article’s main claim. From there, branch into the manufacturer and component pages so you can tell whether the article is describing one specific model, a repeated brand pattern, or a wider technology issue that affects multiple shortlist options.

How do the manufacturer pages change the buying decision?

Manufacturer pages such as Engineered Arts help you zoom out from one article and one product. On ui44 they show lineup breadth, category spread, and the neighboring robots tied to the same company. That context is useful when you are deciding whether a risk belongs to a single model, whether it shows up across a brand's portfolio, and whether you should keep looking at alternatives before committing.

When should I switch from reading to side-by-side comparison?

Move into Compare Ameca, Pepper, and Astro as soon as you understand the article’s main warning or promise. The article explains what to watch for, but the compare view is where you can check whether price, status, battery life, connectivity, sensors, and category fit still make the robot a good match for your own home and budget.


Where to go next in ui44

Keep the research chain inside the database

If you want to keep going, these follow-on pages give you the cleanest expansion path from article to research session. Open the comparison route first if you are deciding between products today. Open the manufacturer, category, and component routes if you still need to understand the broader pattern behind the claim.

Written by

ui44 Team

Published April 30, 2026
