A home robot that can learn chores from YouTube sounds like the cleanest possible answer to the home-robot problem. Instead of hiring teleoperators, building motion-capture studios, or hand-programming every drawer, pan, and towel, the robot would watch a video and copy the useful parts.

That idea moved from sci-fi pitch to practical buyer question after Donut Robotics showed its compact cinnamon mini humanoid at SusHi Tech Tokyo 2026. Nikkei reported a 130 cm, roughly 35 kg robot with an expected price around ¥12 million, and quoted the company saying its VLA system can learn from YouTube-like video sources. Donut's own PR Times release says cinnamon mini's advantage is learning motion from video rather than conventional motion capture.

The claim is worth taking seriously, but not so literally that you imagine your future robot watching one cooking tutorial and safely making dinner that night. Video learning is one ingredient in a much bigger stack: robot demonstrations, action labels, safety constraints, real-world failures, and hardware that can actually repeat the motion.

Can a robot really learn a chore from a YouTube video?

In the narrow sense, yes: a robot-learning model can use ordinary video as training signal. Video contains objects, hand motions, timing, tool use, and the sequence of steps in a task. A model can learn that a towel is pinched at the corner, that a pan is tilted before food slides, or that a drawer is pulled before an object is placed inside.

In the buyer-relevant sense, not by itself. A YouTube video usually does not contain the data a robot controller needs:

- the camera calibration behind the shot;

- the exact 3D position of the hand and objects;

- joint angles, motor commands, and gripper force;

- failed attempts that were edited out;

- force and tactile information when something is touched;

- whether the motion is safe for a specific robot body in a specific room.

That is why the best phrase is not "learn from YouTube" but video-assisted skill learning. Public video can help a robot understand what a task looks like. It does not automatically tell a 30 kg humanoid how hard to pull a stuck cabinet handle, how to avoid a child walking nearby, or what to do when the object is heavier than expected.
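To make the gap concrete, here is a minimal Python sketch that compares what an ordinary video frame carries with what an instrumented demonstration might carry. The `ControlSample` schema, its field names, and the example values are all hypothetical and chosen for illustration; they are not taken from any specific robot stack.

```python
from dataclasses import dataclass, fields
from typing import List, Optional, Tuple


@dataclass
class ControlSample:
    """One time step of data a manipulation policy could train on (hypothetical schema)."""
    rgb_frame_id: int
    camera_calibration: Optional[Tuple[float, ...]] = None  # e.g. fx, fy, cx, cy
    hand_position_m: Optional[Tuple[float, float, float]] = None
    joint_angles_rad: Optional[Tuple[float, ...]] = None
    gripper_force_n: Optional[float] = None
    tactile_contact: Optional[bool] = None


def missing_signals(sample: ControlSample) -> List[str]:
    """Return the names of the signals this sample does not carry."""
    return [f.name for f in fields(sample) if getattr(sample, f.name) is None]


# An ordinary public video frame: pixels only.
youtube_frame = ControlSample(rgb_frame_id=0)

# An instrumented demonstration frame (illustrative values only).
instrumented_frame = ControlSample(
    rgb_frame_id=0,
    camera_calibration=(600.0, 600.0, 320.0, 240.0),
    hand_position_m=(0.42, -0.10, 0.85),
    joint_angles_rad=(0.0, 0.5, -0.3),
    gripper_force_n=4.0,
    tactile_contact=True,
)

print(missing_signals(youtube_frame))
print(missing_signals(instrumented_frame))
```

The first call lists every control-relevant field as missing, while the second returns an empty list. Edited-out failures and room-specific safety are not fields at all in this sketch, which is part of the point: some of the missing information cannot be recovered per frame.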

Donut Robotics is interesting because it is not only talking about video as marketing. The company says cinnamon mini learns motion from video instead of motion capture, while its product page for the larger cinnamon 1 describes a Japanese-brand bipedal humanoid with VLM capability, in-house VLA work, silent gesture control, and factory/construction-site positioning. That makes the claim more concrete than a generic AI slogan — but it is still early, expensive, and not a consumer home product.

What has already worked in real homes?

The strongest public evidence for home video learning is not YouTube. It is Dobb-E, an open-source household manipulation project built around Hello Robot's Stretch platform.

Dobb-E collected demonstrations in real New York apartments using a simple tool: a reacher-grabber stick with an iPhone and 3D-printed parts. The project reports a Homes of New York dataset with 22 homes, 216 environments, 5,620 trajectories, 13 hours of interaction, and 1.5 million frames. In deployment, it attempted 109 tasks across 10 NYC homes, reporting an 81% success rate and about 20 minutes to learn a new task.
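A quick back-of-the-envelope calculation shows how small each demonstration is. The sketch below only uses the rounded figures quoted above, so the derived values are approximations and not numbers reported by the project.

```python
# Figures as quoted in this article (rounded by the source).
ENVIRONMENTS = 216
TRAJECTORIES = 5_620
INTERACTION_HOURS = 13
FRAMES = 1_500_000
DEPLOY_TASKS = 109
DEPLOY_SUCCESS_RATE = 0.81

seconds = INTERACTION_HOURS * 3600

avg_fps = FRAMES / seconds                  # average recorded frame rate
avg_traj_seconds = seconds / TRAJECTORIES   # average length of one demonstration
traj_per_env = TRAJECTORIES / ENVIRONMENTS  # demonstrations per environment
implied_successes = round(DEPLOY_TASKS * DEPLOY_SUCCESS_RATE)

print(f"{avg_fps:.1f} frames per second on average")       # 32.1
print(f"{avg_traj_seconds:.1f} s per trajectory")           # 8.3
print(f"{traj_per_env:.1f} trajectories per environment")   # 26.0
print(f"about {implied_successes} of {DEPLOY_TASKS} tasks succeeded")  # about 88
```

Read this way, the dataset is thousands of short clips of a few seconds each rather than long tutorials, which is a very different shape from typical YouTube footage.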

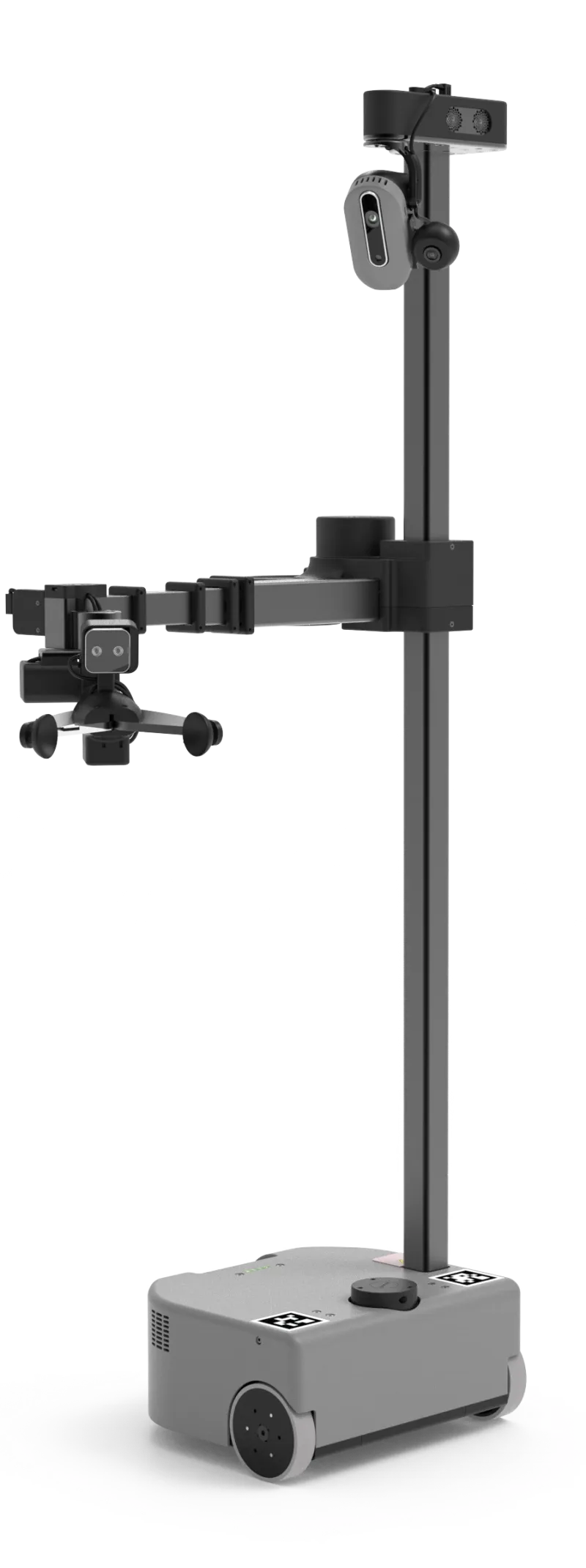

That is exactly why Hello Robot Stretch 3 matters in the ui44 database even though it does not look like a sci-fi humanoid. Stretch 3 is a $24,950 mobile manipulator with a 141 cm overall height, 24.5 kg weight, RGB-D cameras, LiDAR, ROS 2 support, a Python SDK, and a 2 kg payload. It is built around reachable household manipulation, not athletic walking.

Dobb-E's lesson for buyers is practical: video learning becomes more believable when the video is collected for the robot-learning problem. The Stick captured RGB and depth video with action annotations for the gripper pose and opening. That is very different from a cooking channel video shot for humans, where the camera angle may hide the hand, skip the failed first attempt, or cut between steps.
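The value of action annotations is easier to see in code. The sketch below assumes a simplified frame record with a gripper position and an opening value, and converts consecutive frames into relative actions a policy could imitate. The class names and the delta-action format are illustrative assumptions, not Dobb-E's actual data format, and gripper orientation is omitted to keep the example short.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class AnnotatedFrame:
    """Hypothetical annotated frame: timestamp, gripper position, gripper opening."""
    t: float
    gripper_xyz_m: Tuple[float, float, float]
    gripper_opening: float  # 0.0 = closed, 1.0 = fully open


@dataclass(frozen=True)
class Action:
    """Relative motion between two consecutive frames."""
    dt: float
    delta_xyz_m: Tuple[float, float, float]
    delta_opening: float


def frames_to_actions(frames: List[AnnotatedFrame]) -> List[Action]:
    """Turn N annotated frames into N-1 relative actions."""
    actions = []
    for prev, cur in zip(frames, frames[1:]):
        delta = tuple(c - p for c, p in zip(cur.gripper_xyz_m, prev.gripper_xyz_m))
        actions.append(
            Action(cur.t - prev.t, delta, cur.gripper_opening - prev.gripper_opening)
        )
    return actions


demo = [
    AnnotatedFrame(0.0, (0.0, 0.0, 0.5), 1.0),
    AnnotatedFrame(0.5, (0.25, 0.0, 0.5), 1.0),  # reach forward
    AnnotatedFrame(1.0, (0.25, 0.0, 0.5), 0.5),  # start closing the gripper
]
actions = frames_to_actions(demo)
for a in actions:
    print(a)
```

A cooking-channel clip offers no equivalent of `gripper_xyz_m` or `gripper_opening`, so there is nothing to difference into actions without an extra estimation step.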

Why do robotics labs still need huge robot datasets?

Because the same chore can look easy in a video and be hard for a robot body.

Open X-Embodiment is a useful scale marker. The collaboration assembled 1M+ real robot trajectories across 22 robot embodiments, pooling 60 datasets from 34 labs and covering 527 skills. Its RT-X models showed positive transfer across robots, including a reported 50% improvement for RT-1-X in small-data settings and 3x better emergent-skill performance for RT-2-X compared with RT-2 in the project evaluation.

That scale helps explain why one viral robot demo is not enough. A home robot has to generalize across different counters, cabinets, lighting, flooring, object sizes, and human interruptions. A video model can learn broad concepts from internet video, but the action policy still has to be grounded in a robot's own sensors and actuators.

Google DeepMind's Gemini Robotics announcement makes the same point from another direction. Gemini Robotics is a VLA model: it adds physical actions as an output modality so a model can turn visual/language understanding into robot control. DeepMind emphasizes generality, interactivity, dexterity, and adaptation across multiple embodiments. It also notes that Gemini Robotics was trained primarily on the ALOHA 2 bi-arm platform and then demonstrated on other robots, including Franka-style arms and Apptronik's Apollo humanoid.

The pattern is clear: the field is moving toward general robot brains, but those brains are still trained and validated on robot-specific data. YouTube can be part of the pretraining diet. It is not a substitute for contact-rich household trials.

Which home robots are closest to benefiting?

The robots most likely to benefit from video-based skill learning are not necessarily the most human-looking ones. They need cameras, manipulation hardware, enough local compute, safe control loops, and a learning pipeline that records successes and failures.

1X NEO is the obvious home-focused example. ui44 tracks NEO as a $20,000 pre-order humanoid, 167 cm tall, 30 kg, with about 4 hours of battery life, RGB cameras, depth sensors, tactile skin, and a soft body designed for human coexistence. 1X says Redwood can jointly control locomotion and manipulation, navigate to objects of interest, handle never-before-seen objects in unique locations, and train on both successes and failures.

That is the right direction for a robot that might learn from video. The important buyer question is how much of the learning happens before shipment, how much can happen inside a real home, and where human teleoperation or "Expert Mode" still enters the loop.

Figure 03 is not a consumer purchase today, but it is a useful comparison point. Figure's current public copy is home-oriented — “the future of home help,” a general-purpose humanoid for every day, and Helix AI for unpredictable home environments — while ui44 still tracks no public consumer price or order path. The database tracks Figure 03 as a 173 cm, 61 kg humanoid with Helix VLA, stereo/depth vision, force sensors, tactile arrays, and a 20 kg payload. Those hardware signals — tactile sensing, force feedback, dexterous manipulation — are exactly what video-only learning lacks.

Unitree G1 and Unitree R1 show the opposite side of the market. G1 starts at $13,500 and exposes depth sensing, 3D LiDAR, optional dexterous hands, and optional Jetson Orin compute on EDU versions. R1 starts from $4,900 as a pre-order and is much more accessible, but ui44 treats it as locomotion-first: cartwheels, handstands, push recovery, voice/image interaction, and optional hands on EDU rather than a finished household chore worker.

RobotEra STAR1 has stronger manipulation hardware claims: 171 cm, 63 kg, 55 degrees of freedom, 12-DOF dexterous hands, a 20 kg payload, and ERA-42 embodied AI. Its public target applications include manufacturing, logistics, commercial services, and home care. That makes it relevant to video-learning claims, but the buyer should still ask for evidence of repeatable home tasks, not just high-DOF hands.

| Robot | ui44 database signal | What it means for video-learned chores |
|---|---|---|
| Hello Robot Stretch 3 | $24,950, RGB-D cameras, LiDAR, ROS 2/Python SDK, 2 kg payload | Best public research bridge between home demonstrations and actual household manipulation. |
| 1X NEO | $20,000 pre-order, soft 167 cm humanoid, RGB/depth, tactile skin, Redwood VLA | Strongest consumer-facing humanoid angle, but autonomy boundaries still matter. |
| Figure 03 | Helix VLA, force sensors, tactile arrays, 20 kg payload, no public price | Good evidence of the hardware needed for contact-rich learning, not a home buy today. |
| Unitree G1 | $13,500 research humanoid, optional dexterous hands and Jetson Orin | Useful platform for experimentation; not packaged as a chore-learning appliance. |
| RobotEra STAR1 | 55 DoF, 12-DOF hands, ERA-42 AI, home-care claims | Interesting hardware, but needs public proof of household task reliability. |

What should a buyer ask before trusting a video-learning claim?

Start with the demo format. If a company says the robot learned from video, ask whether the video was ordinary public footage, a robot-collected demonstration, a teleoperated rollout, simulation, or a curated internal dataset. Those are not the same thing.

Then ask what happened after the robot watched the video. Did it perform the task in the same room or a new room? With the same object or a different object? Was the attempt uncut? How many failures were edited out? Did a remote operator intervene? Was the result autonomous or shared autonomy?

For home robots, the most useful evidence looks boring:

- Uncut repeated attempts. One perfect clip is weaker than ten messy attempts with recovery.

- New-object tests. The robot should handle a different mug, towel, box, or handle than the one in training.

- New-room tests. A robot that only works in a lab kitchen has not learned a home chore.

- Contact sensing. Force, tactile, depth, or compliant control matters when the robot touches the world.

- Failure recovery. The robot should notice a missed grasp, slow down, retry, or ask for help.

- Clear autonomy labels. Teleoperation is useful, but it should not be hidden inside an autonomy demo.

- Privacy explanation. If your robot learns from your home videos, who stores them, who reviews them, and how are they deleted?
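The checklist above can be turned into numbers if a vendor shares an uncut attempt log. The following is a minimal sketch under that assumption; the `Attempt` record and the ten example attempts are invented for illustration and do not describe any real robot.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Attempt:
    """One uncut attempt at a chore (hypothetical log format)."""
    succeeded: bool
    new_object: bool
    new_room: bool
    operator_intervened: bool


def autonomous_success_rate(attempts: List[Attempt]) -> float:
    """Share of attempts that succeeded without any remote operator help."""
    if not attempts:
        return 0.0
    clean = sum(1 for a in attempts if a.succeeded and not a.operator_intervened)
    return clean / len(attempts)


def held_out(attempts: List[Attempt]) -> List[Attempt]:
    """Attempts that used a new object or a new room."""
    return [a for a in attempts if a.new_object or a.new_room]


log = [
    Attempt(True, False, False, False),
    Attempt(True, False, False, False),
    Attempt(True, True, False, False),
    Attempt(False, True, False, False),
    Attempt(True, False, True, True),   # succeeded, but with operator help
    Attempt(True, True, True, False),
    Attempt(False, False, True, False),
    Attempt(True, False, False, True),  # succeeded, but with operator help
    Attempt(True, False, False, False),
    Attempt(False, True, True, False),
]

raw_rate = sum(a.succeeded for a in log) / len(log)
print(f"raw success:        {raw_rate:.0%}")                                   # 70%
print(f"autonomous success: {autonomous_success_rate(log):.0%}")              # 50%
print(f"autonomous on new objects or rooms: {autonomous_success_rate(held_out(log)):.0%}")  # 33%
```

In this made-up log, a headline figure of 70% drops to 50% once operator-assisted runs are excluded, and to 33% on new objects or rooms. That spread is exactly what hidden teleoperation and same-room demos can conceal.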

This is where a database view helps. A robot with only cameras and vague "AI" language should be treated differently from a robot with disclosed sensors, payload, battery life, local compute, and a credible learning pipeline. Hardware specs do not guarantee autonomy, but missing specs make autonomy claims harder to trust.
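As a rough illustration of that database view, the sketch below records only the values stated in this article for five robots and lists which fields are left unstated. The field names are hypothetical, and `None` means this article does not give the value; it does not mean the manufacturer has never published it.

```python
from typing import Dict, List, Optional

FIELDS = ("price_usd", "payload_kg", "sensors")

# Values as stated in this article; None = not stated here.
ROBOTS: Dict[str, Dict[str, Optional[object]]] = {
    "Hello Robot Stretch 3": {
        "price_usd": 24_950, "payload_kg": 2, "sensors": ["RGB-D cameras", "LiDAR"],
    },
    "1X NEO": {
        "price_usd": 20_000, "payload_kg": None,
        "sensors": ["RGB cameras", "depth sensors", "tactile skin"],
    },
    "Figure 03": {
        "price_usd": None, "payload_kg": 20,
        "sensors": ["stereo/depth vision", "force sensors", "tactile arrays"],
    },
    "Unitree G1": {
        "price_usd": 13_500, "payload_kg": None, "sensors": ["depth sensing", "3D LiDAR"],
    },
    "RobotEra STAR1": {
        "price_usd": None, "payload_kg": 20, "sensors": None,
    },
}


def unstated_fields(spec: Dict[str, Optional[object]]) -> List[str]:
    """Return the fields for which no value is recorded."""
    return [name for name in FIELDS if spec.get(name) is None]


for name, spec in ROBOTS.items():
    gaps = unstated_fields(spec)
    print(f"{name}: {'nothing missing' if not gaps else 'check ' + ', '.join(gaps)}")
```

A real verification pass would pull these fields from the robot pages rather than from an article summary, but the habit is the same: list the gaps first, then weigh the autonomy claim.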

Will home robots eventually watch tutorials like people do?

Probably, but as one layer rather than the whole learning loop.

A useful home robot might watch a cooking or cleaning video to understand the sequence: open the drawer, take the cloth, wipe the spill, rinse the cloth, put it back. Then it would ground that sequence in its own map, its gripper, its force limits, and the objects in your house. It might ask a person to demonstrate once, use a few teleoperated corrections, compare against fleet experience from other homes, and only then attempt the chore autonomously.
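That layered loop can be pictured as a gate in front of autonomous execution. The sketch below is a toy model with invented names (`ChoreSkill`, `ready_for_autonomy`) and an assumed threshold of one human demonstration; it is not any vendor's actual pipeline.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ChoreSkill:
    """A chore whose step order came from video (hypothetical structure)."""
    steps_from_video: List[str]
    grounded_steps: Set[str] = field(default_factory=set)
    human_demonstrations: int = 0

    def ground(self, step: str) -> None:
        """Mark a step as verified against this robot's map, gripper, and force limits."""
        if step not in self.steps_from_video:
            raise ValueError(f"unknown step: {step}")
        self.grounded_steps.add(step)


def ready_for_autonomy(skill: ChoreSkill, min_demonstrations: int = 1) -> bool:
    """Allow autonomous attempts only when every step is grounded and demonstrated."""
    all_grounded = set(skill.steps_from_video) <= skill.grounded_steps
    return all_grounded and skill.human_demonstrations >= min_demonstrations


wipe_spill = ChoreSkill(
    ["open the drawer", "take the cloth", "wipe the spill", "rinse the cloth", "put it back"]
)
print(ready_for_autonomy(wipe_spill))  # False: video alone gives the sequence only

for step in wipe_spill.steps_from_video:
    wipe_spill.ground(step)
wipe_spill.human_demonstrations = 1
print(ready_for_autonomy(wipe_spill))  # True: grounded and demonstrated once
```

The video supplies `steps_from_video` and nothing else. Everything that flips the gate to `True` comes from the robot's own home, body, and human supervision.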

That future is more plausible than hand-programming every household action. It is also slower than the headline version. Homes are varied, messy, private, and full of edge cases. Robots do not get to pause reality while they infer the next step. They have to move safely in contact with people and fragile things.

So the answer is: yes, video learning matters. Donut Robotics' cinnamon mini claim is a useful signal that humanoid companies see video as a shortcut around expensive motion capture. Dobb-E shows home demonstrations can teach real manipulation tasks with surprisingly little task-specific data. Open X-Embodiment and Gemini Robotics show why large, cross-robot datasets and VLA models are becoming central.

But if you are buying a home robot, do not ask only whether it can "learn from YouTube." Ask what data the robot actually learns from, what it can do without a hidden human, and what happens when the first attempt fails.

The bottom line

YouTube-style video may help home robots learn faster, especially for understanding the order and intent of household chores. It is not magic autonomy. The robot still needs action labels, embodiment-specific training, contact sensing, safety rules, and real household practice.

For now, the most credible path is hybrid: internet-scale video for broad understanding, structured home demonstrations for real objects, robot fleet logs for failures, and cautious local control for safety. That is less glamorous than "watch one tutorial and do the chore," but it is the path that could actually make home robots useful.

Database context

Use this article as a privacy verification workflow

Turn the article into a real verification pass

Can Home Robots Learn Chores From YouTube? already points you toward 6 linked robots, 6 manufacturers, and 3 countries inside the ui44 database. That matters because strong buyer guidance is easier to apply when you can move immediately from a claim or warning into concrete product pages, manufacturer directories, component explainers, and country-level context instead of treating the article as an isolated opinion piece. The fastest next step is to turn the article into a shortlist workflow: open the linked robot pages, verify which specs are actually published for those models, then compare the surrounding manufacturer and component context before you decide whether the underlying claim changes your buying plan.

For this topic, the useful discipline is to separate the editorial lesson from the catalog evidence. The article gives you the framing, but the robot pages tell you what each product actually ships with today: sensor stack, connectivity methods, listed price, release timing, category, and support-relevant compatibility notes. The manufacturer pages then show whether you are looking at a one-off launch, a broader lineup pattern, or a company that spans multiple categories. That layered workflow reduces the risk of buying on a single marketing phrase or a single support FAQ.

Use the robot pages to confirm which products actually expose cameras, microphones, Wi-Fi, or voice systems, then use the manufacturer pages to decide how much of the privacy question seems product-specific versus brand-wide. On this route cluster, Stretch 3, NEO, and Figure 03 form the fastest reality check. If you want a quick working shortlist, open Compare Stretch 3, NEO, and Figure 03 next, then keep this article open as the reasoning layer while you compare structured data side by side.

Practical Takeaway

Every robot, manufacturer, category, component, and country reference below resolves to a real ui44 page, keeping the follow-up path grounded in database records rather than generic advice.

Suggested next steps in ui44

- Open Stretch 3 and note the listed sensors, connectivity methods, and voice stack before you interpret any policy claim.

- Cross-check the wider brand context on Hello Robot so you can see whether the privacy question touches one model or a broader lineup.

- Use the linked component pages to confirm how common the relevant sensors and connectivity layers are across the database.

- Keep a short note of which policy layers you checked, which device features are actually present on the robot page, and which items still depend on region- or app-level confirmation.

- Finish with Compare Stretch 3, NEO, and Figure 03 so the policy reading sits next to structured product data.


Robot profiles worth opening next

Use the linked product pages as the evidence layer

The linked robot pages are where this article becomes operational. Instead of asking whether the headline is interesting, use the robot entries to inspect the actual mix of sensors, connectivity options, batteries, pricing, release timing, and stated capabilities attached to the products mentioned in the article. That is the easiest way to see whether the warning or opportunity described here affects one product family, a specific design pattern, or an entire buying lane.

Stretch 3

Hello Robot · Home Assistants · Active

Stretch 3 is tracked on ui44 as an active Home Assistants robot from Hello Robot. The database currently records a listed price of $24,950, a release date of 2024, 2–5 hours of battery life, an undisclosed charging time, and a published stack that includes Intel D405 RGBD Camera (gripper), Intel D435if RGBD Camera (head), and Wide-Angle RGB Camera (head) plus Wi-Fi and Ethernet.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Stretch 3 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Mobile Manipulation, Autonomous Navigation, and Teleoperation (Web / Gamepad / Dexterous) with any cloud, app, or voice layers.

NEO

1X Technologies · Humanoid · Pre-order

NEO is tracked on ui44 as a pre-order humanoid robot from 1X Technologies. The database currently records a listed price of $20,000, a release date of 2025-10-28, ~4 hours of battery life, an undisclosed charging time, and a published stack that includes RGB Cameras, Depth Sensors, and Tactile Skin plus Wi-Fi and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether NEO combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Household Chores, Tidying Up, and Safe Human Interaction with any cloud, app, or voice layers.

Figure 03

Figure AI · Humanoid · Active

Figure 03 is tracked on ui44 as an active humanoid robot from Figure AI. The database currently records no listed price yet (Price TBA), a release date of 2025-10-09, ~5 hours of battery life, an undisclosed charging time, and a published stack that includes Stereo Vision, Depth Cameras, and Force Sensors plus Wi-Fi and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Figure 03 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Complex Manipulation, Warehouse Work, and Manufacturing Tasks with any cloud, app, or voice layers.

G1

Unitree · Humanoid · Available

G1 is tracked on ui44 as an available humanoid robot from Unitree. The database currently records a listed price of $13,500, a release date of 2024, ~2 hours of battery life, an undisclosed charging time, and a published stack that includes Depth Camera, 3D LiDAR, and 4 Microphone Array plus Wi-Fi 6 and Bluetooth 5.2.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether G1 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking, Object Manipulation, and Dexterous Hands (optional Dex3-1) with any cloud, app, or voice layers.

R1

Unitree Robotics · Humanoid · Pre-order

R1 is tracked on ui44 as a pre-order humanoid robot from Unitree Robotics. The database currently records a listed price of $4,900, a release date of 2025, ~1 hour of battery life (mixed activity), a charging time that is not officially disclosed, and a published stack that includes Binocular Cameras, 4-Mic Array, and Dual 6-Axis IMU plus Wi-Fi and Bluetooth 5.2.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether R1 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking & Running, Cartwheels & Handstands, and Push Recovery with any cloud, app, or voice layers, including UnifoLM (voice + image commands).


Manufacturer context behind the article

Check whether this is one product story or a broader company pattern

Manufacturer pages add the privacy context that individual product pages cannot show on their own. They help you check whether cameras, microphones, cloud accounts, app controls, and policy assumptions appear across a broader lineup or stay tied to one specific product story.

Hello Robot

ui44 currently tracks 1 robot from Hello Robot across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Stretch 3.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Home Assistants as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

1X Technologies

ui44 currently tracks 2 robots from 1X Technologies across 1 category. The company is grouped under Norway, and the current catalog footprint on ui44 includes NEO, EVE.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Figure AI

ui44 currently tracks 2 robots from Figure AI across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Figure 03, Figure 02.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Unitree

ui44 currently tracks 2 robots from Unitree across 1 category. The company is grouped under China, and the current catalog footprint on ui44 includes H1, G1.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.


Broaden the scan without leaving the database

Categories, components, and countries add the wider context

Category framing

Category pages are useful when the article touches a buying pattern that shows up across brands. A category route helps you confirm whether the linked products sit in a narrow niche or whether the same question should be tested across a larger field of alternatives.

Home Assistants

The Home Assistants category page currently groups 12 tracked robots from 12 manufacturers. ui44 describes this lane as: Arm-based household helpers — laundry folders, kitchen robots, and mobile manipulators that handle physical tasks at home.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include Robody, Futuring 2 (F2), Stretch 3.

Humanoid

The Humanoid category page currently groups 78 tracked robots from 55 manufacturers. ui44 describes this lane as: Full-size bipedal humanoid robots designed to work alongside humans. From factory floors to household tasks, these machines represent the cutting edge of robotics.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include NEO, EVE, Mornine M1.

Country and ecosystem context

Country pages give extra context when support practices, launch sequencing, regulatory posture, or manufacturer mix matter. They are not a substitute for model-level verification, but they do help you see which ecosystems cluster together and which manufacturers sit in the same regional field when you broaden the search beyond the article headline.

USA

The USA route currently groups 17 tracked robots from 12 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like Boston Dynamics, Figure AI, Richtech Robotics make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

Norway

The Norway route currently groups 2 tracked robots from 1 manufacturer in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like 1X Technologies make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

China

The China route currently groups 52 tracked robots from 15 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like AGIBOT, Unitree Robotics, Roborock make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.


Questions to answer before you move from reading to buying

A follow-up FAQ built from the entities already linked in this article

Frequently Asked Questions

Which page should I open first after reading “Can Home Robots Learn Chores From YouTube?”?

Start with Stretch 3. That gives you a concrete product anchor for the article’s main claim. From there, branch into the manufacturer and component pages so you can tell whether the article is describing one specific model, a repeated brand pattern, or a wider technology issue that affects multiple shortlist options.

How do the manufacturer pages change the buying decision?

Manufacturer pages such as Hello Robot's help you zoom out from one article and one product. On ui44 they show lineup breadth, category spread, and the neighboring robots tied to the same company. That context is useful when you are deciding whether a risk belongs to a single model, whether it shows up across a brand’s portfolio, and whether you should keep looking at alternatives before committing.

When should I switch from reading to side-by-side comparison?

Move into Compare Stretch 3, NEO, and Figure 03 as soon as you understand the article’s main warning or promise. The article explains what to watch for, but the compare view is where you can check whether price, status, battery life, connectivity, sensors, and category fit still make the robot a good match for your own home and budget.


Where to go next in ui44

Keep the research chain inside the database

If you want to keep going, these follow-on pages give you the cleanest expansion path from article to research session. Open the comparison route first if you are deciding between products today. Open the manufacturer, category, and component routes if you still need to understand the broader pattern behind the claim.

Written by

ui44 Team

Published May 8, 2026
