That is why simulated kitchens are worth watching. If robotics teams can train and test policies in physics-ready kitchens, living rooms, bathrooms, drawers, doors, clutter, and contact-rich chores, simulation becomes more than a demo shortcut. It becomes a filter for which home robot claims deserve attention.

The question for buyers is simple: did this robot practice in something that looks and behaves like my home, or did it only learn a neat table trick?

Why simulated kitchens matter now

Robotics has always used simulation, but the bar is changing. A visual scene of a kitchen is not enough. A useful home-robot simulator needs objects with mass, friction, collision geometry, doors that swing, drawers that slide, cabinet handles that can be missed, and layouts that vary from one apartment to the next.

That is the shift behind tools such as NVIDIA Isaac Sim, which NVIDIA describes as an open-source reference framework for robotics simulation, testing, and synthetic data generation in physically based virtual environments. Isaac Sim can ingest CAD, URDF, and real-world capture data into USD, then let teams assign materials, physics, robot models, sensors, and testing pipelines. Isaac Lab adds a framework for training robot policies at scale across humanoids, manipulators, and mobile robots.

The newer home-relevant piece is the environment layer. Physical Imagine says it is building a world-generation layer for physical AI with 25,000+ real-world products, OpenUSD delivery, sub-millimeter geometry, collision meshes, physics properties, and articulated kitchens, living rooms, bathrooms, and retail scenes. Its kitchen demo exports SimReady USD files that drop into Isaac Sim with joints and collisions intact. The company's own page lists kitchen manipulation environments with opening drawers and cabinets, living-room navigation scenes with semantic labels and nav meshes, and bathroom fixtures with articulated faucet handles and medicine cabinets.

That matters because most household failures are boring and physical. The robot bumps the toe kick. The drawer opens halfway. The gripper pushes a cup instead of closing around it. A towel changes shape. A chair leg blocks the clean path. A policy trained only on videos can recognize the task but still fail when contact forces get weird.

What is VFA, and why is force different from video?

A lot of robot-learning hype is built around VLA: vision-language-action models. The robot sees the scene, receives an instruction, and outputs an action. VLA is important, but homes are not just pixels and words. Homes push back.

Eka Robotics is making that point directly. In WIRED's profile of Eka, the company describes a vision-force-action model that learns from simulation with physics such as mass and inertia, not only camera pixels. Eka's founders say the system learns how motion changes the visual scene and how movement speed, weight, and contact affect objects in the gripper. WIRED's examples include a robot screwing in a light bulb, recovering from awkward grasps on keys and brushes, and placing irregular chicken nuggets into moving containers.

None of that proves a consumer home robot is ready. Eka is not selling a kitchen assistant for your counter. But the framing is useful: if a task depends on contact, the training story should include force, not just video.

For home robots, that difference shows up everywhere:

- Opening a cabinet is a force task, not just a recognition task.

- Picking up a sponge is a deformable-object task, not just a grasp-box task.

- Loading a dishwasher is a collision, reach, and recovery task.

- Folding laundry is a cloth dynamics task.

- Carrying a mug is a slosh, grip, and path-planning task.

- Working near people is a safety and interruption task.

A simulated kitchen cannot solve all of those alone, but it can make weak claims visible. If a company says its robot can do chores but cannot explain whether the robot trained or tested against articulated drawers, varied object placements, contact forces, and layout changes, the claim is probably still early.

Which current robots would benefit most?

Simulation matters most for robots that interact with the home, not just move through it. A map-only robot needs navigation tests. A mobile manipulator needs navigation, reach, grasping, force, recovery, and human-safe behavior.

Here is how the idea maps to robots in the ui44 database:

Robot

- ui44 database snapshot

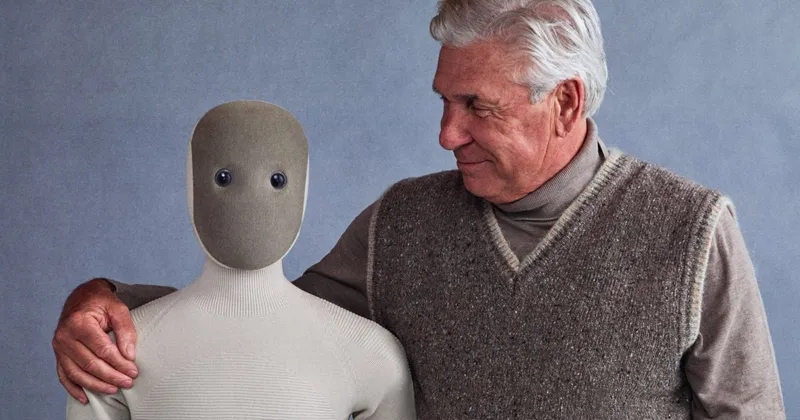

- $20,000 pre-order home humanoid; 167 cm, 30 kg, about 4 hours of battery life; soft body and household-chore positioning

- What simulation needs to prove

- Can it practice full household routines, not only isolated manipulation clips? Buyers need evidence around object recovery, human interruptions, and safe force limits.

Robot

- ui44 database snapshot

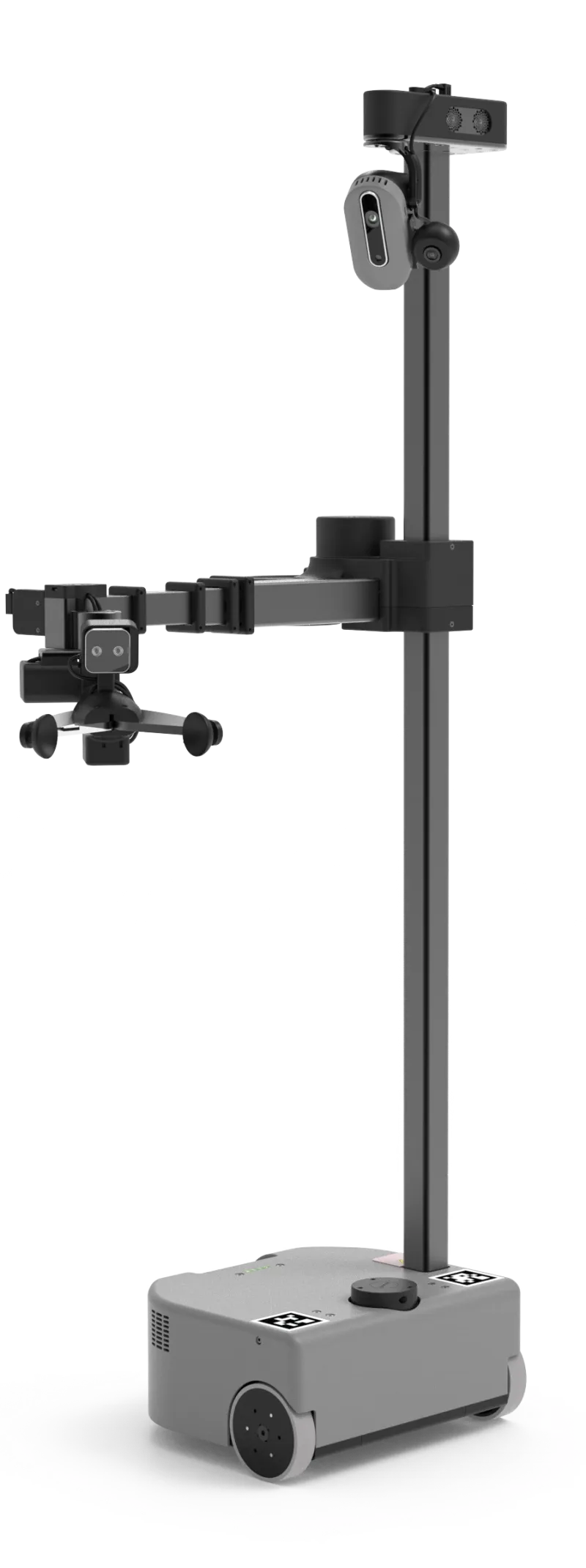

- $29,950 available mobile manipulator; 160 cm tall, 46 kg, 8-hour light-load runtime, ROS 2/Python SDK, 2.5 kg arm payload extended and 4 kg retracted

- What simulation needs to prove

- Can simulated kitchens match its real reach, payload, wrist camera, omnidirectional base, and drawer/cabinet interactions closely enough to improve assistive pilots?

Robot

- ui44 database snapshot

- Active industrial humanoid; no public price; 173 cm, 61 kg, about 5 hours of battery life, Helix VLA, 20 kg payload; not available for consumer purchase

- What simulation needs to prove

- Can factory-trained manipulation transfer into cluttered homes, or does the robot still need a separate household simulation and validation stack?

Robot

- ui44 database snapshot

- $13,500 research humanoid; 132 cm, 35 kg, about 2 hours of battery life; optional dexterous hands and ROS 2 support

- What simulation needs to prove

- Can low-cost research hardware survive enough sim-to-real iteration to become a useful home-development platform rather than a walking demo?

Robot

- ui44 database snapshot

- Research platform around $70,000; 50 kg, two 7-DoF arms, 3 kg payload per arm, ROS 2/Python SDK, Gazebo and MuJoCo support

- What simulation needs to prove

- Can open-source simulation workflows produce repeatable household manipulation benchmarks across labs, not just one-off demos?

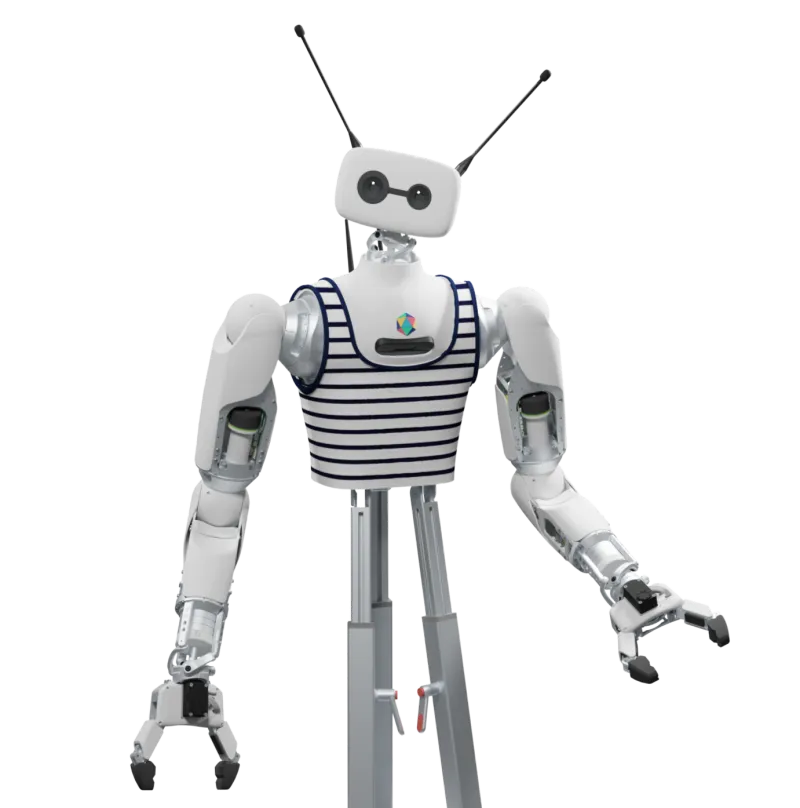

Robot

- ui44 database snapshot

- Coming-soon wheeled household robot concept with official claims around 22 DoF, on-device OmniSense VLA, and chores such as grasping, opening, organizing, laundry, and beverage prep

- What simulation needs to prove

- Can SwitchBot show household-scene training and real-room validation for those chore claims before asking buyers to believe the concept?

| Robot | ui44 database snapshot | What simulation needs to prove |
|---|---|---|
| 1X NEO | $20,000 pre-order home humanoid; 167 cm, 30 kg, about 4 hours of battery life; soft body and household-chore positioning | Can it practice full household routines, not only isolated manipulation clips? Buyers need evidence around object recovery, human interruptions, and safe force limits. |
| Hello Robot Stretch 4 | $29,950 available mobile manipulator; 160 cm tall, 46 kg, 8-hour light-load runtime, ROS 2/Python SDK, 2.5 kg arm payload extended and 4 kg retracted | Can simulated kitchens match its real reach, payload, wrist camera, omnidirectional base, and drawer/cabinet interactions closely enough to improve assistive pilots? |
| Figure 03 | Active industrial humanoid; no public price; 173 cm, 61 kg, about 5 hours of battery life, Helix VLA, 20 kg payload; not available for consumer purchase | Can factory-trained manipulation transfer into cluttered homes, or does the robot still need a separate household simulation and validation stack? |
| Unitree G1 | $13,500 research humanoid; 132 cm, 35 kg, about 2 hours of battery life; optional dexterous hands and ROS 2 support | Can low-cost research hardware survive enough sim-to-real iteration to become a useful home-development platform rather than a walking demo? |
| Reachy 2 | Research platform around $70,000; 50 kg, two 7-DoF arms, 3 kg payload per arm, ROS 2/Python SDK, Gazebo and MuJoCo support | Can open-source simulation workflows produce repeatable household manipulation benchmarks across labs, not just one-off demos? |
| SwitchBot onero H1 | Coming-soon wheeled household robot concept with official claims around 22 DoF, on-device OmniSense VLA, and chores such as grasping, opening, organizing, laundry, and beverage prep | Can SwitchBot show household-scene training and real-room validation for those chore claims before asking buyers to believe the concept? |

The pattern is clear. The more a robot touches the world, the more simulation quality matters. Navigation-only robots can get away with map tests. Manipulators need physics tests.

What should a simulated kitchen include?

A good simulated kitchen is not a pretty 3D render. It is a test harness. For home robots, it should include at least seven things.

1. Articulated objects

Cabinets, drawers, dishwasher racks, trash lids, appliance doors, faucets, and medicine cabinets should open and close with limits. The exact motion matters. If a drawer only exists as a static mesh, the robot is not learning the hard part.
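To make "open and close with limits" concrete, here is a minimal Python sketch of a drawer modeled as a sliding joint with end stops. The class name `PrismaticJoint` and the 0.45 m travel are illustrative assumptions, not values from any simulator or product mentioned here.

```python
from dataclasses import dataclass


@dataclass
class PrismaticJoint:
    """A sliding joint, such as a drawer, with travel limits in meters."""
    lower: float = 0.0
    upper: float = 0.45   # hypothetical maximum drawer travel
    position: float = 0.0

    def move(self, delta: float) -> float:
        """Apply a commanded displacement, clamped to the joint limits."""
        self.position = min(self.upper, max(self.lower, self.position + delta))
        return self.position

    def open_fraction(self) -> float:
        """Return how far the drawer is open, from 0.0 (shut) to 1.0 (end stop)."""
        return (self.position - self.lower) / (self.upper - self.lower)


drawer = PrismaticJoint()
drawer.move(0.30)   # first pull: drawer is partly open
drawer.move(0.30)   # second pull: drawer hits its end stop instead of sliding on
print(drawer.position, drawer.open_fraction())
```

A static mesh has no equivalent of `move`, so a policy trained against it never experiences the drawer stopping short or reaching its end stop.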

2. Contact and collision data

The robot needs to know when it is pushing, scraping, pinching, sliding, or lifting. Collision meshes, friction, mass, and contact behavior are more useful than visual realism alone. This is where VFA-style thinking becomes practical.

3. Variation at scale

One kitchen layout is a demo. Thousands of variations start to look like training. Counter height, handle style, cabinet finish, object placement, lighting, clutter, rug edges, chair legs, and narrow walkways all need to vary. Physical Imagine's pitch around parametric variation is interesting for exactly this reason: homes are a distribution, not a single room.
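The idea of treating homes as a distribution can be sketched in a few lines of Python. This is a simplified illustration, not Physical Imagine's or NVIDIA's tooling: the parameter names, ranges, and handle styles below are all assumptions chosen for the example.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class KitchenVariant:
    counter_height_cm: float
    handle_style: str
    lighting_lux: int
    clutter_items: int


# Hypothetical option list; a real scene library would define its own.
HANDLE_STYLES = ["bar", "knob", "recessed", "cup"]


def sample_kitchen(rng: random.Random) -> KitchenVariant:
    """Draw one kitchen configuration from assumed parameter ranges."""
    return KitchenVariant(
        counter_height_cm=round(rng.uniform(85.0, 95.0), 1),
        handle_style=rng.choice(HANDLE_STYLES),
        lighting_lux=rng.randint(100, 800),
        clutter_items=rng.randint(0, 12),
    )


def sample_kitchens(n: int, seed: int = 0) -> list[KitchenVariant]:
    """Generate n variants; a fixed seed keeps a benchmark set reproducible."""
    rng = random.Random(seed)
    return [sample_kitchen(rng) for _ in range(n)]


variants = sample_kitchens(1000)
print(len(variants), variants[0])
```

Seeding the generator matters for buyers as well as engineers: a published benchmark is only comparable across robots if everyone is tested on the same sampled kitchens.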

4. Hardware-faithful robot models

A simulated robot must match the real robot's reach, camera placement, payload, joint limits, wheelbase, gripper geometry, and blind spots. A policy that works with a perfect virtual arm may fail on a real 2.5 kg payload limit or a wrist camera with occlusion.

5. Failure recovery

The important question is not whether the robot succeeds once. It is what happens after the cup slips, the drawer rebounds, the towel folds over the gripper, or a person walks through the path. Simulated failures should be part of the training distribution.

6. Human-present safety cases

A home robot shares space with people, pets, and fragile objects. Simulation should test yielding, stopping, rerouting, force limits, and ambiguous human motion. It should not only test empty-room efficiency.

7. Real-world validation

Simulation is a pre-flight check, not a replacement for homes. The strongest claims will pair simulated training with real-room results: number of homes, number of task attempts, success rate, human interventions, average completion time, and common failure modes.
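The numbers listed above are straightforward to compute once trials are logged consistently. The following Python sketch assumes a hypothetical `Trial` record and made-up sample data; it shows the shape of the report, not any vendor's actual results.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Trial:
    home_id: str
    task: str
    success: bool
    interventions: int                  # times a human had to step in
    seconds: float                      # wall-clock duration of the attempt
    failure_mode: Optional[str] = None  # filled in only for failed attempts


def summarize(trials: list) -> dict:
    """Aggregate real-room trials into the figures a buyer should ask for."""
    successes = [t for t in trials if t.success]
    failures = Counter(
        t.failure_mode for t in trials if not t.success and t.failure_mode
    )
    return {
        "homes": len({t.home_id for t in trials}),
        "attempts": len(trials),
        "success_rate": len(successes) / len(trials) if trials else 0.0,
        "interventions": sum(t.interventions for t in trials),
        "avg_completion_s": mean(t.seconds for t in successes) if successes else None,
        "failure_modes": failures.most_common(),
    }


# Illustrative data only.
trials = [
    Trial("home-a", "open_drawer", True, 0, 40.0),
    Trial("home-a", "open_drawer", False, 1, 90.0, "missed_handle"),
    Trial("home-b", "place_cup", True, 0, 50.0),
    Trial("home-b", "place_cup", True, 1, 60.0),
]
report = summarize(trials)
print(report)
```

Note that average completion time is computed over successful attempts only, which is one of several reasonable conventions; a vendor report should state which one it uses.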

The buyer checklist for sim-to-real claims

- What rooms were simulated? A warehouse bin and a kitchen drawer are very

- Were objects articulated? Doors, drawers, knobs, racks, lids, and faucets

- Was contact modeled? Look for force, mass, inertia, friction, collision,

- How much variation was used? One showroom kitchen is not enough.

- Does the simulated robot match the real robot? Reach, payload, gripper

- Were failures trained? Slips, occlusions, blocked paths, partial grasps,

- What transferred to the real world? Ask for real-room success rates and

- What data leaves the home? If real homes are used for continued training,

Where simulation can mislead buyers

Simulation is powerful, but it is also easy to over-sell. A robot can look competent in a high-fidelity virtual kitchen and still fail in your kitchen.

There are several traps.

First, homes contain unknown objects. The 25,000-product library claim from Physical Imagine is impressive, but no library covers every handmade mug, warped cabinet, fraying rug, child's toy, pet bowl, charging cable, or oddly shaped appliance. The long tail is the home.

Second, materials are hard. Cloth, hair, liquids, crumbs, foam, flexible plastic, and overfilled bins do not behave like rigid blocks. Laundry and dishwashing are especially unforgiving because the robot has to deal with soft, slippery, and reflective things.

Third, people change the task while it is happening. A robot may train on a closed drawer and a cup in a known place. A person may open the drawer, move the cup, ask a different question, then step into the robot's path. Home robots need interactive resilience, not just policy playback.

Fourth, simulation can hide time. If a robot needs five minutes of careful motion to put away one cup, it may be technically impressive and practically annoying. Buyers should care about task time, noise, battery drain, and recovery steps.

That is why the best use of simulation is not as a replacement for real testing. It is a way to make real testing less random. Train across many simulated kitchens, find weak spots cheaply, then prove the final behavior in real homes.

How this changes the way to compare home robots

The old home-robot comparison was mostly hardware: height, weight, price, battery, sensors, payload, and whether it has arms. Those specs still matter. A 30 kg soft humanoid like NEO, a 46 kg mobile manipulator like Stretch 4, a compact $13,500 Unitree G1, and a research robot like Reachy 2 are not the same kind of product.

But the next comparison layer is training evidence. A robot with weaker-looking hardware but excellent task training may beat a more impressive robot that only has staged demos. A mobile manipulator with honest drawer, cabinet, and clutter benchmarks may be more credible than a humanoid with a beautiful walking video.

For ui44 comparisons, that means the useful future database fields are not just "has arms" or "supports ROS 2." They are things like:

- documented simulation support

- supported simulators and model fidelity

- household-scene benchmarks

- manipulation success rates

- recovery behavior after failed grasps

- number of real homes or pilots tested

- privacy model for training data

- published failure modes
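As a rough illustration, those fields could be captured in a record like the one below. This is a hypothetical Python sketch, not the actual ui44 schema: the class and field names are assumptions, and `None` or an empty list simply means nothing has been published.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class TrainingEvidence:
    documented_simulation_support: Optional[bool] = None
    supported_simulators: list[str] = field(default_factory=list)
    household_scene_benchmarks: list[str] = field(default_factory=list)
    manipulation_success_rate: Optional[float] = None
    recovery_behavior_documented: Optional[bool] = None
    real_homes_tested: Optional[int] = None
    training_data_privacy_model: Optional[str] = None
    published_failure_modes: list[str] = field(default_factory=list)


def missing_evidence(evidence: TrainingEvidence) -> list[str]:
    """List the fields for which no public evidence has been recorded."""
    return [
        f.name for f in fields(evidence)
        if getattr(evidence, f.name) is None or getattr(evidence, f.name) == []
    ]


# Example entry using only what this article states about Reachy 2.
reachy2 = TrainingEvidence(
    documented_simulation_support=True,
    supported_simulators=["Gazebo", "MuJoCo"],
)
print(missing_evidence(reachy2))
```

A record like this turns "what evidence is missing" into something that can be sorted and compared across robots rather than inferred from marketing copy.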

Some robots already expose pieces of this. Reachy 2 lists simulation support through Gazebo and MuJoCo. Stretch 4 keeps an open ROS 2/Python developer model and reference autonomy demos. Unitree G1 supports ROS 2 and development use. Figure 03 talks more about Helix VLA and industrial deployment than home-scene simulation. SwitchBot onero H1 makes broad household VLA claims, but detailed public validation is still limited.

That does not make one robot "best." It tells you what evidence is missing.

So, will simulated kitchens train the first useful home robots?

Probably not by themselves. But they may be one of the reasons the first useful home robots stop looking like fragile demos.

The most credible path is hybrid: simulation for scale, real homes for truth. A robot should practice thousands of drawer pulls, cabinet reaches, object placements, awkward grasps, and navigation failures in simulation before it ever bumps around a buyer's kitchen. Then it should prove the behavior in real homes with clear numbers.

That is especially important for expensive early robots. If someone is considering a $20,000 NEO pre-order, a $29,950 Stretch 4 research or assistive platform, or a five-figure humanoid development robot, they should not be satisfied with a polished clip. They should ask where the robot practiced, what failed, what transferred, and what data was used.

The home-robot industry does not need prettier demos. It needs chores that work after the demo table gets messy. Simulated kitchens are not the whole answer, but they are a much better question to ask.

For deeper comparisons, start with the robot pages for 1X NEO, Hello Robot Stretch 4, Unitree G1, and Reachy 2, or use /compare to line up specs side by side.

Database context

Use this article as a privacy verification workflow

Turn the article into a real verification pass

“Will Simulated Kitchens Train Home Robots?” already points you toward 6 linked robots, 6 manufacturers, and 4 countries inside the ui44 database. That matters because buyer guidance is easier to apply when you can move straight from a claim or warning to concrete product pages, manufacturer directories, component explainers, and country-level context, instead of treating the article as an isolated opinion piece. The fastest next step is to turn the article into a shortlist workflow: open the linked robot pages, verify which specs are actually published for those models, then compare the surrounding manufacturer and component context before you decide whether the underlying claim changes your buying plan.

For this topic, the useful discipline is to separate the editorial lesson from the catalog evidence. The article gives you the framing, but the robot pages tell you what each product actually ships with today: sensor stack, connectivity methods, listed price, release timing, category, and support-relevant compatibility notes. The manufacturer pages then show whether you are looking at a one-off launch, a broader lineup pattern, or a company that spans multiple categories. That layered workflow reduces the risk of buying on a single marketing phrase or a single support FAQ.

Use the robot pages to confirm which products actually expose cameras, microphones, Wi-Fi, or voice systems, then use the manufacturer pages to decide how much of the privacy question seems product-specific versus brand-wide. On this route cluster, NEO, Stretch 4, and Figure 03 form the fastest reality check. If you want a quick working shortlist, open Compare NEO, Stretch 4, and Figure 03 next, then keep this article open as the reasoning layer while you compare structured data side by side.

Practical Takeaway

Every robot, manufacturer, category, component, and country reference below resolves to a real ui44 page, keeping the follow-up path grounded in database records rather than generic advice.

Suggested next steps in ui44

- Open NEO and note the listed sensors, connectivity methods, and voice stack before you interpret any policy claim.

- Cross-check the wider brand context on 1X Technologies so you can see whether the privacy question touches one model or a broader lineup.

- Use the linked component pages to confirm how common the relevant sensors and connectivity layers are across the database.

- Keep a short note of which policy layers you checked, which device features are actually present on the robot page, and which items still depend on region- or app-level confirmation.

- Finish with Compare NEO, Stretch 4, and Figure 03 so the policy reading sits next to structured product data.

Robot profiles worth opening next

Use the linked product pages as the evidence layer

The linked robot pages are where this article becomes operational. Instead of asking whether the headline is interesting, use the robot entries to inspect the actual mix of sensors, connectivity options, batteries, pricing, release timing, and stated capabilities attached to the products mentioned in the article. That is the easiest way to see whether the warning or opportunity described here affects one product family, a specific design pattern, or an entire buying lane.

NEO

1X Technologies · Humanoid · Pre-order

NEO is tracked on ui44 as a pre-order humanoid robot from 1X Technologies. The database currently records a listed price of $20,000, a release date of 2025-10-28, a battery life of about 4 hours, no disclosed charging time, and a published stack that includes RGB Cameras, Depth Sensors, and Tactile Skin plus Wi-Fi and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether NEO combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Household Chores, Tidying Up, and Safe Human Interaction with any cloud, app, or voice layers.

Stretch 4

Hello Robot · Home Assistants · Available

Stretch 4 is tracked on ui44 as an available Home Assistants robot from Hello Robot. The database currently records a listed price of $29,950, a release date of 2026-05-12, a battery life of 8 hours (light CPU load), no officially disclosed charging time, and a published stack that includes Wide-FOV depth sensing, High-resolution RGB cameras, and Calibrated RGB + depth perception plus its listed connectivity stack.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Stretch 4 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Mobile Manipulation, Omnidirectional Indoor Mobility, and Autonomous Mapping and Navigation with any cloud, app, or voice layers.

Figure 03

Figure AI · Humanoid · Active

Figure 03 is tracked on ui44 as an active humanoid robot from Figure AI. The database currently records no listed price (Price TBA), a release date of 2025-10-09, a battery life of about 5 hours, no disclosed charging time, and a published stack that includes Stereo Vision, Depth Cameras, and Force Sensors plus Wi-Fi and Bluetooth.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Figure 03 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Complex Manipulation, Warehouse Work, and Manufacturing Tasks with any cloud, app, or voice layers.

G1

Unitree · Humanoid · Available

G1 is tracked on ui44 as an available humanoid robot from Unitree. The database currently records a listed price of $13,500, a release date of 2024, a battery life of about 2 hours, no disclosed charging time, and a published stack that includes Depth Camera, 3D LiDAR, and 4 Microphone Array plus Wi-Fi 6 and Bluetooth 5.2.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether G1 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Bipedal Walking, Object Manipulation, and Dexterous Hands (optional Dex3-1) with any cloud, app, or voice layers.

Reachy 2

Pollen Robotics · Research · Active

Reachy 2 is tracked on ui44 as an active research robot from Pollen Robotics. The database currently records no listed price (Price TBA), a release date of 2024, no disclosed battery life or charging time, and a published stack that includes Stereo RGB Cameras (fish-eye), Time-of-Flight Depth Sensor (OAK-FFC ToF 33D), and RGB-D Camera (Orbbec Gemini 336) plus Wi-Fi and Ethernet.

For privacy-focused reading, this page matters because it shows the concrete device surface behind the policy discussion. Use it to verify whether Reachy 2 combines sensors and connectivity in a way that could change the in-home data footprint, and compare the listed capabilities such as Object manipulation (pick and place), VR teleoperation, and Autonomous navigation with any cloud, app, or voice layers.

Manufacturer context behind the article

Check whether this is one product story or a broader company pattern

Manufacturer pages add the privacy context that individual product pages cannot show on their own. They help you check whether cameras, microphones, cloud accounts, app controls, and policy assumptions appear across a broader lineup or stay tied to one specific product story.

1X Technologies

ui44 currently tracks 2 robots from 1X Technologies across 1 category. The company is grouped under Norway, and the current catalog footprint on ui44 includes NEO, EVE.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Hello Robot

ui44 currently tracks 2 robots from Hello Robot across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Stretch 3, Stretch 4.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Home Assistants as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Figure AI

ui44 currently tracks 2 robots from Figure AI across 1 category. The company is grouped under USA, and the current catalog footprint on ui44 includes Figure 03, Figure 02.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Unitree

ui44 currently tracks 2 robots from Unitree across 1 category. The company is grouped under China, and the current catalog footprint on ui44 includes H1, G1.

That wider brand context matters because privacy questions rarely stop at one FAQ page. A manufacturer route helps you see whether the article is centered on one premium model or on a company that has several relevant products and therefore more than one place where the same policy or app assumptions might matter. The category mix here currently points toward Humanoid as the most useful next route if you want to see whether this article reflects a wider pattern inside the brand.

Broaden the scan without leaving the database

Categories, components, and countries add the wider context

Category framing

Category pages are useful when the article touches a buying pattern that shows up across brands. A category route helps you confirm whether the linked products sit in a narrow niche or whether the same question should be tested across a larger field of alternatives.

Humanoid

The Humanoid category page currently groups 81 tracked robots from 58 manufacturers. ui44 describes this lane as: Full-size bipedal humanoid robots designed to work alongside humans. From factory floors to household tasks, these machines represent the cutting edge of robotics.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include NEO, EVE, Mornine M1.

Home Assistants

The Home Assistants category page currently groups 13 tracked robots from 12 manufacturers. ui44 describes this lane as: Arm-based household helpers — laundry folders, kitchen robots, and mobile manipulators that handle physical tasks at home.

That makes the category route a practical follow-up when you want to check whether the products linked in this article are typical for the lane or whether they sit at one edge of the market. Useful starting examples currently include Robody, Futuring 2 (F2), Stretch 3.

Country and ecosystem context

Country pages give extra context when support practices, launch sequencing, regulatory posture, or manufacturer mix matter. They are not a substitute for model-level verification, but they do help you see which ecosystems cluster together and which manufacturers sit in the same regional field when you broaden the search beyond the article headline.

Norway

The Norway route currently groups 2 tracked robots from 1 manufacturer in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like 1X Technologies make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

USA

The USA route currently groups 18 tracked robots from 12 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like Boston Dynamics, Figure AI, and Hello Robot make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

China

The China route currently groups 53 tracked robots from 15 manufacturers in ui44. That gives you a useful regional lens when the article points toward support practices, launch sequencing, or brand clusters that may share similar ecosystem assumptions.

On the current route, manufacturers like AGIBOT, Unitree Robotics, and Roborock make the page a good way to broaden the scan without losing the regional context that often shapes availability, documentation style, and adjacent alternatives.

Questions to answer before you move from reading to buying

A follow-up FAQ built from the entities already linked in this article

Frequently Asked Questions

Which page should I open first after reading “Will Simulated Kitchens Train Home Robots?”?

Start with NEO. That gives you a concrete product anchor for the article’s main claim. From there, branch into the manufacturer and component pages so you can tell whether the article is describing one specific model, a repeated brand pattern, or a wider technology issue that affects multiple shortlist options.

How do the manufacturer pages change the buying decision?

Manufacturer pages such as 1X Technologies help you zoom out from one article and one product. On ui44 they show lineup breadth, category spread, and the neighboring robots tied to the same company. That context is useful when you are deciding whether a risk belongs to a single model, whether it shows up across a brand’s portfolio, and whether you should keep looking at alternatives before committing.

When should I switch from reading to side-by-side comparison?

Move into Compare NEO, Stretch 4, and Figure 03 as soon as you understand the article’s main warning or promise. The article explains what to watch for, but the compare view is where you can check whether price, status, battery life, connectivity, sensors, and category fit still make the robot a good match for your own home and budget.

Where to go next in ui44

Keep the research chain inside the database

If you want to keep going, these follow-on pages give you the cleanest expansion path from article to research session. Open the comparison route first if you are deciding between products today. Open the manufacturer, category, and component routes if you still need to understand the broader pattern behind the claim.

Written by

ui44 Team

Published May 14, 2026
