Why it matters

What it tends to unlock

Higher-level planning, adaptation, and interaction quality, richer autonomy claims that can change the shortlist materially, and more flexible task handling when the vendor stack is mature enough.

Large language model integration, visual perception systems, autonomous locomotion appears on 1 tracked robot, in the Humanoid category. Use this page to understand why the signal matters, who relies on it most, and which live profiles deserve the first comparison click.

| Metric | Count |
|---|---|
| Tracked robots | 1 |
| Ready now | 1 |
| Manufacturers | 1 |
| Public prices | 0 |

What to verify

What runs on-device versus in the cloud, how branded AI labels map to real user-facing behavior, and whether updates and latency tradeoffs fit the intended job.

Coverage

The heaviest concentration is in Humanoid (1). Top manufacturers include Fourier (1).

Research brief

The useful questions here are how common Large language model integration, visual perception systems, autonomous locomotion really is, which robot classes depend on it, and which live profiles are worth opening before you compare the whole stack.

Verified 30d

1

1 in the last 90 days

Top category

Humanoid

1 tracked robot

Paired most often with

1 Realsense Camera, 1 Ring-shaped Microphone Sensor, and 6 RGB cameras (pure vision perception solution)

Decision brief

Source pack

Use the structure first: which categories lean on Large language model integration, visual perception systems, autonomous locomotion, which manufacturers repeat it, and what usually ships beside it.

Lead category

1 tracked robot currently anchors this label.

Most repeated manufacturer

1 tracked robot makes this the clearest manufacturer-level signal on the route.

Most common adjacent signal

1 shared robot pairs this component with 1 Realsense Camera.

| # | Name | Usage |
|---|---|---|
| 1 | Humanoid | 1 robot |

| # | Name | Usage |
|---|---|---|
| 1 | Fourier | 1 robot |

| # | Name | Shared robots |
|---|---|---|
| 1 | 1 Realsense Camera | 1 robot |
| 2 | 1 Ring-shaped Microphone Sensor | 1 robot |
| 3 | 6 RGB cameras (pure vision perception solution) | 1 robot |
| 4 | Ethernet | 1 robot |
| 5 | Force/Torque Sensors | 1 robot |
| 6 | IMU | 1 robot |

How to read the market

Category concentration tells you where the component is actually doing work, manufacturer repetition shows whether the signal is market-wide or vendor-specific, and pairings reveal which neighboring technologies usually ship alongside it.
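As a rough illustration of the three reads described above, the sketch below counts categories, manufacturers, and paired components for a list of robot records. The record layout and field names are assumptions made for this example, not the ui44 schema; the data mirrors the single profile listed on this page.

```python
from collections import Counter

# Hypothetical record layout; values are taken from the tables on this page.
robots = [
    {
        "name": "GR-1",
        "manufacturer": "Fourier",
        "category": "Humanoid",
        "paired_components": [
            "1 Realsense Camera",
            "1 Ring-shaped Microphone Sensor",
            "6 RGB cameras (pure vision perception solution)",
            "Ethernet",
            "Force/Torque Sensors",
            "IMU",
        ],
    },
]


def summarize(records):
    """Count robots per category, per manufacturer, and per paired component."""
    categories = Counter(r["category"] for r in records)
    manufacturers = Counter(r["manufacturer"] for r in records)
    # set() so a component listed twice on one robot is counted once.
    pairings = Counter(c for r in records for c in set(r["paired_components"]))
    return categories, manufacturers, pairings


categories, manufacturers, pairings = summarize(robots)
print(categories.most_common(1))
print(manufacturers.most_common(1))
print(pairings.most_common(3))
```

With a single record every count is 1, which is why concentration and repetition carry little weight on this route until more robots are tracked.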

The old card wall is replaced with a featured first-click strip and a dense inventory table so the route behaves like a serious directory.

Directory briefing

Open the clearest profiles first, then sweep the full inventory in a denser table. Featured cards are selected by readiness, image quality, and official source availability, so the first click is usually the most informative one.

| Metric | Count |
|---|---|
| Ready now | 1 |
| Public price | 0 |
| Official links | 1 |
| Featured now | 1 |

How to scan this directory

Best first clicks

These robots score highest on readiness, public detail quality, and image clarity, making them the fastest way to understand how Large language model integration, visual perception systems, autonomous locomotion shows up in practice.

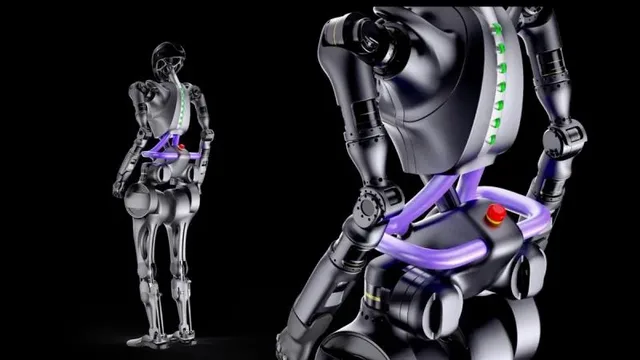

The Fourier GR-1 is a general-purpose humanoid robot unveiled in July 2023 at the World Artificial Intelligence Conference in Shanghai. Standing 1.65 meters tall and weighing 55 kg, it features up to 44 degrees of freedom and a peak joint torque of 230 N·m for agile bipedal locomotion. Designed for mass production, the GR-1 is aimed at research, rehabilitation, and real-world service applications. It can walk at up to 5 km/h and carry payloads approaching its own body weight. Fourier (formerly Fourier Intelligence), originally a medical and rehabilitation robotics company, developed the GR-1 as its first general-purpose humanoid platform; the current official product page describes LLM-powered interaction, one RealSense vision camera, one ring-shaped microphone sensor, and a six-RGB-camera pure-vision perception solution.

Public price

Price TBA

No public list price (contact sales)

Battery

2 hours (Humanoid.Guide; not manufacturer-published)

Shortlist read

Active in the catalog with enough detail to review immediately.

Quick answers

The short version of what this label means in the ui44 catalog, where it matters, and how to compare it without over-reading the marketing copy.

Large language model integration, visual perception systems, autonomous locomotion currently appears on 1 tracked robot from 1 manufacturer. That makes this route useful for both deep research and fast shortlist scanning, not just one-off editorial reading.

The strongest concentration is in Humanoid (1). Category mix is the fastest clue for whether this component behaves like baseline plumbing or a more selective differentiator.

1 of the 1 tracked profiles is currently marked Available or Active. That means the label has live market relevance here, but you should still open the profiles with public pricing or official links first before treating it as a clean buyer signal.

Start with readiness, official source quality, and the standout spec column in the inventory table. On component routes, those three signals usually remove weak profiles faster than reading every descriptive paragraph.

The strongest shared-stack signals here are 1 Realsense Camera (1), 1 Ring-shaped Microphone Sensor (1), and 6 RGB cameras (pure vision perception solution) (1). Use those pairings to branch into adjacent component pages when one label is too narrow for the decision.

No matching robots currently expose public pricing, so this route offers no price context yet and no price bracket should be read as the market. Use the directory to find transparent profiles first, then widen the sweep.

Start with Fourier (1). Repetition across manufacturers is often the clearest signal that the component is part of a stable market pattern rather than a one-off marketing callout.

The original long-form component research is still here, but collapsed so the main route can prioritize hierarchy and scan speed.

The baseline explanation of what Large language model integration, visual perception systems, autonomous locomotion is, why it matters, and how to think about it before comparing implementations.

Large language model integration, visual perception systems, autonomous locomotion is an AI component found in 1 robot tracked in the ui44 Home Robot Database. As an AI technology, it plays a specific role in enabling robot perception, interaction, or operation, depending on its implementation in each platform.

The AI platform is the cognitive engine of a robot. It encompasses the machine learning models, decision-making algorithms, and processing infrastructure that enable a robot to interpret sensor data, plan actions, and interact naturally with humans.

In the ui44 database, Large language model integration, visual perception systems, autonomous locomotion is categorized under AI components. For a comprehensive explanation of all component types, consult the components glossary.

The AI platform fundamentally determines a robot's intelligence, adaptability, and user experience. The AI stack also affects responsiveness, privacy, and the robot's ability to receive meaningful software updates.

Advanced AI handles unexpected situations and improves over time

Enables natural language understanding for voice commands

On-device vs. cloud processing affects both privacy and capability

Used in 1 robot across 1 category (Humanoid), indicating specialized rather than industry-wide use.

Robot AI systems typically combine several layers that work together to transform raw data into intelligent behavior. Modern robots increasingly use neural networks with some processing on-device and some in the cloud.

Perception AI

Converts raw sensor data into understanding — recognizing objects, faces, and spaces

Planning AI

Decides what actions to take based on current understanding and goals

Control AI

Executes planned movements with precision, managing motors and actuators

Interaction AI

Understands and generates human communication — voice, gestures, text
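The four layers above can be sketched as a chain of small functions. This is a toy illustration only: the single range reading, the 0.5 m threshold, the action names, and the 0.8 m/s speed are all hypothetical values chosen for the example, not figures from any robot on this page.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    obstacle_distance_m: float  # hypothetical single range reading


def perceive(obs: Observation) -> dict:
    """Perception: turn a raw reading into a symbolic fact."""
    return {"path_blocked": obs.obstacle_distance_m < 0.5}


def plan(world: dict) -> str:
    """Planning: choose an action from the current understanding."""
    return "stop" if world["path_blocked"] else "walk_forward"


def control(action: str) -> float:
    """Control: map the action to a forward velocity command in m/s."""
    return {"stop": 0.0, "walk_forward": 0.8}[action]


def interact(action: str) -> str:
    """Interaction: report the decision in plain language."""
    return "Something is in my way." if action == "stop" else "Path is clear."


def step(obs: Observation):
    """Run one pass through all four layers."""
    action = plan(perceive(obs))
    return control(action), interact(action)


print(step(Observation(obstacle_distance_m=0.3)))
print(step(Observation(obstacle_distance_m=2.0)))
```

Real stacks run these layers at different rates and often on different processors, which is one reason the on-device versus cloud split matters.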

Large language model integration, visual perception systems, autonomous locomotion Integration

Implementation varies by robot platform and manufacturer. Each robot integrates Large language model integration, visual perception systems, autonomous locomotion differently depending on system architecture, use case, and target tasks. Integration with other onboard AI subsystems and the main processing unit determines real-world performance.

Deeper technical framing, matched technology profiles, and the longer use-case treatment for Large language model integration, visual perception systems, autonomous locomotion.

In-depth technical analysis of 3 technology domains relevant to this component

While the sections above cover general AI principles, this analysis focuses on the technology domains relevant to Large language model integration, visual perception systems, autonomous locomotion based on its implementation characteristics: Large Language Model Integration, SLAM & Autonomous Navigation AI, and Computer Vision & Object Recognition.

Large language models (LLMs) represent a paradigm shift in robot AI capabilities. By integrating LLMs like GPT, Claude, or similar models, robots gain the ability to understand and generate natural language at a level that far exceeds traditional natural language processing approaches. This enables genuinely conversational interactions where the robot can handle ambiguous requests, follow complex multi-step instructions, explain its own reasoning, and engage in contextual dialogue that references previous interactions.

LLM integration in robotics typically follows one of two architectures. Cloud-based integration sends the user's transcribed speech to a remote LLM API and returns the generated response, offering access to the most capable models but introducing network latency and privacy considerations. Edge-based integration runs smaller, optimized language models directly on the robot's processor, providing faster responses and complete data privacy at the cost of reduced model capability. Some robots use a hybrid approach: handling simple, common requests on-device for low-latency responses while routing complex queries to cloud-based models for more sophisticated processing.
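A minimal sketch of the hybrid routing idea, assuming a fixed list of simple intents that the on-device model can handle. The intent list and the `route` function are hypothetical; no vendor on this page documents its routing logic in this form.

```python
# Hypothetical set of short commands an on-device model is assumed to handle.
SIMPLE_INTENTS = {"stop", "pause", "resume", "volume up", "volume down"}


def route(utterance: str, network_available: bool = True) -> str:
    """Return "edge" or "cloud" for a transcribed utterance."""
    text = utterance.strip().lower()
    if text in SIMPLE_INTENTS:
        return "edge"   # low-latency path for common requests
    if not network_available:
        return "edge"   # degrade to the smaller local model when offline
    return "cloud"      # complex queries go to the larger remote model


print(route("Stop"))
print(route("Tidy the room and tell me what you moved"))
print(route("Tidy the room and tell me what you moved", network_available=False))
```

The offline fallback is the part worth asking vendors about: it determines what the robot can still do when the connection drops.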

The practical impact of LLM integration extends beyond conversation. LLMs can serve as a robot's task planning layer, translating natural language instructions like 'clean up the living room and then check if the back door is locked' into a sequence of executable robot actions. They can also function as a reasoning layer for anomaly detection — understanding the semantic significance of sensor data (recognizing that a smoke alarm sound requires urgent alert rather than just logging an audio event). As the robotics industry moves toward foundation models that combine language understanding with physical world modeling, LLM integration is likely to become a standard rather than premium feature.
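One common safeguard when an LLM acts as a planning layer is to check its output against the actions the robot can actually execute. The sketch below assumes the model returns a JSON list of steps; the action names and the JSON shape are invented for illustration.

```python
import json

# Hypothetical action vocabulary; a real robot would expose its own.
ALLOWED_ACTIONS = {"navigate_to", "pick_up", "place", "check_lock", "report"}


def parse_plan(llm_output: str) -> list[dict]:
    """Parse an LLM-produced plan and reject steps outside the allowed set."""
    steps = json.loads(llm_output)
    if not isinstance(steps, list):
        raise ValueError("plan must be a JSON list of steps")
    for i, step in enumerate(steps):
        if not isinstance(step, dict) or step.get("action") not in ALLOWED_ACTIONS:
            raise ValueError(f"step {i} uses an unknown action: {step!r}")
    return steps


# Example output for an instruction like "check if the back door is locked".
example = (
    '[{"action": "navigate_to", "target": "back_door"},'
    ' {"action": "check_lock", "target": "back_door"}]'
)
plan = parse_plan(example)
for step in plan:
    print(step["action"], step["target"])
```

Rejecting unknown actions before execution keeps a hallucinated step from reaching the motion layer.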

Simultaneous Localization and Mapping (SLAM) is the AI backbone of autonomous robot navigation. SLAM algorithms solve the chicken-and-egg problem of needing a map to determine the robot's position, while simultaneously needing to know the position to build the map. By processing continuous sensor data — from LiDAR, cameras, wheel encoders, and IMUs — SLAM algorithms construct and continuously refine an environmental map while tracking the robot's position within it.

Modern robot SLAM implementations use graph-based optimization, where the map is represented as a graph of sensor observations and spatial relationships that are jointly optimized to minimize overall error. Visual SLAM (vSLAM) uses camera imagery, identifying and tracking visual features like corners, edges, and textures. LiDAR SLAM uses point cloud matching to determine the robot's displacement between scans. Multi-sensor SLAM fuses both visual and geometric data for more robust localization. The choice of SLAM approach affects the robot's mapping accuracy, computational requirements, and resilience to challenging environments.
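To make the graph-based idea concrete, here is a deliberately tiny one-dimensional pose graph solved with plain gradient descent. Production SLAM systems optimize 2D or 3D poses with sparse nonlinear solvers; the function name, the measurements, and the step size below are assumptions for this toy case only.

```python
def optimize_pose_graph(num_poses, constraints, iterations=500, step=0.1):
    """Minimize the sum of squared residuals over relative-position constraints.

    constraints: (i, j, measured) tuples meaning x[j] - x[i] should equal
    `measured`. Pose 0 is held at 0.0 to anchor the map.
    """
    x = [0.0] * num_poses
    for _ in range(iterations):
        grad = [0.0] * num_poses
        for i, j, measured in constraints:
            residual = (x[j] - x[i]) - measured
            grad[j] += 2.0 * residual
            grad[i] -= 2.0 * residual
        for k in range(1, num_poses):  # skip pose 0 so it stays anchored
            x[k] -= step * grad[k]
    return x


# Two odometry steps of 1.0 each, plus a loop-closure style measurement
# saying pose 2 is 2.2 from pose 0. The optimizer spreads the disagreement.
constraints = [(0, 1, 1.0), (1, 2, 1.0), (0, 2, 2.2)]
poses = optimize_pose_graph(3, constraints)
print([round(p, 3) for p in poses])
```

The optimized poses land between what odometry alone and the loop closure alone would suggest, which is the joint error minimization the paragraph describes. The fixed step size works for this small graph but would need tuning, or a proper solver, for larger ones.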

Path planning algorithms build on the SLAM-generated map to compute efficient, collision-free routes from the robot's current position to its destination. These range from classical graph search algorithms (A*, Dijkstra) that find optimal paths on grid maps, to sampling-based planners (RRT, PRM) that handle complex high-dimensional planning problems, to learned planners that use reinforcement learning to discover navigation strategies from experience. Dynamic obstacle avoidance layers handle moving people, pets, and objects that were not present in the stored map, combining real-time sensor data with predictive models of how obstacles might move.
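For reference, a compact A* search on a 4-connected grid looks roughly like this. The grid encoding (`#` for blocked cells) and the unit step cost are choices made for the example; a real planner would work on the robot's own map representation.

```python
import heapq


def astar(grid, start, goal):
    """Return a shortest path as a list of (row, col) cells, or None."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):
        # Manhattan distance: admissible for 4-connected unit-cost moves.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {}
    best_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost[cell]:
            continue  # stale heap entry; a cheaper route was found already
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (cell[0] + dr, cell[1] + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]] == "#":
                continue
            new_cost = cost + 1
            if new_cost < best_cost.get(nxt, float("inf")):
                best_cost[nxt] = new_cost
                came_from[nxt] = cell
                heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None


grid = [
    "....",
    ".##.",
    "....",
]
path = astar(grid, (0, 0), (2, 3))
print(path)
```

Dynamic obstacle avoidance typically sits on top of a planner like this, re-running or locally adjusting the path as new sensor data arrives.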

Computer vision AI transforms raw camera imagery into semantic understanding of the robot's environment. Object detection algorithms identify and locate specific items in the visual field — furniture, people, pets, cables, shoes, and other common household objects. Semantic segmentation classifies every pixel in the image into categories (floor, wall, furniture, person, pet), providing a complete scene understanding rather than just identifying individual objects. Instance segmentation goes further, distinguishing between individual objects of the same class (this chair vs. that chair).
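The difference between semantic and instance output can be shown with a toy mask. The sketch below takes a per-cell class map (the semantic result) and splits one class into separate blobs with a flood fill. Real instance segmentation uses learned models and handles touching or overlapping objects; this only illustrates what the two outputs look like. The class letters are made up for the example.

```python
def label_instances(mask, target):
    """Assign an instance id to each 4-connected blob of `target` cells."""
    rows, cols = len(mask), len(mask[0])
    labels = [[0] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != target or labels[r][c]:
                continue
            next_id += 1
            labels[r][c] = next_id
            stack = [(r, c)]
            while stack:
                cr, cc = stack.pop()
                for nr, nc in ((cr + 1, cc), (cr - 1, cc), (cr, cc + 1), (cr, cc - 1)):
                    if (
                        0 <= nr < rows
                        and 0 <= nc < cols
                        and mask[nr][nc] == target
                        and not labels[nr][nc]
                    ):
                        labels[nr][nc] = next_id
                        stack.append((nr, nc))
    return labels, next_id


# Semantic map: F = floor, C = chair. Both chairs share the class "C".
semantic = [
    "FFFFFF",
    "FCCFCC",
    "FCCFCC",
    "FFFFFF",
]
labels, count = label_instances(semantic, "C")
print(count)
for row in labels:
    print(row)
```

The semantic map only says which cells are chair; the labeled output distinguishes this chair from that chair.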

Modern robot vision systems use pre-trained deep learning models fine-tuned on robotics-specific datasets. Base models trained on millions of internet images provide general visual understanding, which is then specialized through fine-tuning on images captured from the robot's perspective — typically low to the ground, with specific lighting conditions and viewing angles that differ from standard photography datasets. Transfer learning allows manufacturers to develop capable vision systems without collecting the enormous datasets that would be required to train models from scratch.

Practical object recognition in home environments presents unique challenges. Household items appear in highly variable conditions — different lighting throughout the day, partial occlusion by furniture or other objects, and extreme pose variations (a shoe on its side looks very different from one standing upright). Pet detection must handle multiple breeds with dramatically different appearances. Person detection must work with varying clothing, positions (standing, sitting, lying down), and distances. The best robot vision systems achieve these capabilities through extensive training data diversity and real-world testing, resulting in recognition systems that are robust enough for reliable autonomous operation in the unpredictable home environment.

In the ui44 database, Large language model integration, visual perception systems, autonomous locomotion is currently tracked exclusively in the GR-1 by Fourier. This humanoid robot integrates Large language model integration, visual perception systems, autonomous locomotion as part of a total technology stack comprising 8 components: 5 sensors, 2 connectivity modules, and a Large language model integration, visual perception systems, autonomous locomotion AI platform.

The Fourier GR-1 is a general-purpose humanoid robot unveiled in July 2023 at the World Artificial Intelligence Conference in Shanghai. Standing 1.65 meters tall and weighing 55 kg, it features up to 44 degrees of freedom and a peak joint torque of 230 N·m for agile bipedal locomotion. Designed for mass production, the GR-1 is aimed at research, rehabilitation, and real-world service applications.…

Visit the full GR-1 specification page for complete technical details and availability information.

Beyond the high-level overview, understanding the technical foundations of AI technologies like Large language model integration, visual perception systems, autonomous locomotion helps buyers and researchers evaluate implementations more critically.

Robot AI systems are built on layers of computational models, each handling different aspects of intelligence.

AI performance trade-offs — the accuracy-latency-energy triangle — fundamentally shape design decisions.

The AI landscape in robotics has undergone several paradigm shifts.

Classical robotics: hand-crafted rules and explicit programming

Machine learning era: data-driven approaches — learning from examples

Deep learning: end-to-end systems learning directly from raw sensor data

Foundation models & LLMs: broad world knowledge and natural language understanding

Current frontier: embodied AI — models that understand physics and spatial reasoning

Current robot AI has significant limitations that buyers should understand.

Key application domains for AI technologies like Large language model integration, visual perception systems, autonomous locomotion.

AI enables robots to make decisions in real time without human input. Whether it's choosing the optimal cleaning path, deciding when to return to the charging dock, or determining how to respond to an unexpected obstacle, the AI platform processes sensor data and selects the best course of action from its learned repertoire.

Modern AI platforms, especially those leveraging large language models, allow robots to understand and respond to conversational commands. This goes beyond simple keyword recognition — advanced AI can handle ambiguous requests, follow multi-step instructions, and maintain context across a conversation.

Some AI platforms allow robots to improve their performance over time by learning from experience. A robot might learn the most efficient cleaning route for your specific home, adapt to your daily routines, or improve its object recognition based on items it encounters repeatedly.

AI can monitor the robot's own systems, predicting when components might fail or need maintenance. By analyzing patterns in motor performance, battery degradation, and sensor accuracy, AI-equipped robots can alert users to potential issues before they cause problems.
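A simple form of this kind of monitoring compares recent readings against a healthy baseline. The sketch below assumes a stream of motor current readings; the window sizes, the threshold of 3 standard deviations, and the sample values are illustrative, not taken from any manufacturer.

```python
from statistics import mean, stdev


def drift_alert(readings, baseline_size=20, recent_size=5, threshold=3.0):
    """Flag when the recent mean drifts far from the baseline mean.

    Drift is measured in baseline standard deviations.
    """
    if len(readings) < baseline_size + recent_size:
        return False  # not enough history to judge
    baseline = readings[:baseline_size]
    recent = readings[-recent_size:]
    spread = stdev(baseline)
    if spread == 0:
        return mean(recent) != mean(baseline)
    return abs(mean(recent) - mean(baseline)) / spread > threshold


# Hypothetical motor current in amperes: steady, then a sustained rise.
healthy = [1.0, 1.1] * 10
print(drift_alert(healthy + [1.0, 1.1, 1.0, 1.1, 1.0]))
print(drift_alert(healthy + [1.5] * 5))
```

Production systems generally use richer models, but the principle is the same: establish what normal looks like, then alert on sustained deviation rather than single spikes.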

AI platforms enable sophisticated task planning — breaking complex goals into executable steps, scheduling activities around user preferences, and re-planning when circumstances change. This capability is essential for robots that handle multiple responsibilities or operate on complex schedules.

Visit each robot's detail page to see which capabilities are available on specific models.

Manufacturer mix, specs context, price context, category overlap, and adjacent components worth branching into next.

Large language model integration, visual perception systems, autonomous locomotion appears in 1 robot category: Humanoid.

Technologies most often paired with Large language model integration, visual perception systems, autonomous locomotion across 1 robot.

Browse the full components directory or see the components glossary for detailed explanations of each technology.

347 other AI technologies tracked in ui44, ranked by adoption.

Browse all AI components or use the robot comparison tool to evaluate how different AI configurations perform across specific robot models.

The AI landscape in robotics is undergoing a transformation driven by advances in large language models, multimodal AI, and embodied intelligence research.

Foundation models for robotics

Purpose-built models that understand physics, spatial reasoning, and manipulation — enabling generalization to new tasks

On-device vs. cloud debate

Privacy-conscious buyers prefer local processing; cloud-connected robots benefit from more powerful, frequently updated models

Open-source frameworks

ROS 2 and PyTorch for robotics are lowering barriers, enabling more manufacturers to develop capable AI platforms

Industry Adoption Snapshot

Large language model integration, visual perception systems, autonomous locomotion is adopted by 1 robot from 1 manufacturer in the ui44 database, providing a data-driven view of real-world deployment patterns.

Certifications carried by robots incorporating Large language model integration, visual perception systems, autonomous locomotion, indicating compliance with safety, EMC, and quality standards.

Platform compatibility, voice integration, and AI capabilities across robots with Large language model integration, visual perception systems, autonomous locomotion.

The long-form buyer, maintenance, and troubleshooting material kept available without forcing it into the main scan path.

If Large language model integration, visual perception systems, autonomous locomotion is an important factor in your robot selection, here are key considerations to guide your decision.

On-device vs. cloud

On-device AI works without internet but may be less powerful

Learning capability

Can the robot improve and adapt to your specific home over time?

Natural language

How well does it understand conversational voice commands?

Update frequency

Does the manufacturer regularly ship AI improvements?

Privacy

What data is sent to the cloud, and how is it protected?

A component is only as good as its integration. Check how the manufacturer has incorporated Large language model integration, visual perception systems, autonomous locomotion into the overall robot design and software stack.

Review what other AI technologies are paired with Large language model integration, visual perception systems, autonomous locomotion in each robot; see the related components section.

Make sure the robot's category matches your use case. Large language model integration, visual perception systems, autonomous locomotion serves different roles in different robot types.

Consider the manufacturer's reputation for software updates, support, and component reliability.

Compare Before You Buy

Use the ui44 comparison tool to evaluate robots with Large language model integration, visual perception systems, autonomous locomotion side by side.

AI components present a unique maintenance profile because much of their capability is defined by software rather than hardware. This means AI performance can improve through updates but is also vulnerable to degradation if cloud services are discontinued or software support ends. Understanding the AI maintenance model is critical for assessing a robot's long-term value proposition.

The hardware that runs AI workloads — processors, memory, and neural network accelerators — is highly durable solid-state electronics. Physical failure of AI processing hardware is rare under normal operating conditions.

AI maintenance primarily involves keeping the robot's software stack updated. Firmware updates often include improved AI models, bug fixes for edge cases in perception or navigation, and new capabilities unlocked by algorithmic improvements.

AI future-proofing depends heavily on the manufacturer's ongoing investment in software development and the robot's computational headroom. Robots designed with more processing power than initially needed have room to run improved AI models in future updates.

For the 1 robot in the ui44 database using Large language model integration, visual perception systems, autonomous locomotion, we recommend checking the individual robot page for manufacturer-specific maintenance guidance and support documentation. Each manufacturer has different support policies, update frequencies, and warranty terms that affect the long-term ownership experience of its AI technologies.

AI-related issues in robots often manifest as degraded performance rather than complete failures. The robot may navigate less efficiently, misrecognize objects, respond slowly to commands, or make decisions that seem illogical. Diagnosing AI issues requires understanding whether the problem is in the AI software, the input data feeding the AI, or the processing hardware running the AI models.

For model-specific troubleshooting, visit the individual robot page for the 1 robot using Large language model integration, visual perception systems, autonomous locomotion. Manufacturers provide model-specific support resources and diagnostic tools for their AI implementations.

What to do next

This page should hand you off to the next useful comparison step, not strand you at the bottom of a long detail route.

Widen the layer

Open the full AI workbench when Large language model integration, visual perception systems, autonomous locomotion is only one part of the decision and you need the broader market map.

Side-by-side check

Move from label-level research into direct robot comparison once you know which profiles are documented well enough to trust.

Adjacent signal

This is the most common neighboring component on robots that already use Large language model integration, visual perception systems, autonomous locomotion, so it is the fastest next branch if you need stack context.