Vision-Language-Action Models Are Replacing Modular Robotics Pipelines

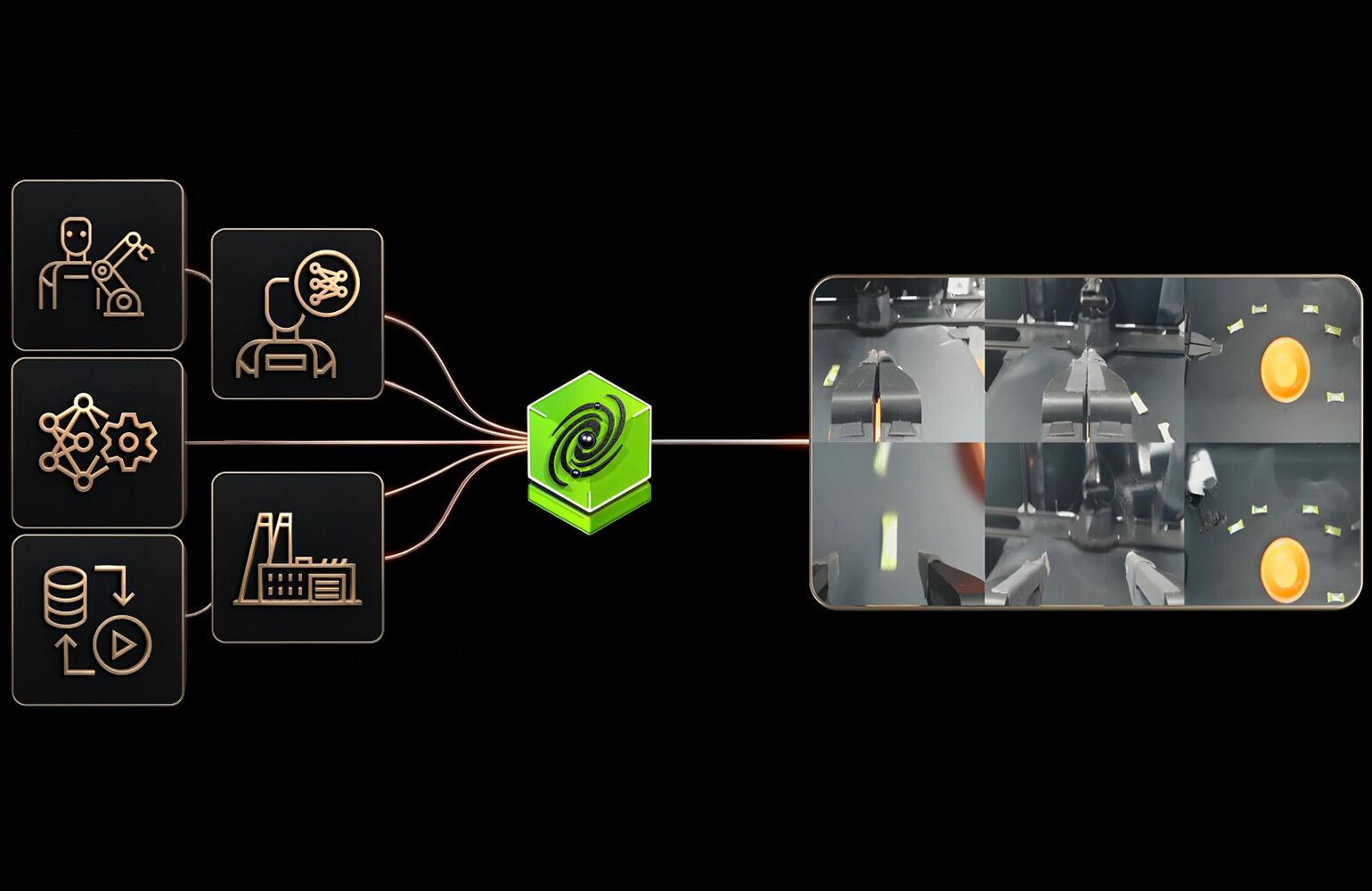

A new class of AI models called vision-language-action (VLA) systems is changing how humanoid robots process instructions and interact with the world. Instead of separate modules for seeing, planning, and moving, VLAs like Figure AI's Helix, NVIDIA's GR00T N1, and Google DeepMind's RT-1 combine vision, language understanding, and motor control into a single end-to-end model. Helix uses a dual-system design — a large vision-language backbone handles high-level reasoning while a smaller action model runs at high frequency for precise manipulation. GR00T N1 takes a generalist approach, training across multiple robot types and tasks. The practical result: robots that can follow natural language instructions, carry out multi-step tasks, and adapt to new environments without hand-tuned pipelines. Several teams have demonstrated on-device deployment with reduced latency. The shift matters because it moves humanoid robots closer to general-purpose assistants that understand what you ask and can actually do it.